Ubiquitous Pragmatic Trial Impact Analysis: How to Prevent a Year of Death and Suffering for 84 Cents

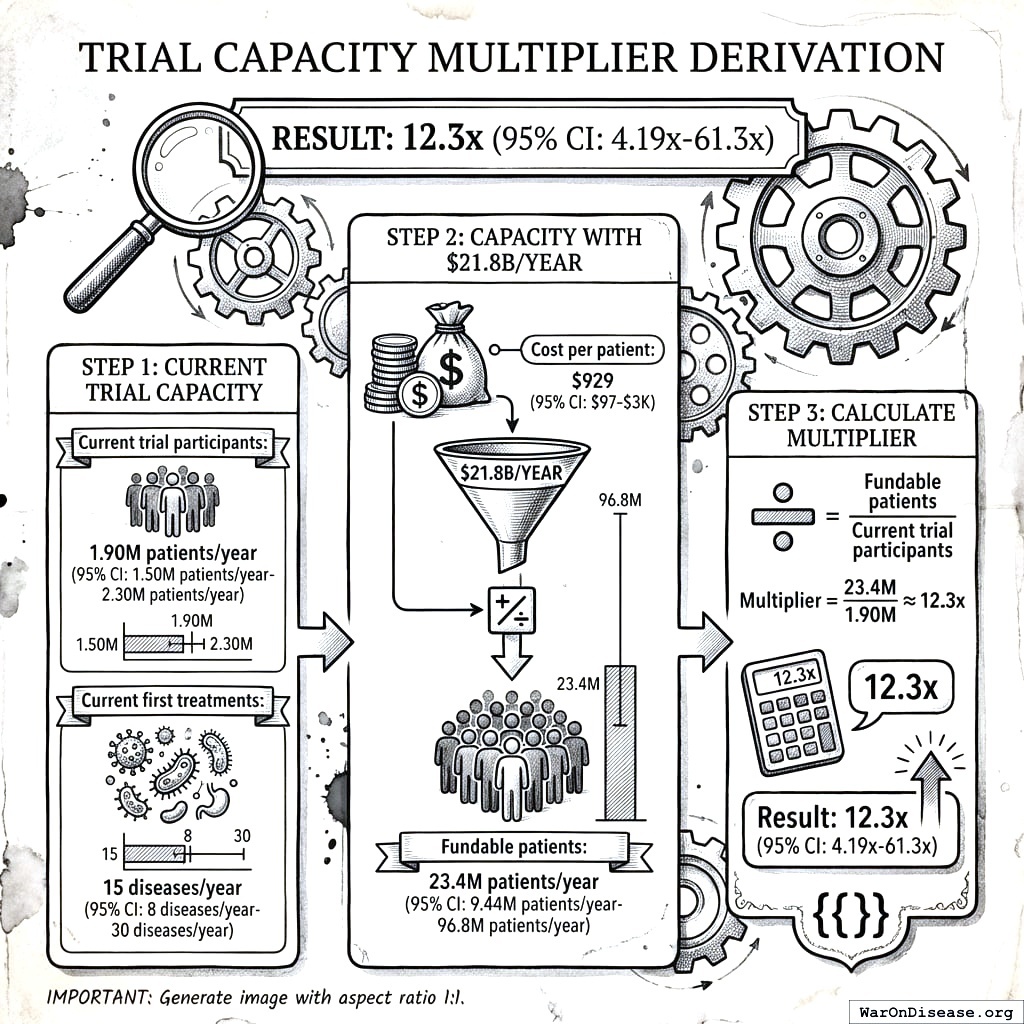

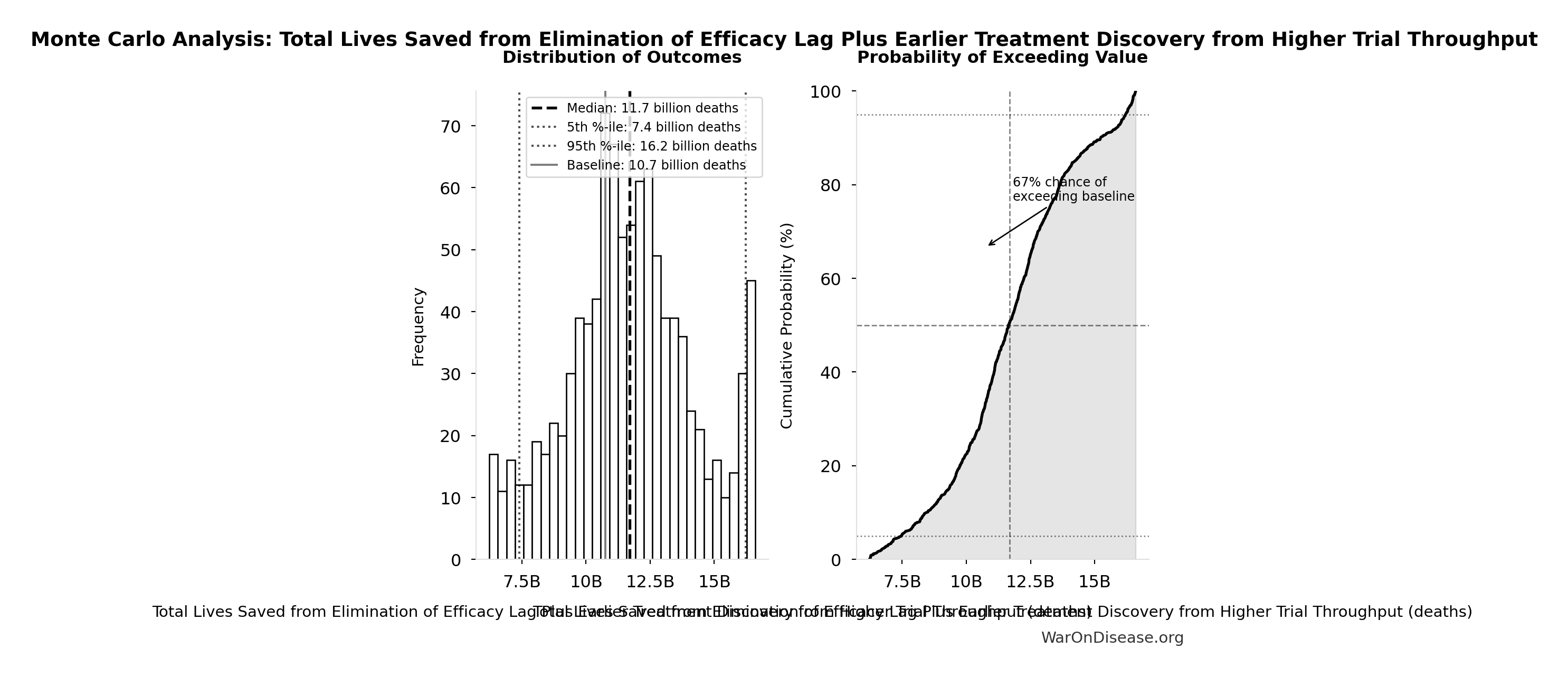

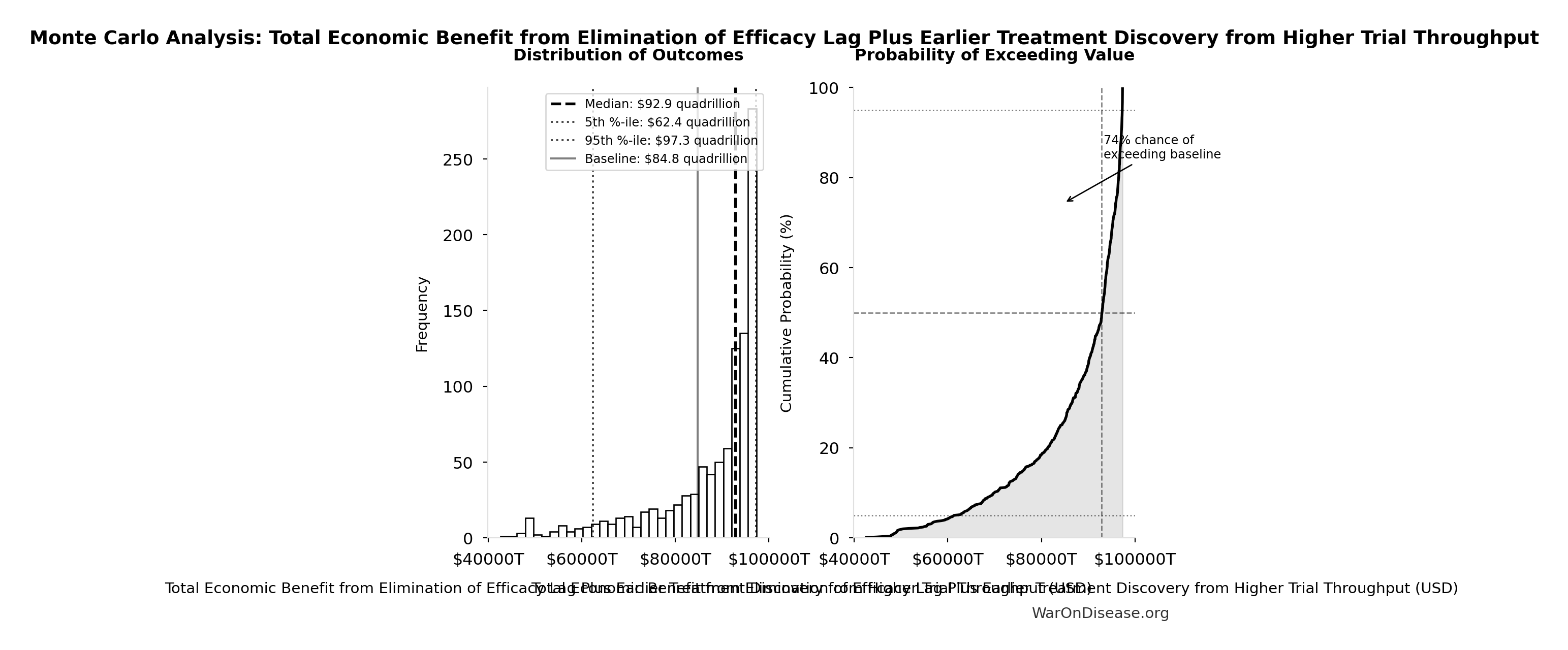

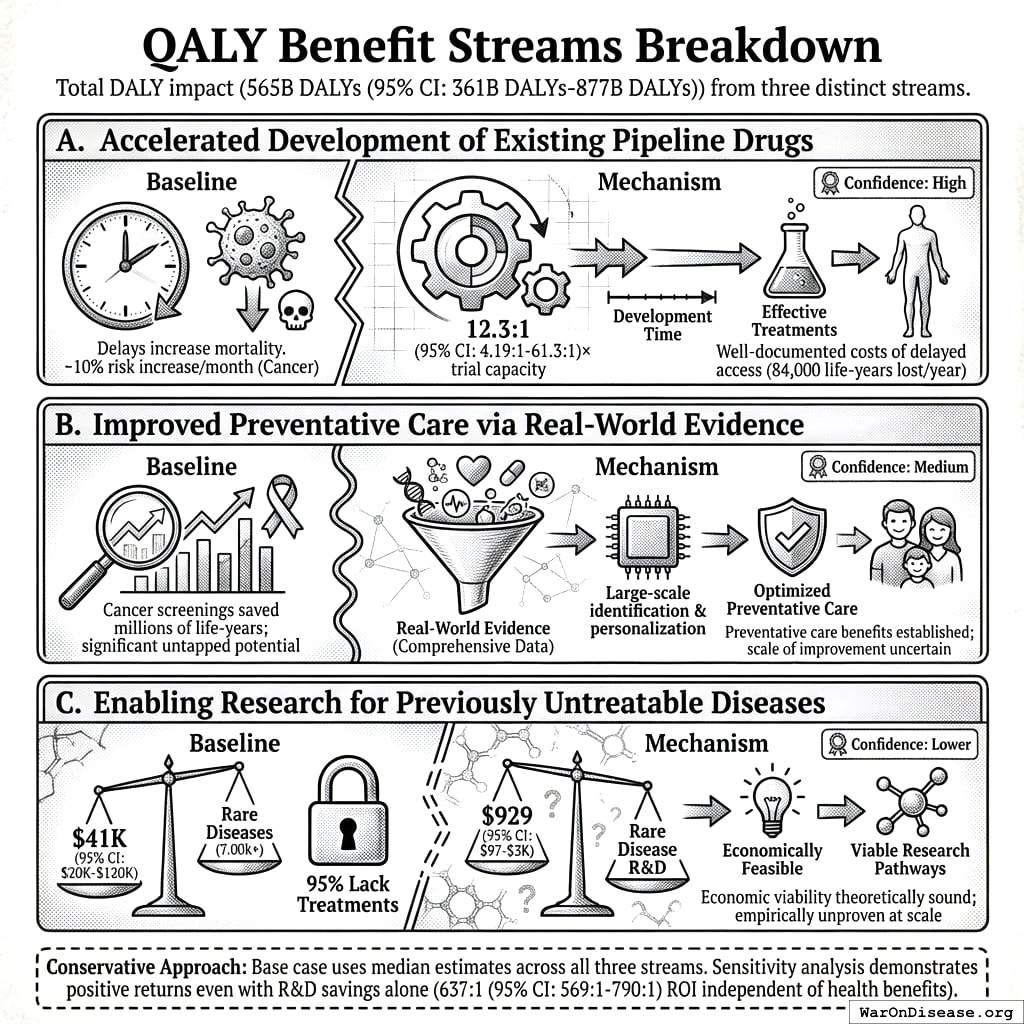

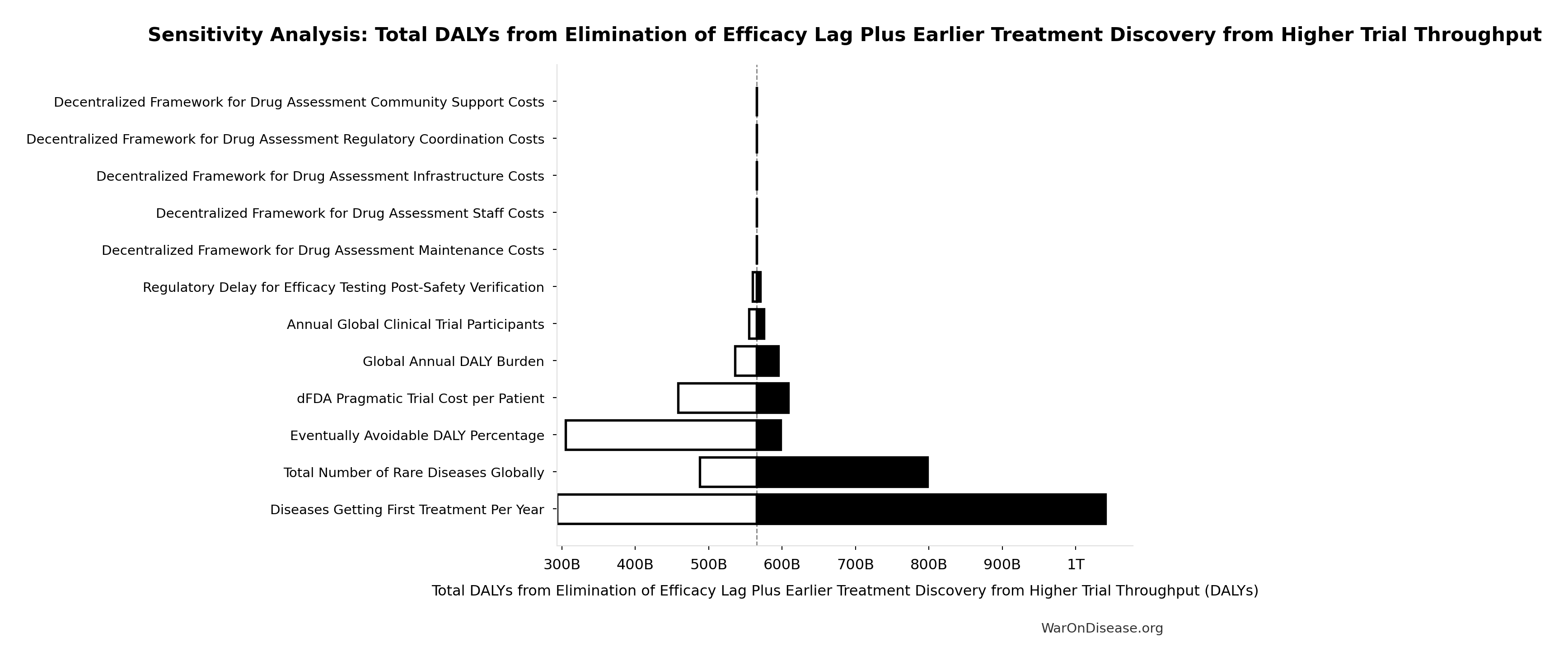

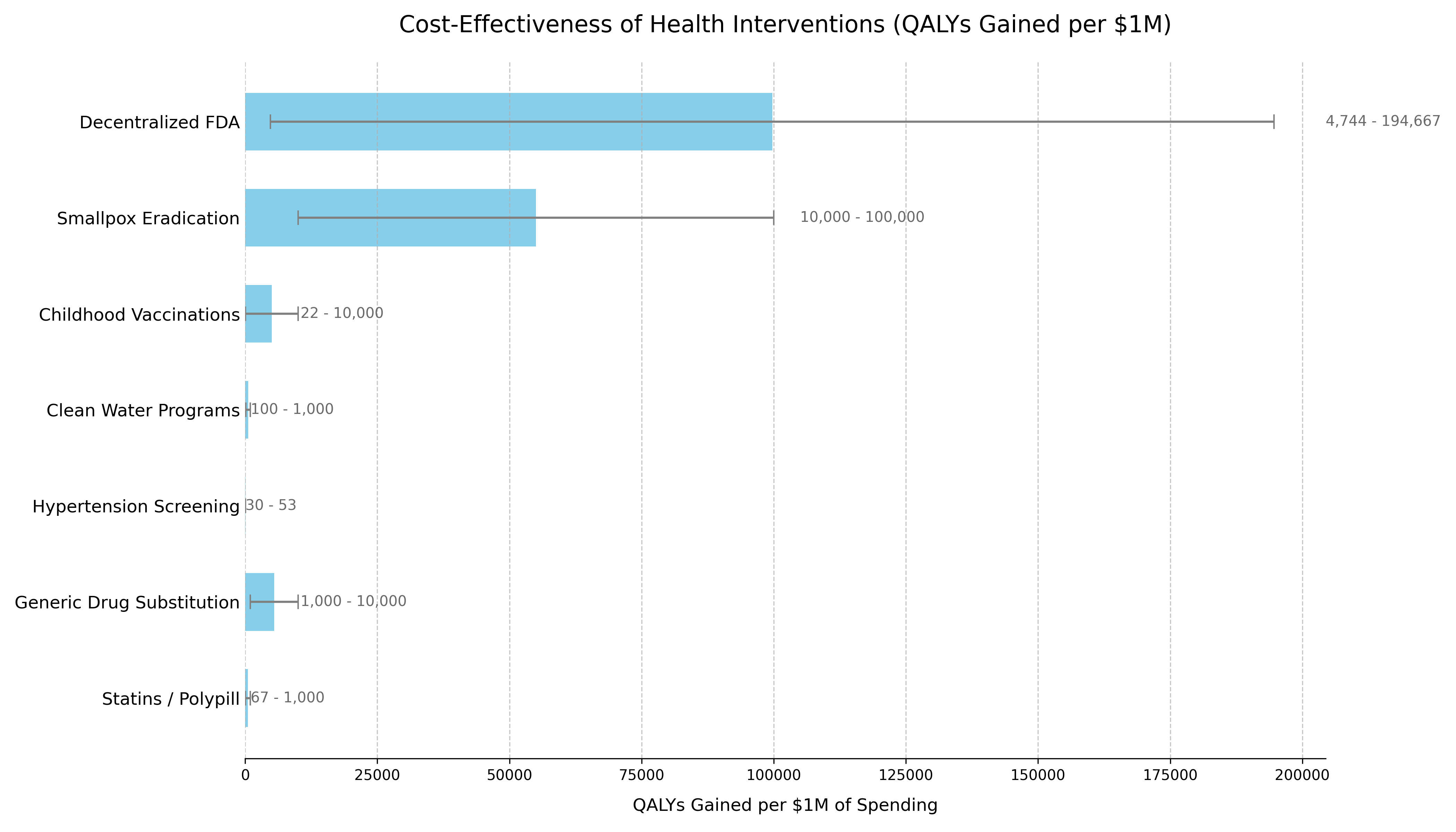

Of 9.5 million (95% CI: 5.94 million-13.9 million) plausible drug-disease pairings, only 0.342% (95% CI: 0%-1%) have been clinically tested. At the current discovery rate of 15 diseases/year (95% CI: 8 diseases/year-30 diseases/year), clearing this backlog would take ~443 years (95% CI: 324 years-712 years). A decentralized FDA that integrates pragmatic clinical trials into standard healthcare at $929 (95% CI: $97-$3,000)/patient (vs. $41,000 (95% CI: $20,000-$120,000)/patient for traditional trials) increases trial capacity 12.3x (95% CI: 4.2x-61.4x), reducing backlog clearance to 36 years (95% CI: 11.6 years-77.1 years). Combined with eliminating the 8.2 years (95% CI: 4.85 years-11.5 years) post-safety efficacy delay through opt-in trial participation after Phase I, treatments arrive 212 years (95% CI: 135 years-355 years) earlier on average. This timeline shift averts 10.7 billion deaths (95% CI: 7.4 billion deaths-16.2 billion deaths) and 565 billion DALYs (95% CI: 361 billion DALYs-877 billion DALYs), and eliminates 1.93 quadrillion hours (95% CI: 1.36 quadrillion hours-2.62 quadrillion hours) of suffering (the YLD portion of those DALYs converted to hours), at $0.842 (95% CI: $0.242-$1.75)/DALY, competitive with bed nets ($89 (95% CI: $78-$100)/DALY) at vastly greater scale. Using standard health economic valuation ($150,000 (95% CI: $100,000-$199,282)/DALY, the US cost-effectiveness threshold; conservative relative to EPA/DOT Value of Statistical Life estimates), the full impact yields $84.8 quadrillion (95% CI: $62.4 quadrillion-$97.3 quadrillion) in cumulative value: the 565 billion DALYs (95% CI: 361 billion DALYs-877 billion DALYs) accrue over the entire 212 years (95% CI: 135 years-355 years) timeline shift, not annually, for a 178 thousand (95% CI: 110 thousand-421 thousand):1 ROI.

war-on-disease, 1-percent-treaty, medical-research, public-health, peace-dividend, decentralized-trials, dfda, dih, victory-bonds, health-economics, cost-benefit-analysis, clinical-trials, drug-development, regulatory-reform, military-spending, peace-economics, decentralized-governance, wishocracy, blockchain-governance, impact-investing

Executive Summary

The Problem: 6,650 diseases (95% CI: 5,700 diseases-8,242 diseases) have zero FDA-approved treatments. We have 9,500 (95% CI: 7,000-12,000) proven-safe compounds (FDA-approved drugs + GRAS substances), yet only 0.342% (95% CI: 0%-1%) of the 9.5 million (95% CI: 5.94 million-13.9 million) plausible drug-disease combinations have been tested. At the current discovery rate of 15 diseases/year (95% CI: 8 diseases/year-30 diseases/year), clearing this backlog would take ~443 years (95% CI: 324 years-712 years). Of 2.4 billion people (95% CI: 2 billion people-2.8 billion people) with chronic disease, only 1.9 million patients/year (95% CI: 1.5 million patients/year-2.3 million patients/year) participate in trials (0.06%).

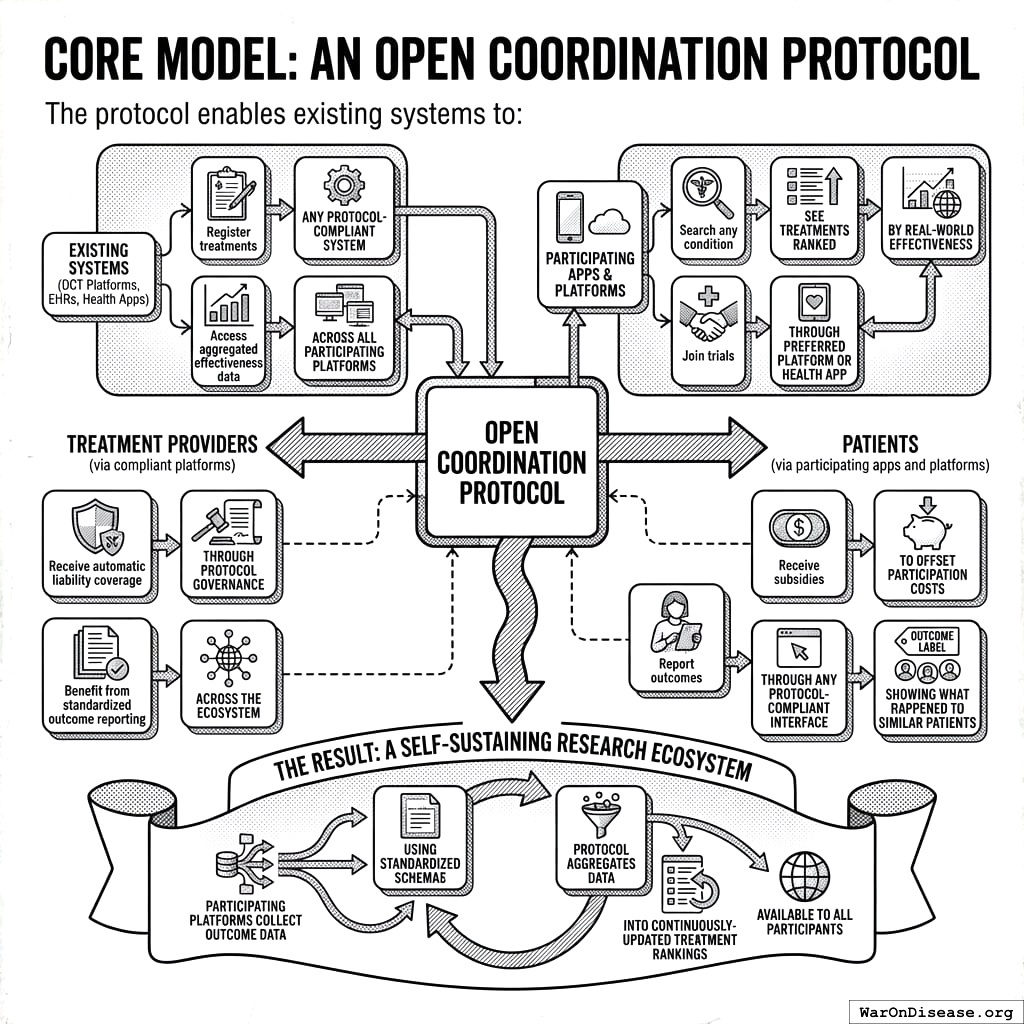

The Solution: A decentralized FDA [148, 149], an open protocol (like HTTP/FHIR, not a competing platform) that existing DCT platforms, EHRs, and health apps adopt to integrate pragmatic clinical trials into standard healthcare, enabling:

- Subsidized Patient Participation: Patients receive subsidies to participate in trials, making participation accessible and incentivized

- Universal Trial Access: Any patient can join trials from home via their phone or computer - no travel to research centers required

- Real-World Data Aggregation: Outcomes from all participants are aggregated into a unified database

- Treatment Rankings: Standardized effectiveness rankings for every treatment-condition pair, updated continuously with real-world evidence

- Outcome Labels: “Nutrition facts for drugs” showing exactly what happened to real patients who tried each treatment

The Receipts

| Metric | Value | Context |
|---|---|---|
| Cost-Effectiveness | $0.842 (95% CI: $0.242-$1.75)/DALY | Competitive with bed nets ($89 (95% CI: $78-$100)/DALY) at vastly greater scale |
| Lives Saved | 10.7 billion deaths (95% CI: 7.4 billion deaths-16.2 billion deaths) | One-time benefit from 212 years (95% CI: 135 years-355 years) timeline shift |
| DALYs Averted | 565 billion DALYs (95% CI: 361 billion DALYs-877 billion DALYs) | Captures both mortality and morbidity |
| Suffering Eliminated | 1.93 quadrillion hours (95% CI: 1.36 quadrillion hours-2.62 quadrillion hours) | YLD portion of DALYs (39%) x 8,760 hrs/yr over timeline shift |
| Total Economic Value | $84.8 quadrillion (95% CI: $62.4 quadrillion-$97.3 quadrillion) | Cumulative DALYs x $150K/DALY (US cost-effectiveness threshold) over 212 years (95% CI: 135 years-355 years) shift |
| Efficacy Lag Eliminated | 8.2 years (95% CI: 4.85 years-11.5 years) | Post-Phase I access via trial participation |
| ROI (R&D Savings) | 637 (95% CI: 569-790):1 | 44.1x (95% CI: 39.4x-89.1x) cheaper trials |
| Annual R&D Savings | | From 97.7% (95% CI: 97%-99%) cost reduction |
| Trial Capacity Increase | 12.3x (95% CI: 4.2x-61.4x) | Enabling parallel therapeutic space exploration |

Why These Numbers Are Large

The economic value figure ($84.8 quadrillion (95% CI: $62.4 quadrillion-$97.3 quadrillion)) exceeds global GDP ($115 trillion). This is expected, not an error. Three points of context:

1. Standard methodology, applied at scale. The $150,000 (95% CI: $100,000-$199,282)/DALY valuation is the US cost-effectiveness threshold (ICER). It is conservative relative to EPA and DOT Value of Statistical Life estimates, which imply higher per-DALY values when converted (~$300K-$600K/DALY). We did not invent this number. We multiplied it by the number of sick people.

2. GDP measures transactions, not the value of being alive. GDP does not count the value of not being dead, not being in pain, or not watching your children die of treatable diseases. Health economists have measured these values for decades. The global burden of disease (2.88 billion DALYs/year (95% CI: 2.63 billion DALYs/year-3.13 billion DALYs/year)) valued at $150,000 (95% CI: $100,000-$199,282)/DALY produces $400 trillion (95% CI: $240 trillion-$587 trillion)/year in health losses, roughly 3.5x global GDP. This is consistent with the established finding that the value of health substantially exceeds market output [150, 151].

3. The figure is cumulative over 212 years (95% CI: 135 years-355 years), not annual. This is the total value of permanently accelerating medical progress, the same methodology used to value smallpox eradication ($300M program -> millions of future lives saved) and climate infrastructure (multi-trillion dollar damage estimates that exceed annual GDP). The only debatable input is whether the timeline shift is really ~212 years; see The Discovery Capacity Model for that derivation.

Key Metric Derivations

Lives Saved:

\[
\begin{gathered}
Lives_{max} \\
= Deaths_{disease,daily} \times T_{accel,max} \times 338 \\
= 150{,}000 \times 212 \times 338 \\
= 10.7B
\end{gathered}
\]

where:

\[
T_{accel,max} = T_{accel} + T_{lag} = 204 + 8.2 = 212
\]

where:

\[
\begin{gathered}
T_{accel} \\
= T_{first,SQ} \times \left(1 - \frac{1}{k_{capacity}}\right) \\
= 222 \times \left(1 - \frac{1}{12.3}\right) \\
= 204
\end{gathered}
\]

where:

\[
\begin{gathered}
T_{first,SQ} \\
= T_{queue,SQ} \times 0.5 \\
= 443 \times 0.5 \\
= 222
\end{gathered}
\]

where:

\[
\begin{gathered}
T_{queue,SQ} \\
= \frac{N_{untreated}}{Treatments_{new,ann}} \\
= \frac{6{,}650}{15} \\
= 443
\end{gathered}
\]

where:

\[
\begin{gathered}
N_{untreated} \\
= N_{rare} \times 0.95 \\
= 7{,}000 \times 0.95 \\
= 6{,}650
\end{gathered}
\]

where:

\[
\begin{gathered}
k_{capacity} \\
= \frac{N_{fundable,dFDA}}{Slots_{curr}} \\
= \frac{23.4M}{1.9M} \\
= 12.3
\end{gathered}
\]

where:

\[
\begin{gathered}
N_{fundable,dFDA} \\
= \frac{Subsidies_{dFDA,ann}}{Cost_{pragmatic,pt}} \\
= \frac{\$21.8B}{\$929} \\
= 23.4M
\end{gathered}
\]

where:

\[
\begin{gathered}
Subsidies_{dFDA,ann} \\
= Funding_{dFDA,ann} - OPEX_{dFDA} \\
= \$21.8B - \$40M \\
= \$21.8B
\end{gathered}
\]

where:

\[
\begin{gathered}
OPEX_{dFDA} \\
= Cost_{platform} + Cost_{staff} + Cost_{infra} \\
+ Cost_{regulatory} + Cost_{community} \\
= \$15M + \$10M + \$8M + \$5M + \$2M \\
= \$40M
\end{gathered}
\]
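The chain above can be replayed numerically. The following is a minimal sketch using only the point estimates quoted in the derivation, with no uncertainty modelling; the constant and function names are illustrative, and reading the factor 338 as 365 days times the 92.6% avoidable share is an inference rather than something the derivation states.

```python
# Point estimates copied from the derivation above (illustrative names).
N_RARE = 7_000                 # rare diseases
UNTREATED_SHARE = 0.95         # share with no approved treatment
NEW_TREATMENTS_PER_YEAR = 15   # status quo first treatments per year
FUNDING_PER_YEAR = 21.8e9      # USD, annual trial funding
OPEX_PER_YEAR = 40e6           # USD, protocol operating cost
COST_PER_PATIENT = 929         # USD, pragmatic trial cost per patient
CURRENT_SLOTS = 1.9e6          # current trial participants per year
EFFICACY_LAG_YEARS = 8.2       # post-safety efficacy delay
DEATHS_PER_DAY = 150_000       # daily disease deaths
# Assumption: 338 = 365 days x 92.6% eventually avoidable share.
AVOIDABLE_DAYS_PER_YEAR = 365 * 0.926


def capacity_multiplier() -> float:
    """k_capacity: fundable patients divided by current trial slots."""
    fundable = (FUNDING_PER_YEAR - OPEX_PER_YEAR) / COST_PER_PATIENT
    return fundable / CURRENT_SLOTS


def timeline_shift_years() -> float:
    """T_accel,max: discovery acceleration plus efficacy lag."""
    n_untreated = N_RARE * UNTREATED_SHARE
    queue_status_quo = n_untreated / NEW_TREATMENTS_PER_YEAR
    first_status_quo = queue_status_quo * 0.5
    acceleration = first_status_quo * (1 - 1 / capacity_multiplier())
    return acceleration + EFFICACY_LAG_YEARS


def lives_saved() -> float:
    """Lives_max: daily deaths x timeline shift x avoidable days per year."""
    return DEATHS_PER_DAY * timeline_shift_years() * AVOIDABLE_DAYS_PER_YEAR


if __name__ == "__main__":
    print(f"capacity multiplier: {capacity_multiplier():.1f}x")
    print(f"timeline shift: {timeline_shift_years():.0f} years")
    print(f"lives saved: {lives_saved() / 1e9:.1f} billion")
```

Because the sketch carries unrounded intermediates, its results can differ from the rounded figures in the text in the last digit.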

Suffering Hours Eliminated:

\[
\begin{gathered}
Hours_{suffer,max} \\
= DALYs_{max} \times Pct_{YLD} \times 8760 \\
= 565B \times 0.39 \times 8760 \\
= 1930T
\end{gathered}
\]

where:

\[
\begin{gathered}
DALYs_{max} \\
= DALYs_{global,ann} \times Pct_{avoid,DALY} \times T_{accel,max} \\
= 2.88B \times 92.6\% \times 212 \\
= 565B
\end{gathered}
\]

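As a numerical check on the suffering-hours derivation, here is a minimal sketch that takes the rounded 212-year timeline shift and the other point estimates as given. The names are illustrative and not part of the original model.

```python
# Point estimates quoted in the derivation (illustrative names).
DALYS_PER_YEAR = 2.88e9        # global DALYs per year
AVOIDABLE_SHARE = 0.926        # eventually avoidable share of DALYs
TIMELINE_SHIFT_YEARS = 212     # T_accel,max, rounded
YLD_SHARE = 0.39               # share of DALYs that are years lived with disability
HOURS_PER_YEAR = 8_760


def dalys_averted() -> float:
    """DALYs_max = annual DALYs x avoidable share x timeline shift."""
    return DALYS_PER_YEAR * AVOIDABLE_SHARE * TIMELINE_SHIFT_YEARS


def suffering_hours() -> float:
    """Hours_suffer,max = DALYs_max x YLD share x hours per year."""
    return dalys_averted() * YLD_SHARE * HOURS_PER_YEAR


if __name__ == "__main__":
    print(f"DALYs averted: {dalys_averted() / 1e9:.0f} billion")
    print(f"suffering hours: {suffering_hours() / 1e15:.2f} quadrillion")
```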

Cost per DALY:

\[
\begin{gathered}
Cost_{direct,DALY} \\
= \frac{NPV_{direct}}{DALYs_{max}} \\
= \frac{\$476B}{565B} \\
= \$0.842
\end{gathered}
\]

where:

\[
\begin{gathered}
NPV_{direct} \\
= Funding_{dFDA,ann} \times \frac{1 - (1 + r_{discount})^{-T_{queue,dFDA}}}{r_{discount}} \\
= \$21.8B \times \frac{1 - 1.03^{-36}}{0.03} \\
= \$476B
\end{gathered}
\]

where:

\[
\begin{gathered}
T_{queue,dFDA} \\
= \frac{T_{queue,SQ}}{k_{capacity}} \\
= \frac{443}{12.3} \\
= 36
\end{gathered}
\]

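The cost-per-DALY figure can be checked with a short sketch. It assumes that NPV_direct is the present value of 36 years of $21.8B annual funding discounted at 3%, which is the standard annuity formula and reproduces the stated $476B. The names are illustrative.

```python
FUNDING_PER_YEAR = 21.8e9      # USD, annual trial funding
DISCOUNT_RATE = 0.03
QUEUE_YEARS = 36               # T_queue,dFDA
DALYS_AVERTED = 565e9          # DALYs_max
VALUE_PER_DALY = 150_000       # USD, US cost-effectiveness threshold


def annuity_present_value(payment: float, rate: float, years: float) -> float:
    """Present value of a level annual payment over a fixed horizon."""
    return payment * (1 - (1 + rate) ** -years) / rate


def cost_per_daly() -> float:
    npv = annuity_present_value(FUNDING_PER_YEAR, DISCOUNT_RATE, QUEUE_YEARS)
    return npv / DALYS_AVERTED


def roi() -> float:
    """Cumulative economic value divided by discounted programme cost."""
    npv = annuity_present_value(FUNDING_PER_YEAR, DISCOUNT_RATE, QUEUE_YEARS)
    return DALYS_AVERTED * VALUE_PER_DALY / npv


if __name__ == "__main__":
    print(f"cost per DALY: ${cost_per_daly():.3f}")
    print(f"ROI: {roi():,.0f}:1")
```

Under these inputs the sketch lands on roughly $0.84 per DALY and an ROI of about 178 thousand to one, in line with the summary figures.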

Interpreting These Figures: Cumulative, Not Annual

These are cumulative benefits over the entire acceleration period, not annual figures. This is the same methodology used to value smallpox eradication (program cost -> total future lives saved) and climate infrastructure investments: the one-time benefit of permanently accelerating medical progress.

Interpreting the Timeline Figure: The 212 years (95% CI: 135 years-355 years) figure comes from a discovery capacity model of medical research. Think of the therapeutic search space as the set of all untested drug-disease combinations, with trial capacity determining how fast we can explore it.

The Discovery Capacity Model

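| Parameter | Status Quo | Proposed | Impact |
|---|---|---|---|
| Untreated diseases | 6,650 diseases (95% CI: 5,700 diseases-8,242 diseases) | 6,650 diseases (95% CI: 5,700 diseases-8,242 diseases) | Same backlog |
| Discovery rate (first treatments/year) | 15 diseases/year (95% CI: 8 diseases/year-30 diseases/year) | 185 diseases/year (95% CI: 107 diseases/year-491 diseases/year) | 12.3x (95% CI: 4.2x-61.4x) faster |
| Time to explore search space | ~443 years (95% CI: 324 years-712 years) | ~36 years (95% CI: 11.6 years-77.1 years) | Centuries saved |
| Expected time to first treatment | ~443 years (95% CI: 324 years-712 years)/2 | ~36 years (95% CI: 11.6 years-77.1 years)/2 | 204 years (95% CI: 123 years-350 years) earlier |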

Why treatments arriving sooner saves lives:

A disease that would receive its first effective treatment in year 200 under the status quo might receive it in year 16 with the framework. During those 184 years, people die from that disease who could have been saved. The 10.7 billion deaths (95% CI: 7.4 billion deaths-16.2 billion deaths) figure captures the cumulative lives saved across all diseases during their acceleration periods.
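The year-200 example follows from dividing a disease's position in the status quo queue by the capacity multiplier. A minimal sketch, assuming the speed-up applies uniformly across the queue (the function name is illustrative):

```python
def accelerated_arrival_year(status_quo_year: float,
                             capacity_multiplier: float = 12.3) -> float:
    """Year a first treatment arrives if the queue is explored k times faster."""
    return status_quo_year / capacity_multiplier


if __name__ == "__main__":
    status_quo = 200
    accelerated = accelerated_arrival_year(status_quo)
    print(f"arrives in year {accelerated:.0f} instead of year {status_quo}")
    print(f"years gained: {status_quo - accelerated:.0f}")
```

The 8.2-year efficacy lag is a separate component and is not included in this function.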

The two components:

- Discovery acceleration (204 years (95% CI: 123 years-350 years)): Higher discovery rate explores the therapeutic space faster, moving treatments forward

- Efficacy lag elimination (8.2 years (95% CI: 4.85 years-11.5 years)): Once discovered, treatments reach patients immediately instead of waiting for Phase II/III

Total timeline shift: 212 years (95% CI: 135 years-355 years) = 204 years (95% CI: 123 years-350 years) + 8.2 years (95% CI: 4.85 years-11.5 years)

How the 12.3x (95% CI: 4.2x-61.4x) capacity increase works: With $21.8 billion/year in trial funding at $929 (95% CI: $97-$3,000)/patient (based on the ADAPTABLE trial), the framework enables 23.4 million (95% CI: 9.46 million-97 million) trial participants per year vs. the current 1.9 million patients/year (95% CI: 1.5 million patients/year-2.3 million patients/year), raising the rate of first treatments from 15 diseases/year (95% CI: 8 diseases/year-30 diseases/year) to 185 diseases/year (95% CI: 107 diseases/year-491 diseases/year). This removes the primary bottleneck to medical progress: currently less than 0.06% of willing patients can access trials, and over 9,500 (95% CI: 7,000-12,000) proven-safe compounds (FDA-approved drugs + GRAS substances) remain untested for most conditions they could improve.

Capabilities

Core Model: An Open Coordination Protocol

The protocol enables existing systems (DCT platforms, EHRs, health apps) to:

For Treatment Providers (via compliant platforms):

- Register treatments through any protocol-compliant system

- Access aggregated effectiveness data across all participating platforms

- Receive automatic liability coverage through protocol governance

- Benefit from standardized outcome reporting across the ecosystem

For Patients (via participating apps and platforms):

- Search any condition, see treatments ranked by real-world effectiveness

- Join trials through their preferred platform or health app

- Receive subsidies to offset participation costs

- Report outcomes through any protocol-compliant interface

- Access “Outcome Labels” showing what happened to similar patients

The Result: A self-sustaining research ecosystem where participating platforms collect outcome data using standardized schemas, and the protocol aggregates this into continuously-updated treatment rankings available to all participants.

Key Capabilities

- Treatment Rankings: Every treatment for every condition ranked by real-world effectiveness, updated continuously as new data arrives

- Outcome Labels: Standardized “nutrition facts for drugs” showing effectiveness rates, side effects, and outcomes from real patients

- Universal Trial Access: Any patient can participate from anywhere via phone/computer

- Real-Time Surveillance: Continuous data on efficacy, side effects, and drug interactions

- Federated Data Architecture: Data stays in source systems (EHR platforms, consumer health apps) while queries run across all sources
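To make the federated idea concrete, the sketch below shows one way a coordinator could build treatment rankings from per-site aggregates without ever receiving patient-level records. Everything here is hypothetical: the `SiteSummary` schema, the "improved" outcome measure, and the sample numbers are invented for illustration and are not taken from the protocol. A real ranking would also need to adjust for confounding, which a raw improvement rate does not.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class SiteSummary:
    """Aggregate counts a source system shares instead of raw records (hypothetical schema)."""
    site: str
    treatment: str
    condition: str
    n_patients: int
    n_improved: int


def rank_treatments(summaries: list[SiteSummary], condition: str) -> list[tuple[str, float]]:
    """Pool per-site counts and rank treatments by pooled improvement rate."""
    totals: dict[str, list[int]] = defaultdict(lambda: [0, 0])
    for s in summaries:
        if s.condition == condition:
            totals[s.treatment][0] += s.n_patients
            totals[s.treatment][1] += s.n_improved
    rates = {t: improved / n for t, (n, improved) in totals.items() if n > 0}
    return sorted(rates.items(), key=lambda item: item[1], reverse=True)


# Made-up illustration data, not real outcomes.
EXAMPLE_SUMMARIES = [
    SiteSummary("ehr_a", "drug_x", "condition_1", n_patients=100, n_improved=60),
    SiteSummary("app_b", "drug_x", "condition_1", n_patients=50, n_improved=20),
    SiteSummary("ehr_a", "drug_y", "condition_1", n_patients=80, n_improved=56),
]

if __name__ == "__main__":
    for treatment, rate in rank_treatments(EXAMPLE_SUMMARIES, "condition_1"):
        print(f"{treatment}: {rate:.0%} improved")
```

Sharing only counts keeps raw data in the source systems, which is the property the federated architecture bullet describes.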

Potential Impact on the Status Quo

- Speed of Trials: Reduced overhead and automated data capture compresses timelines.

- Cost of Trials: Using existing healthcare encounters, telemedicine, and EHR data to drastically cut per-patient costs (modeled on pragmatic trials like Oxford RECOVERY and the US-based ADAPTABLE trial).

- Scale & Scope: Enables testing many more drugs, off-label indications, unpatentable treatments, nutraceuticals, and personalized medicine approaches.

- Innovation Incentives: Lower R&D costs increase profitability and encourage more entrants/innovation in the life sciences.

Addressing Key Concerns

Historical Validation: Pre-1962 Physician-Led Efficacy Testing

The decentralized physician-led efficacy model is not theoretical. It operated successfully from 1883 to 1960, providing 77 years of empirical validation.

How the pre-1962 system worked:

From 1883 to 1960, 144 thousand physicians across America tested drug efficacy on real patients in routine clinical practice. The Journal of the American Medical Association (JAMA) compiled observational reports, leading medical experts peer-reviewed the aggregated data, and effective treatments received endorsement. This decentralized approach successfully identified antibiotics, vaccines, and countless surgical techniques.

Cost comparison demonstrates dramatic efficiency:

| Era | Cost per Drug (2024 USD) | System |
|---|---|---|
| Pre-1962 | $24.7 million (95% CI: $19.5 million-$30 million) | Decentralized physician-led efficacy testing |
| Post-1962 | $2.6 billion (95% CI: $1.5 billion-$4 billion) | Centralized pharmaceutical company trials |
| Cost Increase | | Regulatory mandate, not drug complexity |

The cost explosion began exactly when efficacy testing was centralized within pharmaceutical companies. This wasn’t a natural evolution of drug development or increasing drug complexity. The same types of compounds (small molecules, biologics) that cost $24.7 million (95% CI: $19.5 million-$30 million) to develop in 1960 now cost $2.6 billion (95% CI: $1.5 billion-$4 billion).

The thalidomide success story: Thalidomide is often cited as justification for the 1962 amendments, but the US already blocked thalidomide under existing 1938 safety regulations. The FDA’s Frances Kelsey refused approval based on inadequate safety data, not efficacy requirements. The 1962 amendments added efficacy proof requirements, not additional safety testing.

Implications: This model returns to decentralized physician-led efficacy testing but with modern automation (electronic health records, AI-assisted analysis, real-time data aggregation), targeting the same 50-95% cost reductions that the pre-1962 system achieved.

Why “Eventually Avoidable” Matters

A critical assumption in this analysis is that 92.6% (95% CI: 50%-98%) of disease deaths are “eventually avoidable” - meaning they could be prevented with sufficient biomedical research over time.

Why this assumption is conservative:

Historical trend: In 1900, life expectancy was ~47 years. Today it’s ~79. Most of that gain came from preventing deaths that were once considered inevitable (infectious disease, childhood mortality, cardiovascular disease).

Known mechanisms exist: For most major disease categories, we understand enough biology to know that interventions are theoretically possible. Cancer is caused by specific mutations. Heart disease has identifiable risk factors. The question is finding the right treatments, not whether treatments can exist.

Already-discovered treatments prove the space: 30% of approved drugs gain new indications, demonstrating that effective treatments exist but haven’t been found yet.

What if this assumption is wrong?

Even if only 25% of deaths are eventually avoidable (about a quarter of our central estimate, and half the lower bound of its confidence interval), the framework still generates 637 (95% CI: 569-790):1 ROI from R&D savings alone, independent of health benefits. These deaths will eventually be preventable with sufficient research progress. The health impact figures scale linearly with the avoidability assumption, but the cost-saving case doesn’t depend on it.
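The linear scaling can be illustrated with a small sensitivity sketch. It reuses the point estimates from the Key Metric Derivations (2.88 billion DALYs/year, a 212-year shift, $476B discounted cost) and varies only the avoidable share; the function names are illustrative.

```python
def dalys_averted(avoidable_share: float,
                  dalys_per_year: float = 2.88e9,
                  shift_years: float = 212) -> float:
    """DALYs averted scale linearly with the avoidable share."""
    return dalys_per_year * avoidable_share * shift_years


def cost_per_daly(avoidable_share: float, npv_cost: float = 476e9) -> float:
    """Discounted programme cost divided by DALYs averted."""
    return npv_cost / dalys_averted(avoidable_share)


if __name__ == "__main__":
    for share in (0.926, 0.50, 0.25):
        print(f"{share:.1%} avoidable: "
              f"{dalys_averted(share) / 1e9:.0f}B DALYs, "
              f"${cost_per_daly(share):.2f}/DALY")
```

At a 25% avoidable share the sketch gives roughly 153 billion DALYs at about $3.12 per DALY, still well below the $89/DALY bed net benchmark cited earlier.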

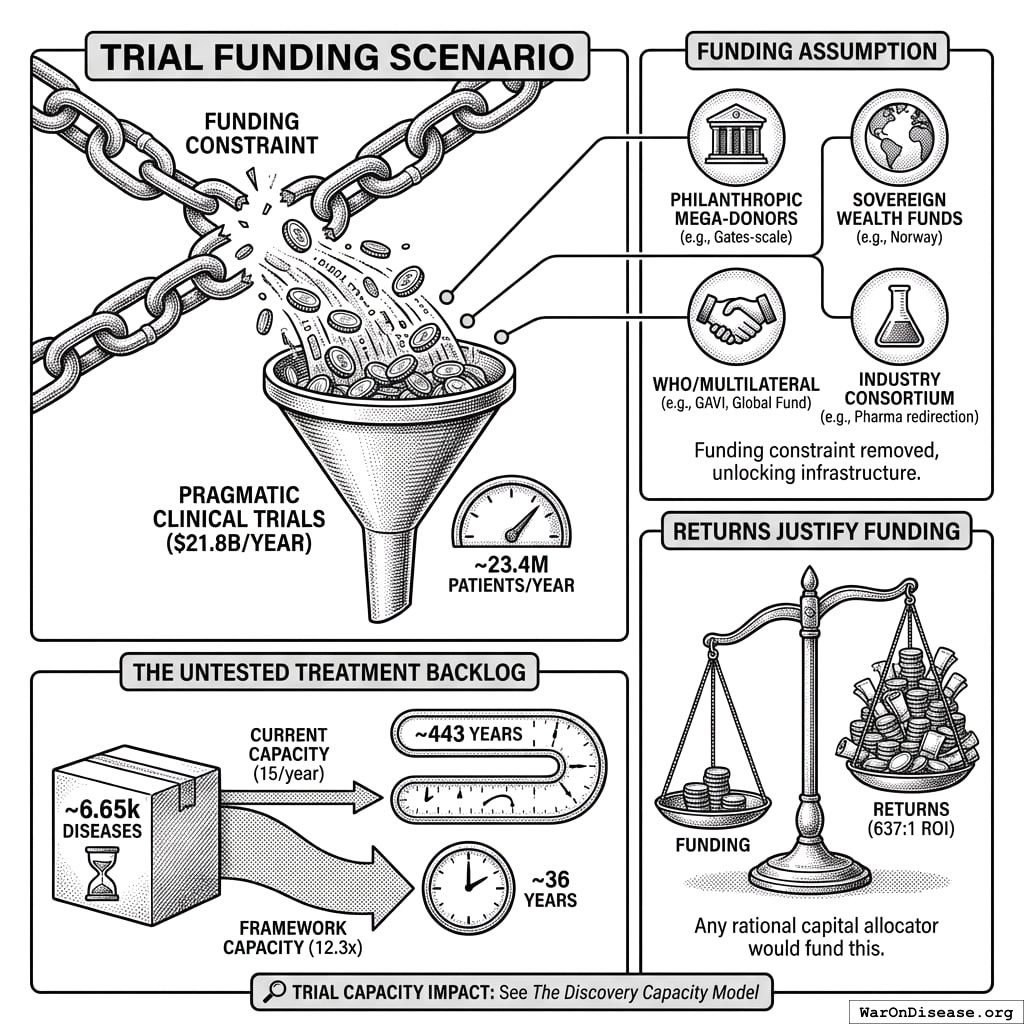

Trial Funding Scenario

This analysis models a scenario with $21.8 billion/year allocated to pragmatic clinical trials. At $929 (95% CI: $97-$3,000)/patient, this funds approximately 23.4 million (95% CI: 9.46 million-97 million) patients per year.

On the Funding Assumption

This analysis demonstrates what becomes possible when the funding constraint is removed. The $21.8 billion/year figure is achievable through multiple mechanisms:

- Philanthropic mega-donors: A single Gates Foundation-scale commitment could fund the protocol infrastructure and initial years

- Sovereign wealth funds: Norway’s $1.4T fund or similar could view this as humanity-scale infrastructure

- WHO/multilateral coordination: Comparable to GAVI or the Global Fund

- Military reallocation: Less than 1% of global military spending ($2.72 trillion/year)

- Industry consortium: Pharma collectively spends $60 billion (95% CI: $50 billion-$75 billion)/year on trials; a 10% redirection ($6 billion/year) would cover roughly a quarter of this threshold, and about 36% would meet it in full

The returns justify the funding, not vice versa. At 637 (95% CI: 569-790):1 ROI, any rational capital allocator would fund this if they believed the analysis.
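A quick check of how large a share of each quoted funding pool $21.8 billion/year represents, using only the figures in the list above (names are illustrative):

```python
TARGET_FUNDING = 21.8e9  # USD per year

# Annual pool sizes quoted in the funding list (USD).
FUNDING_POOLS = {
    "global military spending": 2.72e12,
    "pharma trial spending": 60e9,
}


def required_share(pool_size: float, target: float = TARGET_FUNDING) -> float:
    """Fraction of a funding pool needed to reach the target."""
    return target / pool_size


if __name__ == "__main__":
    for name, size in FUNDING_POOLS.items():
        print(f"{name}: {required_share(size):.1%} of the pool")
```

Under these figures the target is about 0.8% of global military spending and about 36% of pharma trial spending.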

Trial Capacity Impact: See The Discovery Capacity Model for the full comparison of status quo vs. framework trial capacity metrics.

The Untested Treatment Backlog:

Approximately 6,650 diseases (95% CI: 5,700 diseases-8,242 diseases) have zero FDA-approved treatments. At current trial capacity (15 diseases/year (95% CI: 8 diseases/year-30 diseases/year)), systematically testing all 9.5 million (95% CI: 5.94 million-13.9 million) plausible pairings would take ~443 years (95% CI: 324 years-712 years). With 12.3x (95% CI: 4.2x-61.4x) capacity, this drops to ~36 years (95% CI: 11.6 years-77.1 years).

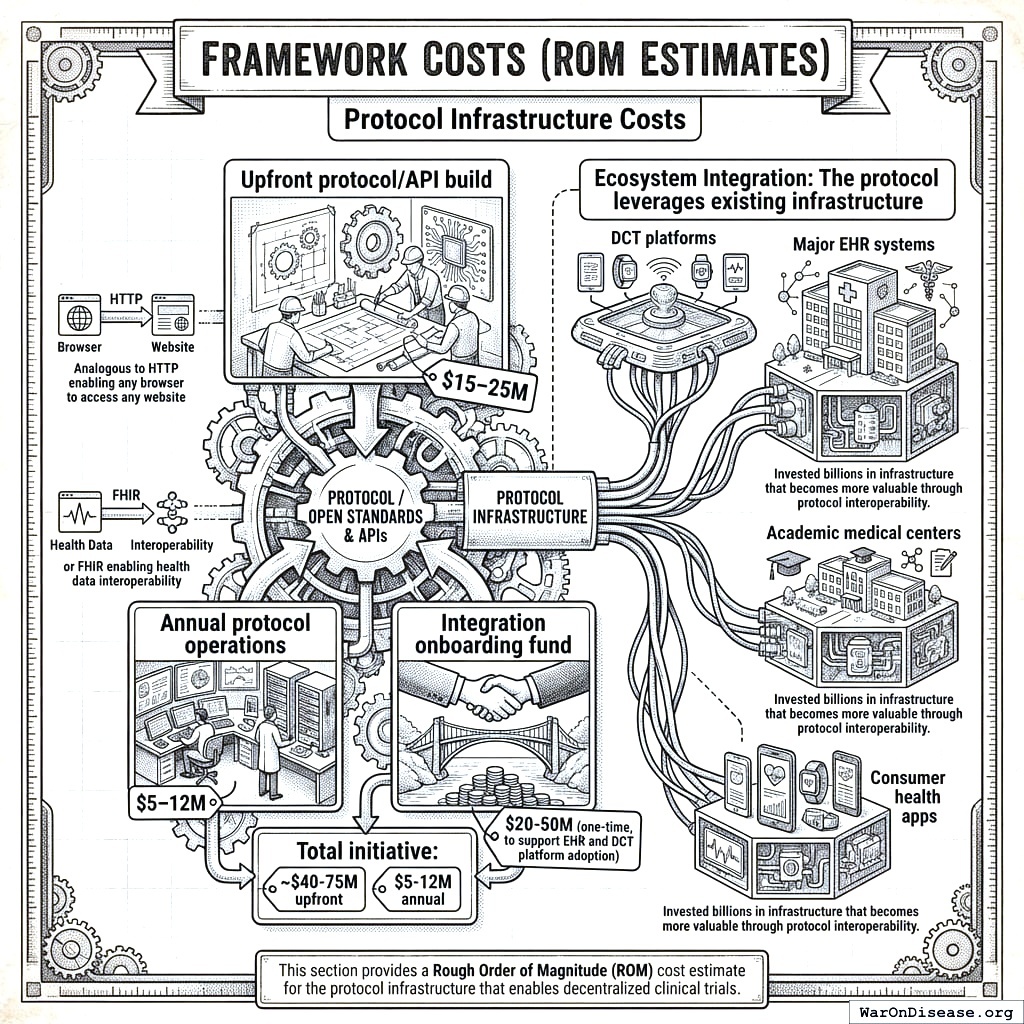

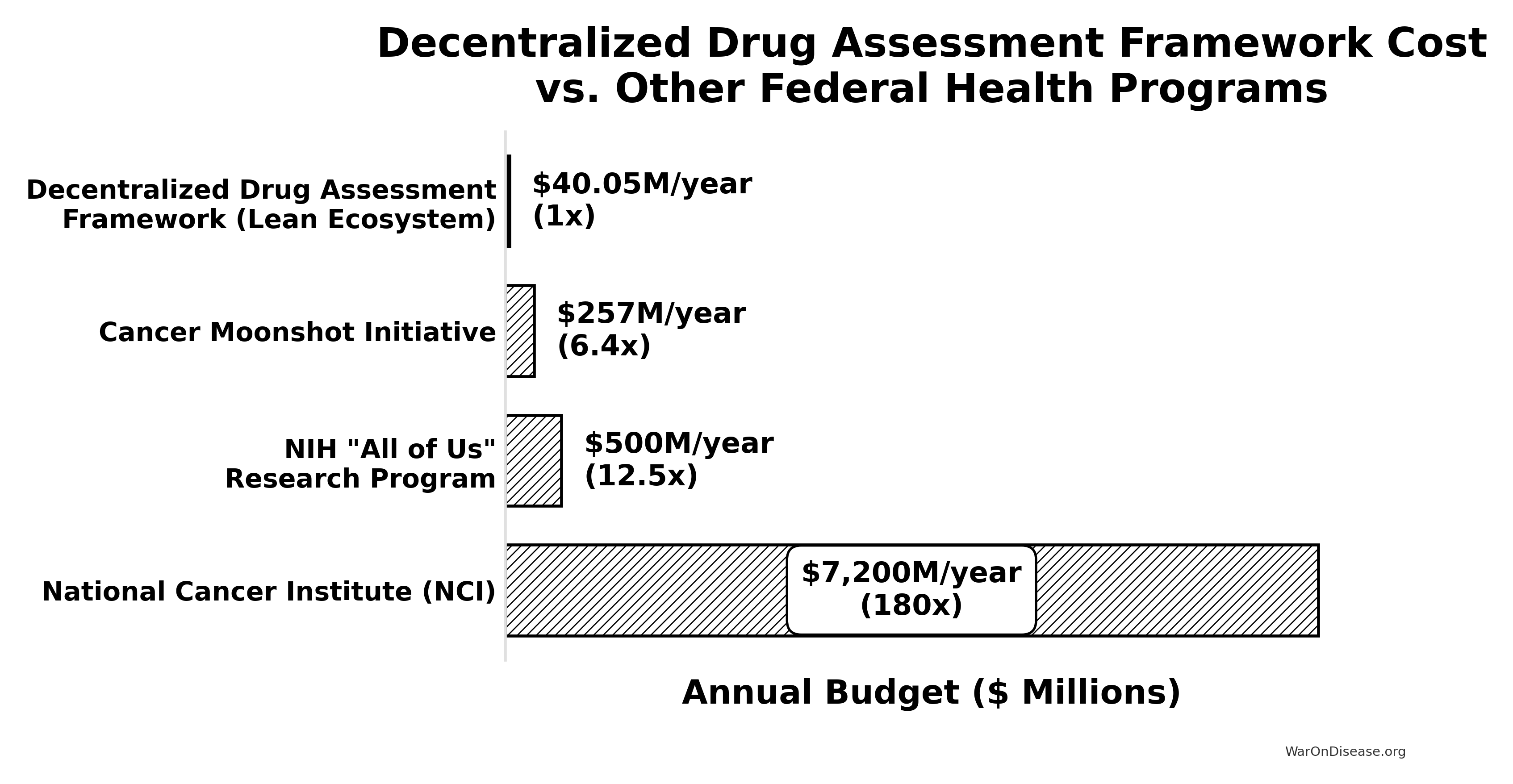

Framework Costs (ROM Estimates)

Protocol Infrastructure Costs

This is protocol infrastructure, open standards and APIs that existing clinical trial systems adopt. This is analogous to HTTP enabling any browser to access any website, or FHIR enabling health data interoperability.

- Upfront protocol/API build: $15–25M

- Annual protocol operations: $5–12M

- Integration onboarding fund: $20-50M (one-time, to support EHR and DCT platform adoption)

- Total initiative: ~$35-75M upfront, $5-12M annual

Ecosystem Integration: The protocol leverages existing infrastructure - DCT platforms, major EHR systems, academic medical centers, and consumer health apps. These organizations have invested billions in infrastructure that becomes more valuable through protocol interoperability.

This section provides a Rough Order of Magnitude (ROM) cost estimate for the protocol infrastructure that enables decentralized clinical trials.

Upfront Build Costs (30 Months)

Core Engineering & Development Effort:

- Basis: ~75 FTEs × 2.5 years × $200k/FTE/year

- Activities: Detailed design, Core framework development (API, storage, mapping/validation, auth), reference frontend, initial plugin interfaces, testing, documentation, initial deployment.

The engineering cost is calculated as:

\[ C_{\text{engineering}} = N_{\text{FTEs}} \times T \times C_{\text{FTE}} = 75 \times 2.5 \times \$200\text{k} = \$37.5\text{M} \]

Where \(N_{\text{FTEs}} = 75\) is the number of full-time equivalents, \(T = 2.5\) years is the development timeline, and \(C_{\text{FTE}} = \$200\text{k}\) per FTE per year.

- Estimated ROM: $35 - $40 million

Infrastructure Setup & Initial Cloud Costs:

- Activities: Establishing cloud accounts, VPCs, Kubernetes cluster (EKS) setup, database provisioning (RDS/TimescaleDB), S3 buckets, CI/CD pipeline setup, initial IaC development (Terraform).

- Costs: Includes initial compute/storage during development/testing, potential small upfront reservations.

- Estimated ROM: $1 - $3 Million

Software Licenses & Tooling (Initial):

- Examples: Potential costs for monitoring tools (Datadog), security scanners (Snyk), specialized libraries, collaboration tools if not already covered.

- Estimated ROM: $0.5 - $1 Million

Compliance, Legal & Security (Initial Setup):

- Activities: Initial HIPAA/GDPR compliance assessment, policy development, security architecture review, legal consultation for data sharing frameworks.

- Estimated ROM: $1 - $2 Million

The total upfront cost is the sum of all components:

\[ C_0 = C_{\text{engineering}} + C_{\text{infrastructure}} + C_{\text{software}} + C_{\text{compliance}} \]

Where:

- \(C_{\text{engineering}} = \$35 - \$40\) million (Core Engineering & Development)

- \(C_{\text{infrastructure}} = \$1 - \$3\) million (Infrastructure Setup & Initial Cloud Costs)

- \(C_{\text{software}} = \$0.5 - \$1\) million (Software Licenses & Tooling)

- \(C_{\text{compliance}} = \$1 - \$2\) million (Compliance, Legal & Security)

Total Estimated Upfront Cost (ROM): $37.5 - $46 million
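The upfront total is a straight sum of the component ranges. A minimal sketch that reproduces it from the figures listed above (dictionary keys and function names are illustrative):

```python
# Component ROM ranges in millions of USD: (low, high).
UPFRONT_COMPONENTS_MUSD = {
    "engineering": (35.0, 40.0),
    "infrastructure": (1.0, 3.0),
    "software": (0.5, 1.0),
    "compliance": (1.0, 2.0),
}


def engineering_point_estimate(ftes: int = 75, years: float = 2.5,
                               cost_per_fte: float = 200_000) -> float:
    """Engineering cost in USD: FTEs x years x annual cost per FTE."""
    return ftes * years * cost_per_fte


def total_range(components: dict[str, tuple[float, float]]) -> tuple[float, float]:
    """Sum the low ends and the high ends of every component range."""
    low = sum(lo for lo, _ in components.values())
    high = sum(hi for _, hi in components.values())
    return low, high


if __name__ == "__main__":
    low, high = total_range(UPFRONT_COMPONENTS_MUSD)
    print(f"engineering point estimate: ${engineering_point_estimate() / 1e6:.1f}M")
    print(f"total upfront ROM: ${low}M - ${high}M")
```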

Note: This ROM estimate focuses only on the Core framework build effort and associated setup. It represents the foundational first step. A full global implementation requires significant additional investment in broader initiatives to achieve goals of global integration, legal harmonization, and massive scale. These crucial, follow-on costs are estimated separately in the Scenario Based ROM Estimates for Broader Initiative Costs section below and include:

- Global EHR/Data Source Integration Effort: Building/buying connectors for thousands of systems worldwide.

- Large-Scale Plugin Development: Funding the ecosystem of data importers, analysis tools, and visualization plugins.

- International Legal/Regulatory Harmonization: Major diplomatic and legal efforts to create a global standard.

- Global Rollout & Adoption: Costs associated with driving adoption and providing training worldwide.

- Massive-Scale Infrastructure: Scaling hardware and cloud resources beyond initial targets to support millions of users.

The following sections provide ROM estimates for both the ongoing operational costs of the Core framework and for these essential broader initiatives.

Ecosystem Participants

The protocol creates value by enabling interoperability among existing clinical trial infrastructure. These organizations represent the ecosystem that would adopt and benefit from the protocol:

| Participant | Current Investment | How They Integrate | What They Gain |
|---|---|---|---|
| DCT Platforms | $1B+ collectively in VC funding | Adopt outcome reporting standards | Interoperability, larger patient pools, regulatory credibility |
| Major EHR Systems | Billions in infrastructure | Enable federated queries | New revenue from research queries, competitive differentiation |
| Pharma Sponsors | $60 billion (95% CI: $50 billion-$75 billion)/year on trials | Submit trials via compliant systems | Lower costs, faster enrollment, real-world evidence |
| Academic Medical Centers | Research infrastructure | Contribute federated data nodes | Research funding, publication opportunities |
| Consumer Health Apps | Consumer health data | Report patient outcomes to protocol | User engagement, clinical validation |

Why This Matters

DCT platforms (collectively raising over $1B in venture funding) and major EHR systems have already built the infrastructure for patient recruitment, data collection, and trial management. The protocol doesn’t replicate this work - it makes their existing investments more valuable by enabling data to flow across systems.

Annual Operational Costs (5M MAU Target Scale)

Cloud Infrastructure Costs (AWS):

- Components: EKS cluster, RDS/TimescaleDB hosting, S3 storage & requests, SQS messaging, API Gateway usage, Data Transfer (egress), CloudWatch logging/monitoring.

- Basis: Highly dependent on actual usage patterns, data retrieval frequency, processing intensity. Assumes optimized resource usage.

- Estimated ROM: $5 - $15 Million / year (Very sensitive to scale and usage patterns)

Ongoing Engineering, Maintenance & Operations:

- Team Size: Assume ~20 FTEs (SREs, DevOps, Core Maintainers, Security).

- Basis: 20 FTEs * $200k/FTE/year

The ongoing engineering cost is calculated as:

\[ C_{\text{engineering}}^{\text{ops}} = N_{\text{FTEs}}^{\text{ops}} \times C_{\text{FTE}} = 20 \times \$200\text{k} = \$4\text{M}/\text{year} \]

Where \(N_{\text{FTEs}}^{\text{ops}} = 20\) is the number of FTEs for ongoing operations.

- Estimated ROM: $4 - $6 Million / year

Software Licenses & Tooling (Ongoing):

- Examples: Monitoring (Datadog/New Relic), Error Tracking (Sentry), Security Tools, potential DB license/support costs at scale.

- Estimated ROM: $0.5 - $1.5 Million / year

Compliance & Auditing (Ongoing):

- Activities: Regular security audits (penetration tests, compliance checks), maintaining certifications, legal reviews.

- Estimated ROM: $0.5 - $1 Million / year

Support (User & Developer):

- Activities: Tier 1/2 support for protocol participants and third-party plugin developers.

- Estimated ROM: $1 - $3 Million / year (Scales with user base)

The total annual operational cost is the sum of all components:

\[ C_{\text{op}} = C_{\text{cloud}} + C_{\text{engineering}} + C_{\text{software}} + C_{\text{compliance}} + C_{\text{support}} \]

Where:

- \(C_{\text{cloud}} = \$5 - \$15\) million/year (Cloud Infrastructure Costs)

- \(C_{\text{engineering}} = \$4 - \$6\) million/year (Ongoing Engineering, Maintenance & Operations)

- \(C_{\text{software}} = \$0.5 - \$1.5\) million/year (Software Licenses & Tooling)

- \(C_{\text{compliance}} = \$0.5 - \$1\) million/year (Compliance & Auditing)

- \(C_{\text{support}} = \$1 - \$3\) million/year (Support)

Total Estimated Annual Operations (Platform Only, ROM): $11 - $26.5 Million / year

Marginal Cost Analysis per User

The 5M MAU target is an illustrative milestone used for these initial ROM estimates, not the ultimate goal for the framework, which is to support hundreds of millions or billions of users. At this initial scale, you can analyze the cost on a per-user basis.

- Average Cost Range Per User (at 5M MAU): Based on the total annual operational cost range of $11M - $26.5M, the average cost per user is: \[ \frac{\$11{,}000{,}000 \text{ to } \$26{,}500{,}000}{5{,}000{,}000 \text{ users}} = \mathbf{\$2.20 \text{ to } \$5.30 \text{ per user per year}} \]

- Marginal Cost Per Additional User: As federated data infrastructure, the framework has high fixed costs (protocol development, core engineering) but very low variable costs. Therefore, the marginal cost of supporting one additional participant is expected to be a small fraction of the average cost, likely pennies per year. This cost will decrease further as the protocol achieves greater economies of scale, making it exceptionally efficient at supporting a global participant base.
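The annual operating total and the average cost per user can be reproduced from the component ranges listed above. A minimal sketch, with illustrative names:

```python
# Annual operating ROM ranges in millions of USD: (low, high).
OPS_COMPONENTS_MUSD = {
    "cloud": (5.0, 15.0),
    "engineering": (4.0, 6.0),
    "software": (0.5, 1.5),
    "compliance": (0.5, 1.0),
    "support": (1.0, 3.0),
}
TARGET_MAU = 5_000_000  # monthly active users at the illustrative milestone


def total_range(components: dict[str, tuple[float, float]]) -> tuple[float, float]:
    """Sum the low ends and the high ends of every component range."""
    low = sum(lo for lo, _ in components.values())
    high = sum(hi for _, hi in components.values())
    return low, high


def cost_per_user(total_musd: float, users: int = TARGET_MAU) -> float:
    """Average annual cost per user in USD."""
    return total_musd * 1_000_000 / users


if __name__ == "__main__":
    low, high = total_range(OPS_COMPONENTS_MUSD)
    print(f"annual operations: ${low}M - ${high}M")
    print(f"per user: ${cost_per_user(low):.2f} - ${cost_per_user(high):.2f}")
```

This covers only the average cost. The marginal cost claim depends on a fixed versus variable cost split that the estimate does not break out, so it is not modelled here.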

(Note: The underlying cloud infrastructure cost ($5M-$15M/year) is a top-down ROM estimate. A more granular, bottom-up analysis based on projected per-user storage, data transfer, and compute would provide further support for these figures and is a key area for future refinement of this model.)

Note on Participant Financial Contributions:

This cost estimate covers building the protocol infrastructure, not paying patients for trial participation. Trial participation costs would be handled separately through funding mechanisms (government grants, foundation funding, or sponsor payments). The protocol coordinates information exchange but doesn’t move money around directly.

This estimate excludes costs for governance structure and plugin development.

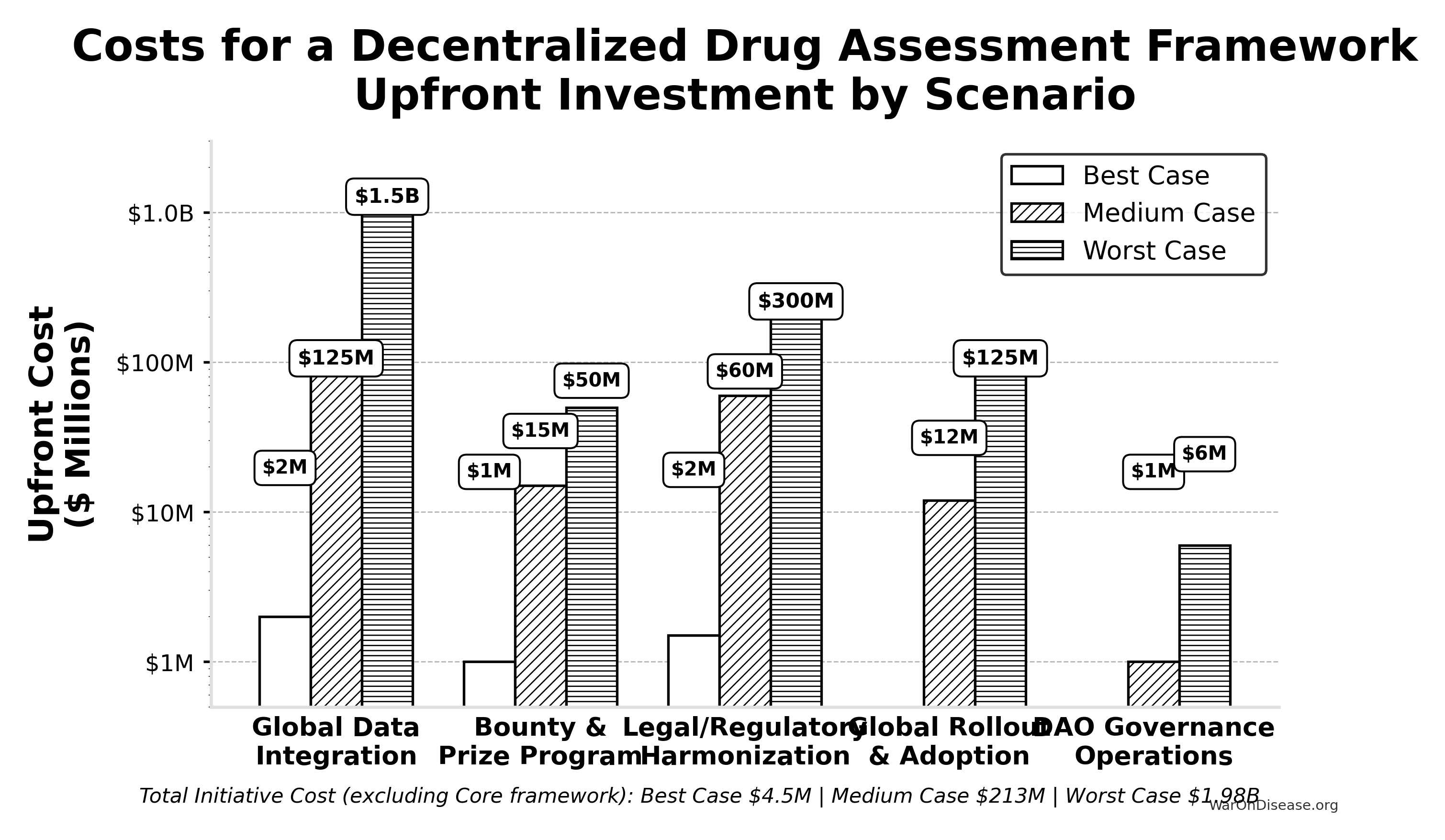

Scenario Based ROM Estimates for Broader Initiative Costs

This table presents point estimates for each scenario, with the overall range of possibilities captured by comparing the Best, Medium, and Worst Case columns.

| Component | Best Case (Upfront / Annual) | Medium Case (Upfront / Annual) | Worst Case (Upfront / Annual) | Key Assumptions & Variables Driving Range |
|---|---|---|---|---|
| Global Data Integration | $2M / ~$0 | $125M / $10M | $1.5B / $150M | Success of AI/automation, standards adoption, number of systems, vendor cooperation. |
| Bounty & Prize Program | $1M (Prizes) / ~$0 | $15M (Bounties) / $2M | $50M (Major Bounties) / $10M | Success of organic ecosystem growth vs. need to incentivize critical plugin/tool development via bounties. |
| Legal/Regulatory Harmonization | $1.5M / ~$0 | $60M / $3M | $300M / $30M | Effectiveness of AI legal tools, political will, complexity of global law. |
| Global Rollout & Adoption | ~$0 / ~$0 | $12M / $3M | $125M / $30M | Need for training/support beyond protocol adoption, user interface complexity. |
| Governance Operations | ~$0 / ~$0 | ~$1M / $0.3M | ~$6M / $1M | Automation level, need for audits, grants, core support staff. |
| TOTAL | ~$4.5M / ~$0 | ~$213M / ~$18.3M | ~$1.98B+ / ~$221M+ | Represents total initiative cost excluding Core framework build/ops. |
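As a cross-check, the TOTAL row can be recomputed from the component rows. This is a minimal sketch that treats every "~$0" entry as exactly zero (an assumption made only for the arithmetic); the data structure and names are illustrative.

```python
# (upfront, annual) costs in millions of USD per scenario, copied from the table above.
# "~$0" entries are treated as 0 for this check.
SCENARIOS_MUSD = {
    "Global Data Integration": {"best": (2, 0), "medium": (125, 10), "worst": (1500, 150)},
    "Bounty & Prize Program": {"best": (1, 0), "medium": (15, 2), "worst": (50, 10)},
    "Legal/Regulatory Harmonization": {"best": (1.5, 0), "medium": (60, 3), "worst": (300, 30)},
    "Global Rollout & Adoption": {"best": (0, 0), "medium": (12, 3), "worst": (125, 30)},
    "Governance Operations": {"best": (0, 0), "medium": (1, 0.3), "worst": (6, 1)},
}


def scenario_totals(case: str) -> tuple[float, float]:
    """Sum upfront and annual costs (millions of USD) across all components."""
    upfront = sum(rows[case][0] for rows in SCENARIOS_MUSD.values())
    annual = sum(rows[case][1] for rows in SCENARIOS_MUSD.values())
    return upfront, annual


for case in ("best", "medium", "worst"):
    upfront, annual = scenario_totals(case)
    print(f"{case:>6}: ${upfront:,.1f}M upfront / ${annual:,.1f}M per year")
```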

Interpretation

Even when pursuing efficient strategies, the potential cost of the full initiative for a decentralized framework (beyond the Core framework) varies dramatically with real-world execution challenges. The Medium Case suggests upfront costs in the low hundreds of millions and annual costs in the low tens of millions, while the Worst Case approaches $2 billion upfront with annual costs above $200 million, dominated by integration, plugin funding, and legal costs if automation and community efforts fall short.

Summary

Based on the detailed technical specification, a ROM estimate suggests:

- Initial Core framework Build (~2.5 years): ~$37.5 - $46 million

- Annual Core framework Operations (at ~5M MAU scale): ~$11 - $26.5 Million (These framework operational costs are distinct from the financial flows of patient contributions and the NIH Trial Participation Cost Discount Fund, and also exclude plugin ecosystem costs not covered by protocol bounties)

The core protocol infrastructure build costs tens of millions; the broader global initiative (integration, legal frameworks, rollout) accounts for the larger cost estimates detailed in the scenario table above.

Benefit Analysis - Quantifying the Savings

This section quantifies the potential societal benefits of a decentralized FDA, focusing primarily on R&D cost savings and health outcome improvements.

Market Size and Impact

The global pharmaceutical and medical device R&D market is vast. Annual global spending on clinical trials is approximately $60 billion (95% CI: $50 billion-$75 billion). Much of this spending could be made dramatically more efficient through protocol standardization. If such a framework enables even a fraction of these trials to use pragmatic designs, the economic impact will be substantial.

- Current Average Costs: Estimates suggest $2.6 billion (95% CI: $1.5 billion-$4 billion) to bring a new drug from discovery through FDA approval, spread across ~10 years.

- Clinical Trial Phase Breakdown:

  - Phase I: $2 - $5 million/trial (smaller scale).

  - Phase II: $10 - $50 million/trial (depending on disease area).

  - Phase III: $100 - $500 million/trial (large patient populations).

- Per-Patient Phase III Costs: Often $41,000 (95% CI: $20,000-$120,000) (site fees, overhead, staff, monitoring, data management).

Decentralized Trial Costs Modeled on Pragmatic Trials

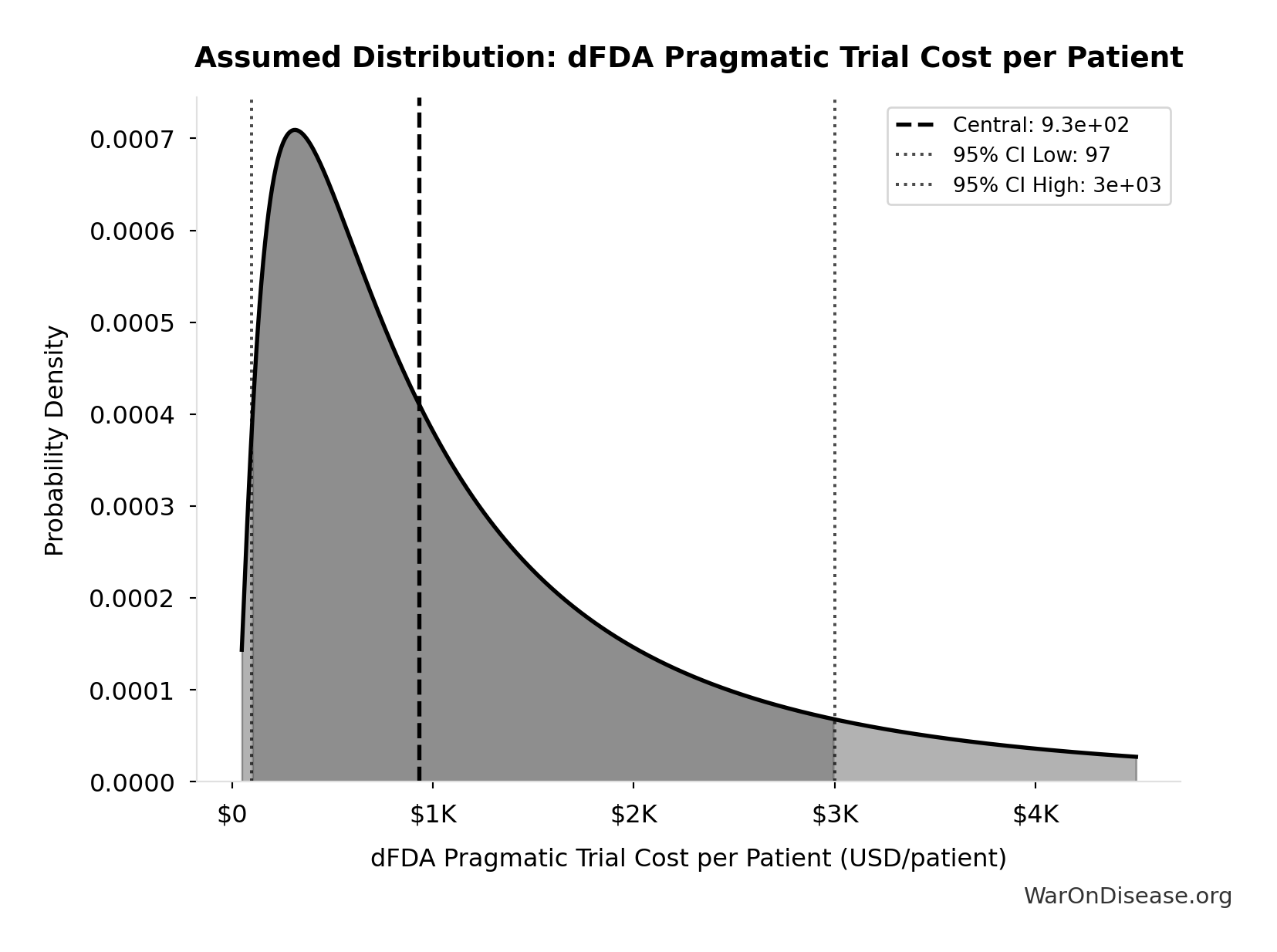

Oxford RECOVERY: Achieved ~$500 (95% CI: $400-$2,500). Key strategies included:

- Embedding trial protocols within routine hospital care.

- Minimizing overhead by leveraging existing staff/resources and electronic data capture.

- Focused, pragmatic trial designs.

Systematic Review Evidence: A systematic review of 64 embedded pragmatic clinical trials found a median cost per patient of $97 (95% CI: $19-$478) [90]. This indicates that low-cost execution is a replicable property of the pragmatic design, not an anomaly of any single trial.

ADAPTABLE Trial (PCORnet): The US-based ADAPTABLE trial [152] ($14 million (95% CI: $14 million-$20 million) / 15,076 patients = $929 (95% CI: $929-$1,400)/patient) provides a more representative benchmark for pragmatic trial costs in typical healthcare settings without emergency conditions.

Framework Cost Projection: Our projections use $929 (95% CI: $97-$3,000)/patient based on ADAPTABLE. The confidence interval ($97-$3,000) spans the range from the most efficient embedded trials (the systematic review median) to complex chronic disease trials.

Input: Pragmatic Trial Cost Distribution

This chart shows the assumed probability distribution for this parameter. The shaded region represents the 95% confidence interval where we expect the true value to fall.

Extrapolation to New System:

- A well-integrated global framework could achieve $929 (95% CI: $97-$3,000) in many cases, especially for pragmatic or observational designs.

- Up to ~44.1x (95% CI: 39.4x-89.1x) cost reduction is achievable by comparing pragmatic trial costs ($929 (95% CI: $97-$3,000)) against traditional costs of $41,000 (95% CI: $20,000-$120,000).

The cost reduction factor:

\[ \begin{gathered} k_{reduce} \\ = \frac{Cost_{P3,pt}}{Cost_{pragmatic,pt}} \\ = \frac{\$41K}{\$929} \\ = 44.1 \end{gathered} \]

The percentage reduction:

\[ \begin{gathered} Reduce_{pct} \\ = 1 - \frac{Cost_{pragmatic,pt}}{Cost_{P3,pt}} \\ = 1 - \frac{\$929}{\$41K} \\ = 97.7\% \end{gathered} \]
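The benchmark and the two ratios above are simple enough to verify directly. The following sketch uses only the central estimates quoted in the text; variable names are illustrative.

```python
# Central estimates quoted in the text (USD per patient).
TRADITIONAL_COST_PER_PATIENT = 41_000
PRAGMATIC_COST_PER_PATIENT = 929

# ADAPTABLE benchmark: total trial cost divided by enrolled patients.
adaptable_cost_per_patient = 14_000_000 / 15_076

# Cost reduction factor (k_reduce) and percentage reduction (Reduce_pct).
k_reduce = TRADITIONAL_COST_PER_PATIENT / PRAGMATIC_COST_PER_PATIENT
reduce_pct = 1 - PRAGMATIC_COST_PER_PATIENT / TRADITIONAL_COST_PER_PATIENT

print(f"ADAPTABLE: ${adaptable_cost_per_patient:,.0f} per patient")
print(f"Reduction factor: {k_reduce:.1f}x")
print(f"Percentage reduction: {reduce_pct:.1%}")
```

Note that the two quantities are the same fact in different units: `reduce_pct` equals `1 - 1 / k_reduce`.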

Scope of Cost Reduction

This reduction applies to trials amenable to pragmatic design, approximately 70% of Phase III trial volume by patient count (chronic disease management, comparative effectiveness, dose optimization). First-in-human studies, novel mechanism trials, and high-risk interventions retain traditional controlled protocols. The confidence interval ($97-$3,000/patient) captures this heterogeneity: simple comparative studies approach or beat RECOVERY-level efficiency, while complex trials fall toward the upper end of the range. The headline 97.7% (95% CI: 97%-99%) figure represents the weighted average across the addressable trial market, not a claim that every trial achieves this reduction.

Overall Savings

By Reducing Per-Patient Costs

- If a trial with 5,000 participants costs $929 (95% CI: $97-$3,000)/patient, total cost is ~$4.6 million, versus roughly $205 million at the traditional $41,000/patient (about $100 - $600 million across the traditional cost range).

- This magnitude of savings can drastically reduce the total cost of clinical development.

For a trial with \(x\) participants, the total cost savings is:

\[ S_{\text{trial}}(x) = (c_t - c_d) \cdot x \]

Where:

- \(c_t\) is the traditional cost per patient ($41,000 (95% CI: $20,000-$120,000))

- \(c_d\) is the decentralized cost per patient ($929 (95% CI: $97-$3,000))

For a trial with \(x = 5{,}000\) participants, savings are approximately:

\[ S_{\text{trial}}(5{,}000) = (\$41{,}000 - \$929) \times 5{,}000 \approx \$200\text{M per trial} \]
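A small sketch of \(S_{\text{trial}}(x)\) using the central per-patient estimates; the function and constant names are illustrative.

```python
TRADITIONAL_COST_PER_PATIENT = 41_000  # c_t, USD
PRAGMATIC_COST_PER_PATIENT = 929       # c_d, USD


def trial_savings(participants: int,
                  c_t: int = TRADITIONAL_COST_PER_PATIENT,
                  c_d: int = PRAGMATIC_COST_PER_PATIENT) -> int:
    """Total savings S_trial(x) = (c_t - c_d) * x in USD."""
    return (c_t - c_d) * participants


participants = 5_000
pragmatic_total = PRAGMATIC_COST_PER_PATIENT * participants
traditional_total = TRADITIONAL_COST_PER_PATIENT * participants
savings = trial_savings(participants)

print(f"Pragmatic total:   ${pragmatic_total:,}")
print(f"Traditional total: ${traditional_total:,}")
print(f"Savings:           ${savings:,}")
```

Because savings scale linearly in \(x\), the same function gives the savings for any trial size under these per-patient costs.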

Volume of Trials & Speed

- Faster, cheaper trials allow more drug candidates, off-label uses, nutraceuticals, and personalized dosing strategies to be tested.

- Shorter development cycles reduce carrying costs and risk, further increasing ROI for sponsors.

Regulatory Savings

- A unified protocol standard with automated data audits cuts bureaucratic duplication across multiple countries, drastically lowering compliance costs.

Transparent, Standardized Environment

- The transparent nature of the protocol creates a standardized operating environment. Outcome data is visible across the ecosystem, enabling sponsors to benchmark their trial designs and optimize operational costs, further driving down R&D expenditure beyond the technical efficiencies of pragmatic trials.

Economic Value of Earlier Access to Treatments

- Faster approvals and access to effective treatments can save lives and improve quality of life.

- Value of a Statistical Life (VSL): U.S. agencies use ~$10 million (95% CI: $5 million-$15 million) per life saved.

- QALY Framework: Standard willingness-to-pay is $100,000-$150,000 per QALY gained; this analysis uses $150,000 (95% CI: $100,000-$199,282).

- These benefits are additive to direct cost savings and can be substantial depending on the scale of acceleration.

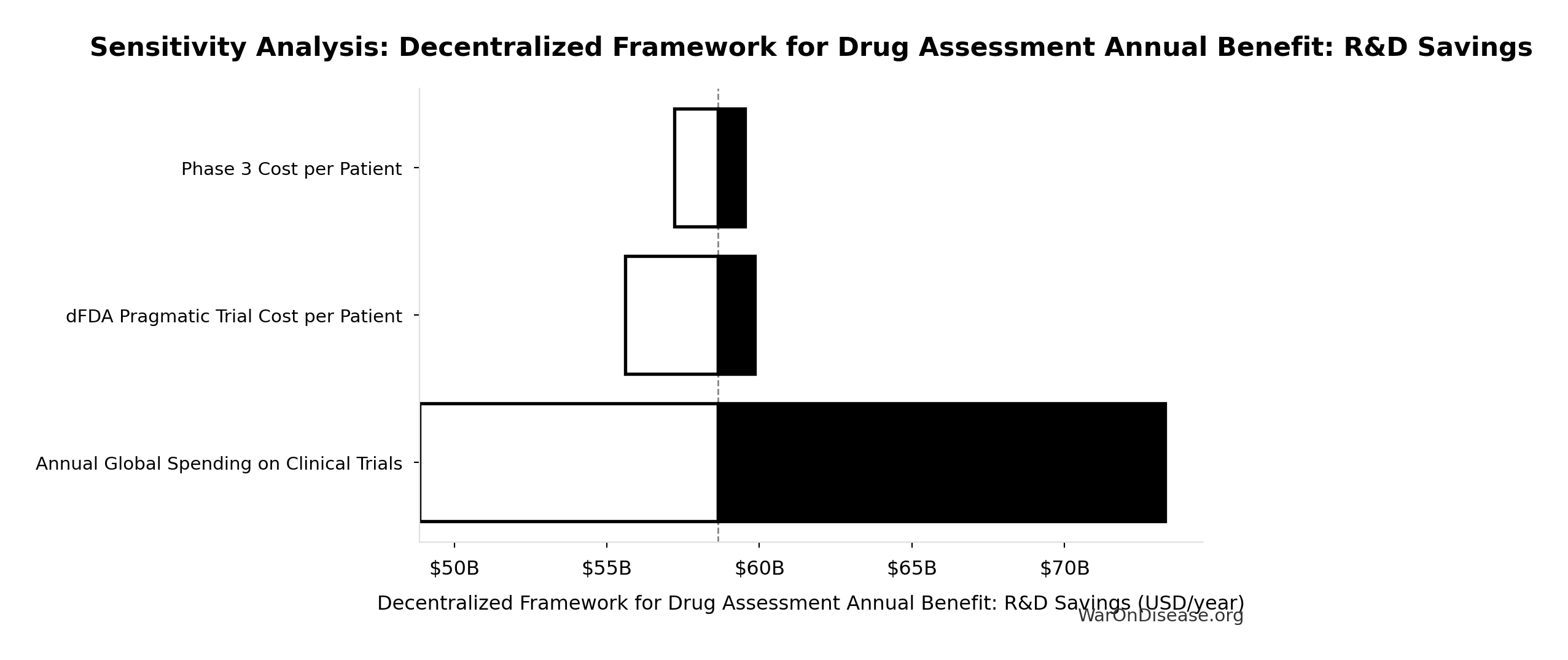

Gross R&D Savings from a Decentralized FDA

- Parameter: Percentage reduction in addressable clinical trial costs due to a decentralized FDA.

- Central Estimate: 97.7% (95% CI: 97%-99%) (44.1x (95% CI: 39.4x-89.1x))

- Source/Rationale:

  - Decentralized Clinical Trials (DCTs) demonstrate significant cost reductions [153] through reduced site management, travel, and streamlined data collection.

  - Empirical evidence: The ADAPTABLE trial achieved $929 (95% CI: $929-$1,400)/patient in routine US settings. A systematic review of embedded pragmatic trials found a median cost of $97 (95% CI: $19-$478)/patient.

  - Our estimate: $929 (95% CI: $97-$3,000)/patient (vs. $41,000 (95% CI: $20,000-$120,000) traditional). This deliberately uses ADAPTABLE as a conservative baseline; actual costs may be lower.

  - The confidence interval captures uncertainty from complex chronic disease trials to highly efficient EHR-integrated designs.

The annual gross R&D savings can be calculated as:

\[ S_{\text{annual}} = \alpha \cdot R_d \]

Where:

- \(\alpha \in [0,1]\) is the cost reduction percentage (as decimal)

- \(R_d\) = $60 billion (95% CI: $50 billion-$75 billion) global clinical trial spending

Base Case Calculation:

Using 97.7% (95% CI: 97%-99%) cost reduction (pragmatic trial costs of $929 (95% CI: $97-$3,000) vs traditional $41,000 (95% CI: $20,000-$120,000)):

\[
\begin{gathered}
Benefit_{RD,ann} \\
= Spending_{trials} \times Reduce_{pct} \\
= \$60B \times 97.7\% \\
= \$58.6B
\end{gathered}
\]

where:

\[
\begin{gathered}
Reduce_{pct} \\
= 1 - \frac{Cost_{pragmatic,pt}}{Cost_{P3,pt}} \\
= 1 - \frac{\$929}{\$41K} \\
= 97.7\%
\end{gathered}
\]

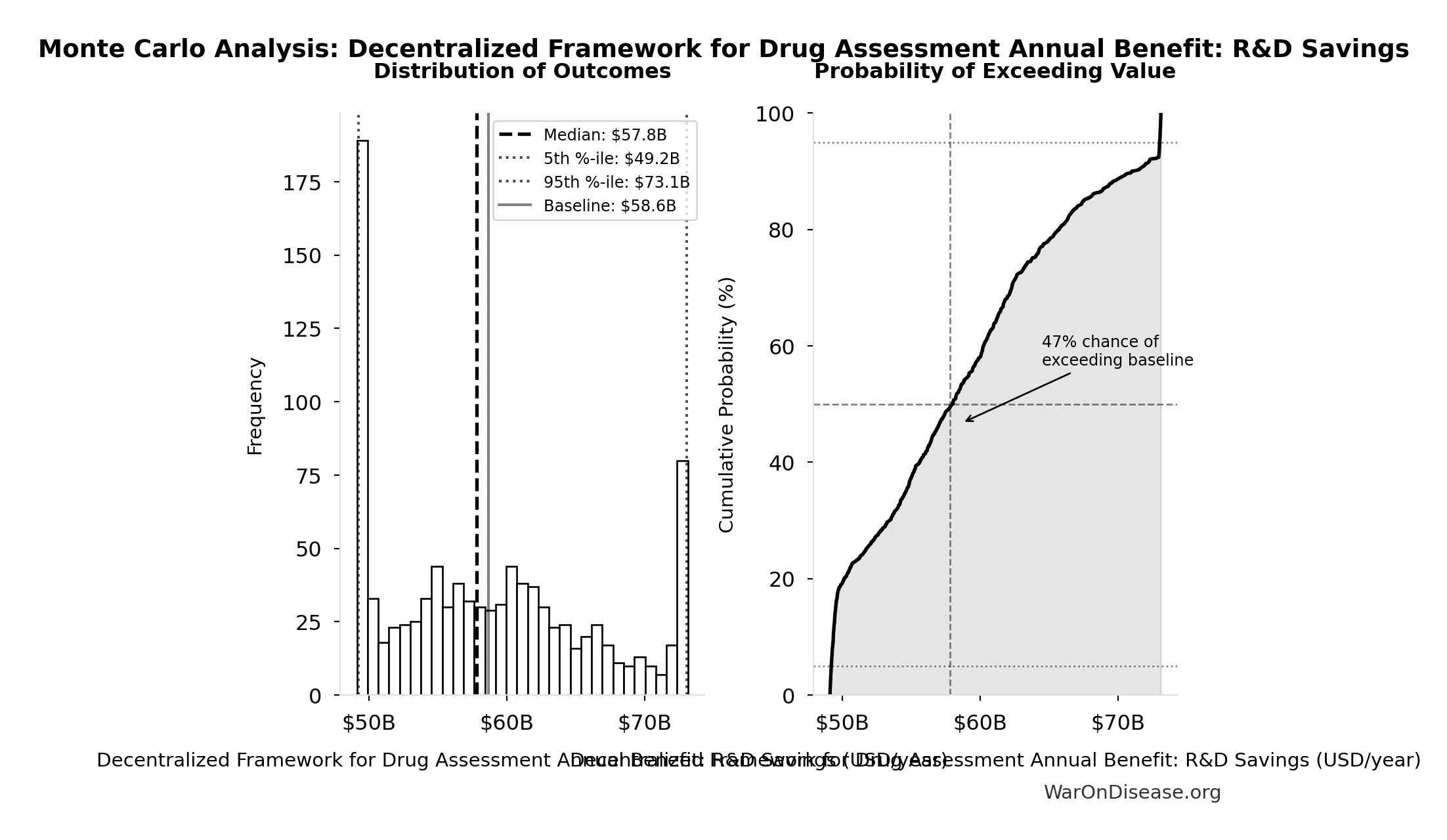

Uncertainty Analysis - R&D Savings:

Simulation Results Summary: Decentralized Framework for Drug Assessment Annual Benefit: R&D Savings

| Statistic | Value |
|---|---|
| Baseline (deterministic) | $58.6 billion |
| Mean (expected value) | $58.8 billion |
| Median (50th percentile) | $57.8 billion |
| Standard Deviation | $7.66 billion |
| 90% Range (5th-95th percentile) | [$49.2 billion, $73.1 billion] |

The histogram shows the distribution of Decentralized Framework for Drug Assessment Annual Benefit: R&D Savings across 10,000 Monte Carlo simulations. The CDF (right) shows the probability of the outcome exceeding any given value, which is useful for risk assessment.
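To illustrate how a summary like the one above is produced, here is a minimal Monte Carlo sketch. The input distributions are assumptions chosen only to respect the stated ranges (triangular for spending, uniform for the reduction percentage); the source does not specify its distributions, so the simulated statistics will not match the table exactly. Only the deterministic baseline is expected to reproduce the reported $58.6 billion.

```python
import random
import statistics

TRADITIONAL_COST_PER_PATIENT = 41_000
PRAGMATIC_COST_PER_PATIENT = 929
BASELINE_SPENDING_USD = 60e9


def annual_savings(spending_usd: float, reduction_fraction: float) -> float:
    """S_annual = alpha * R_d."""
    return spending_usd * reduction_fraction


baseline_reduction = 1 - PRAGMATIC_COST_PER_PATIENT / TRADITIONAL_COST_PER_PATIENT
baseline_savings = annual_savings(BASELINE_SPENDING_USD, baseline_reduction)


def simulate(n_draws: int = 10_000, seed: int = 0) -> list[float]:
    """Draw savings under ASSUMED input distributions (not the source's)."""
    rng = random.Random(seed)
    draws = []
    for _ in range(n_draws):
        spending = rng.triangular(50e9, 75e9, 60e9)  # assumed: low, high, mode
        reduction = rng.uniform(0.97, 0.99)          # assumed: stated 97%-99% range
        draws.append(annual_savings(spending, reduction))
    return draws


draws = simulate()
cuts = statistics.quantiles(draws, n=20)  # 19 cut points at 5% steps
p5, p95 = cuts[0], cuts[-1]
median = statistics.median(draws)

print(f"Baseline: ${baseline_savings / 1e9:.1f}B")
print(f"Mean:     ${statistics.mean(draws) / 1e9:.1f}B")
print(f"Median:   ${median / 1e9:.1f}B")
print(f"90% range: [${p5 / 1e9:.1f}B, ${p95 / 1e9:.1f}B]")
```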

Post-Safety Efficacy Lag Elimination

Relative Magnitude

Efficacy lag elimination (8.2 years (95% CI: 4.85 years-11.5 years)) is the smaller of the two timeline shift components. Discovery acceleration from 12.3x (95% CI: 4.2x-61.4x) trial capacity contributes 204 years (95% CI: 123 years-350 years), over 10× more health impact. This section details the efficacy lag component; see The Discovery Capacity Model for the dominant component.

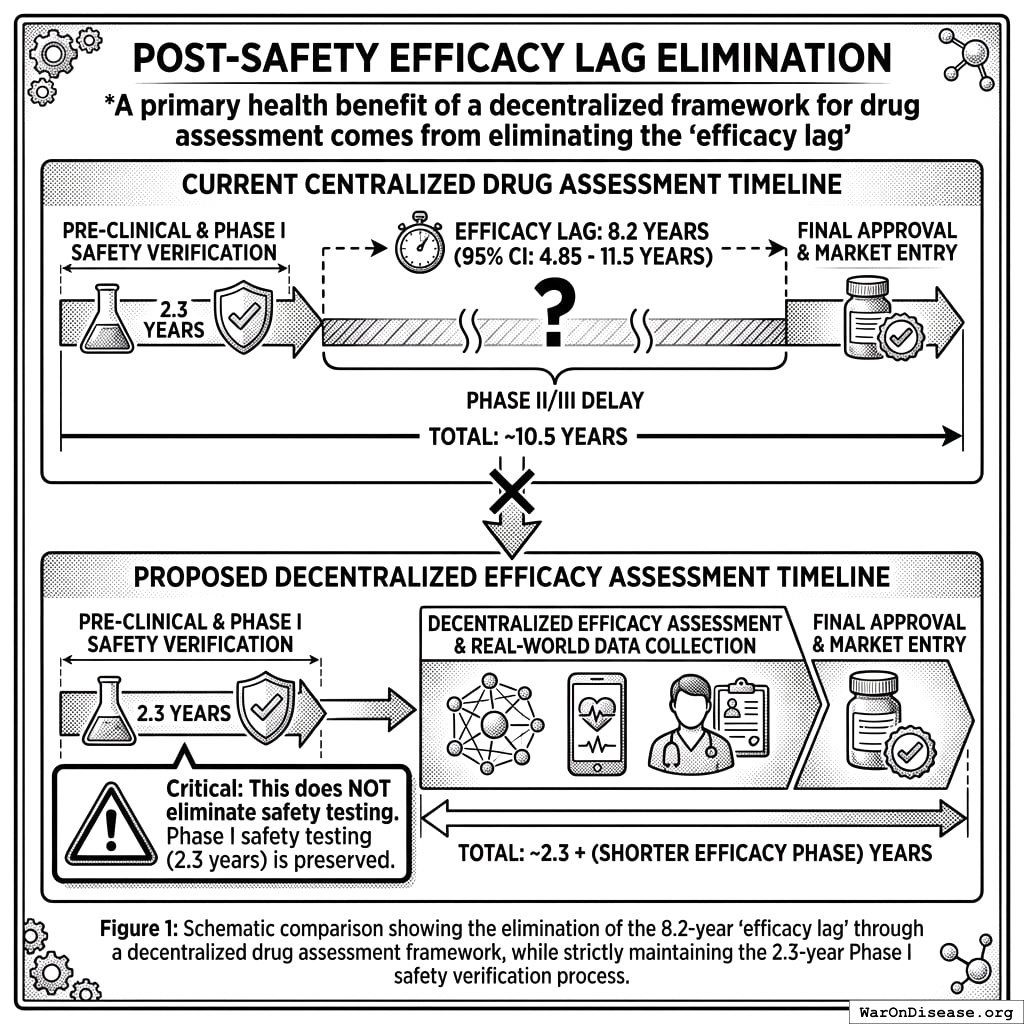

One of two health benefits of a decentralized FDA comes from eliminating the “efficacy lag”, the 8.2 years (95% CI: 4.85 years-11.5 years) Phase II/III delay between Phase I safety verification and final approval. Critical: This does NOT eliminate safety testing. Phase I safety testing (2.3 years) is preserved.

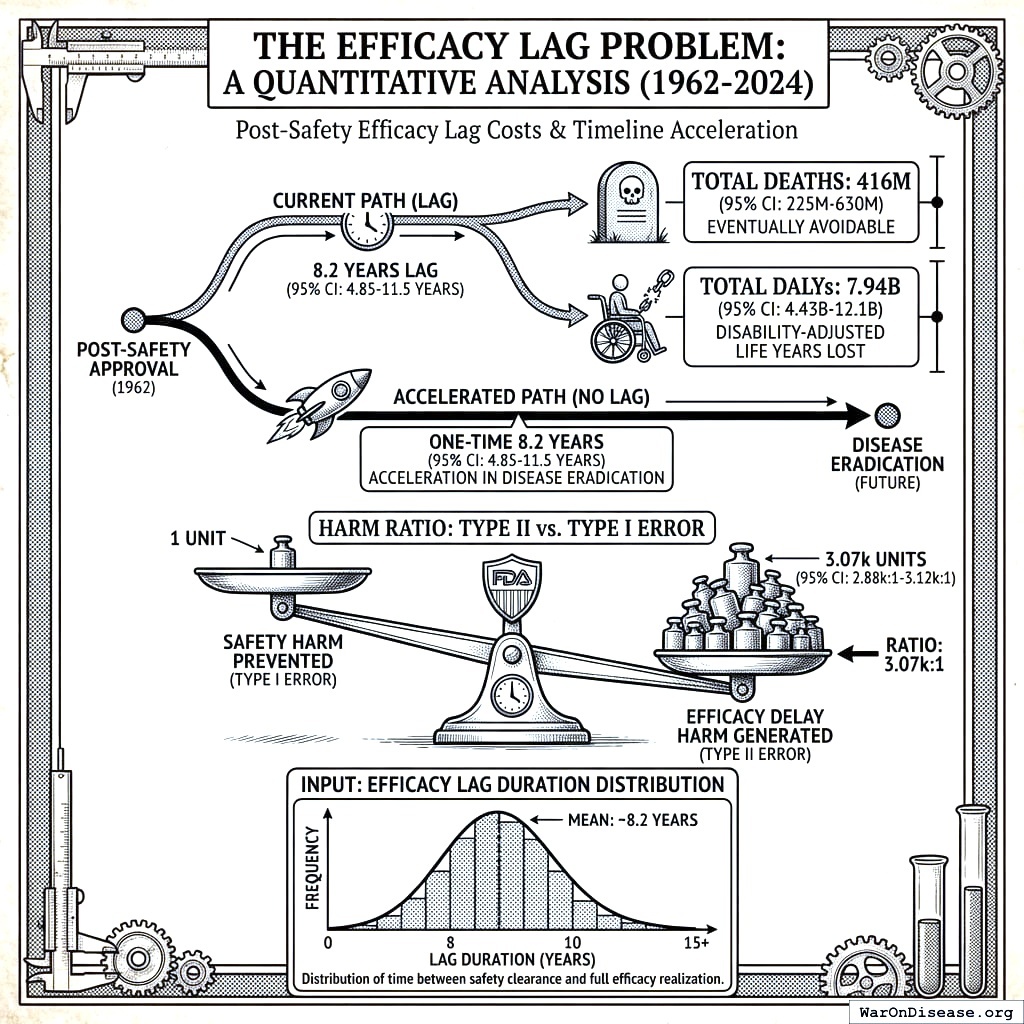

The Efficacy Lag Problem

A comprehensive quantitative analysis of post-safety efficacy lag costs (1962-2024) found:

- Total Deaths: 416 million (95% CI: 225 million-630 million) eventually avoidable deaths attributable to the 8.2 years (95% CI: 4.85 years-11.5 years) efficacy lag (1962-2024)

- Total DALYs: 7.94 billion Disability-Adjusted Life Years lost

- Total Timeline Shift: One-time 8.2 years (95% CI: 4.85 years-11.5 years) acceleration in disease eradication

The analysis shows that for every 1 unit of harm the FDA prevents through safety testing, it generates 3,068 (95% CI: 2,878-3,125) units of harm through efficacy delay (Type II vs. Type I error ratio).

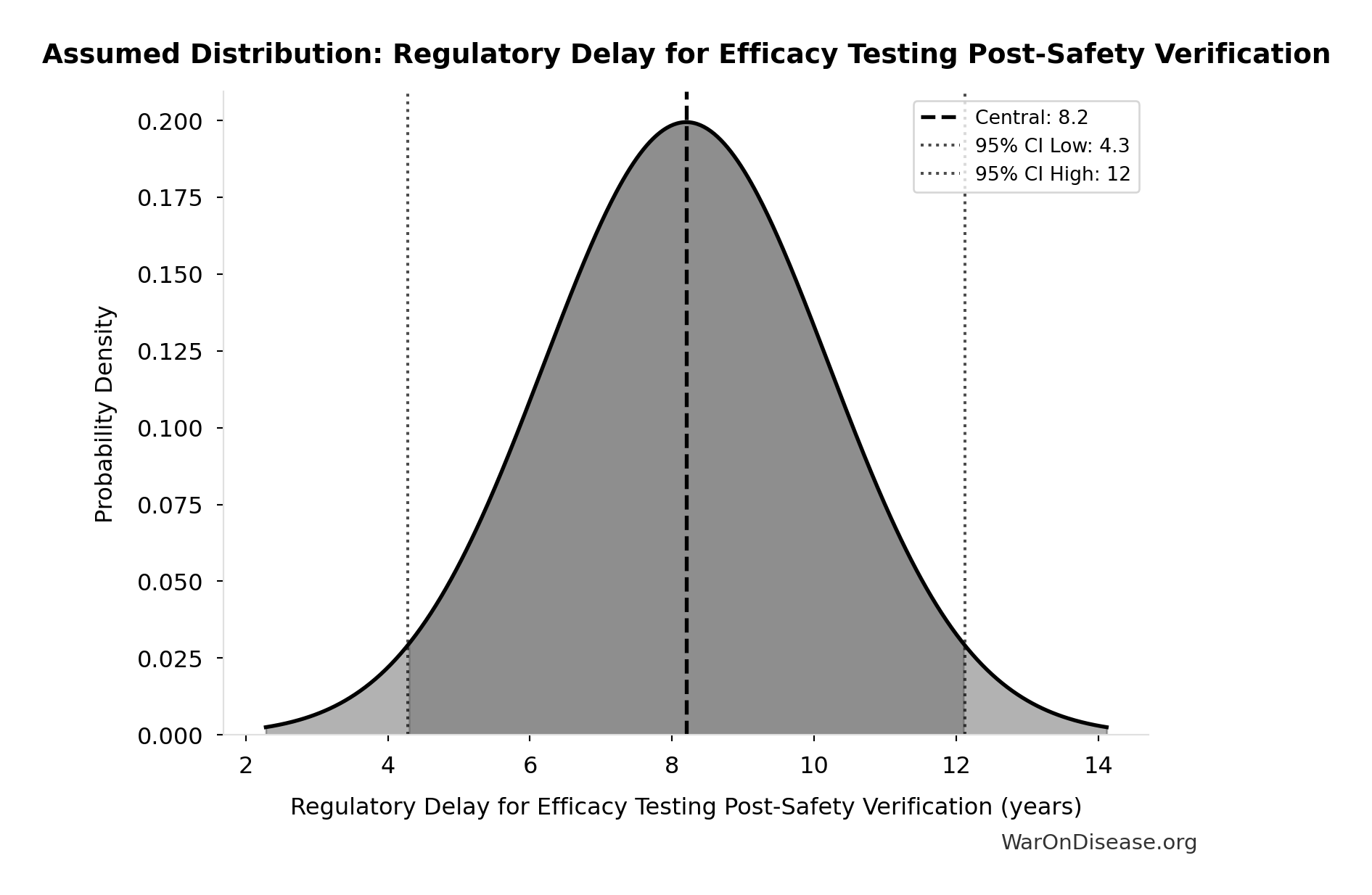

Input: Efficacy Lag Duration Distribution

This chart shows the assumed probability distribution for this parameter. The shaded region represents the 95% confidence interval where we expect the true value to fall.

How a Decentralized Framework Eliminates the Efficacy Lag

Such a framework provides provisional access post-Phase I via trial participation:

- Phase I Safety Testing: Maintained at 2.3 years (no change)

- Post-Phase I Access: Patients can access drugs through trial participation immediately after safety verification

- Continuous Efficacy Monitoring: Real-world evidence replaces the 8.2 years (95% CI: 4.85 years-11.5 years) pre-market efficacy delay

This eliminates the post-safety efficacy lag (the Phase II/III portion, while preserving Phase I safety testing) by enabling real-world evidence collection during trials.

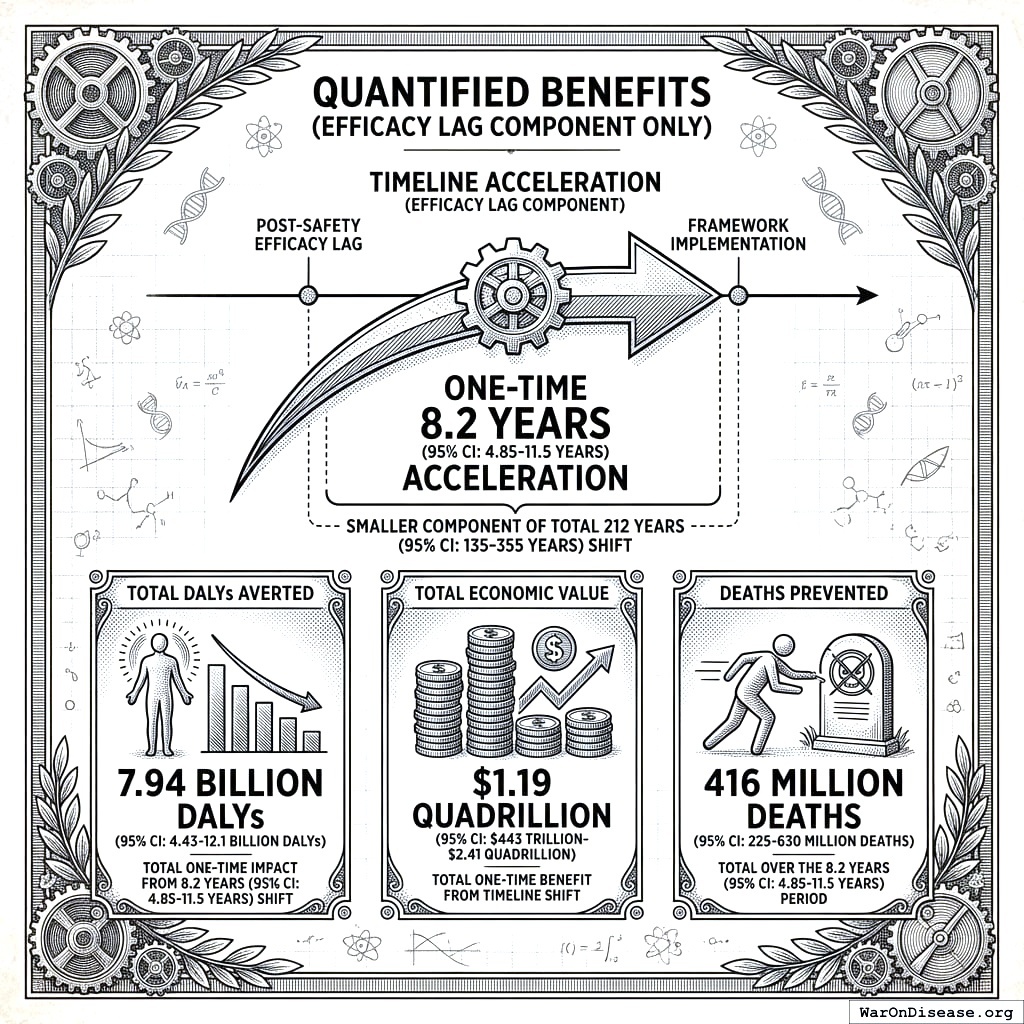

Quantified Benefits (Efficacy Lag Component Only)

The elimination of the post-safety efficacy lag by such a framework achieves a one-time 8.2 years (95% CI: 4.85 years-11.5 years) timeline acceleration (the smaller component of the total 212 years (95% CI: 135 years-355 years) shift):

- Total DALYs Averted: 7.94 billion DALYs (95% CI: 4.43 billion DALYs-12.1 billion DALYs) (total one-time impact from 8.2 years (95% CI: 4.85 years-11.5 years) timeline shift)

- Total Economic Value: $1.19 quadrillion (95% CI: $443 trillion-$2.41 quadrillion) (7.94 billion DALYs (95% CI: 4.43 billion DALYs-12.1 billion DALYs) at $150,000/DALY, cumulative over 8.2 years (95% CI: 4.85 years-11.5 years) of accelerated treatment access)

- Deaths Prevented: 416 million deaths (95% CI: 225 million deaths-630 million deaths) (total over the 8.2 years (95% CI: 4.85 years-11.5 years) period)

\[
DALYs_{lag} = YLL_{lag} + YLD_{lag} = 7.07B + 873M = 7.94B
\]

where:

\[
\begin{gathered}
YLL_{lag} \\
= Deaths_{lag} \times (LE_{global} - Age_{death,delay}) \\
= 416M \times (79 - 62) \\
= 7.07B
\end{gathered}
\]

where:

\[
\begin{gathered}
Deaths_{lag} \\
= T_{lag} \times Deaths_{disease,daily} \times 338 \\
= 8.2 \times 150{,}000 \times 338 \\
= 416M
\end{gathered}
\]

where:

\[
\begin{gathered}
YLD_{lag} \\
= Deaths_{lag} \times T_{suffering} \times DW_{chronic} \\
= 416M \times 6 \times 0.35 \\
= 873M
\end{gathered}
\]

\[
\begin{gathered}
Value_{lag} \\
= DALYs_{lag} \times Value_{QALY} \\
= 7.94B \times \$150K \\
= \$1{,}190T
\end{gathered}
\]

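The chain of equations for the efficacy-lag component can be reproduced end to end with a short sketch. All inputs are the central values shown in the formulas; the factor 338 is used exactly as it appears there, without interpreting it. Names are illustrative.

```python
# Central inputs as they appear in the equations above.
T_LAG_YEARS = 8.2                 # post-safety efficacy lag
DAILY_DISEASE_DEATHS = 150_000    # Deaths_{disease,daily}
DAY_FACTOR = 338                  # multiplier taken as-is from the source formula
LIFE_EXPECTANCY = 79              # LE_{global}
MEAN_AGE_AT_DEATH = 62            # Age_{death,delay}
SUFFERING_YEARS = 6               # T_{suffering}
DISABILITY_WEIGHT = 0.35          # DW_{chronic}
VALUE_PER_DALY_USD = 150_000      # Value_{QALY}

deaths_lag = T_LAG_YEARS * DAILY_DISEASE_DEATHS * DAY_FACTOR
yll_lag = deaths_lag * (LIFE_EXPECTANCY - MEAN_AGE_AT_DEATH)
yld_lag = deaths_lag * SUFFERING_YEARS * DISABILITY_WEIGHT
dalys_lag = yll_lag + yld_lag
value_lag_usd = dalys_lag * VALUE_PER_DALY_USD

print(f"Deaths: {deaths_lag / 1e6:,.0f}M")
print(f"YLL:    {yll_lag / 1e9:.2f}B")
print(f"YLD:    {yld_lag / 1e6:,.0f}M")
print(f"DALYs:  {dalys_lag / 1e9:.2f}B")
print(f"Value:  ${value_lag_usd / 1e15:.2f} quadrillion")
```

Every output is linear in `T_LAG_YEARS`, which is why the confidence interval on the lag duration carries through almost directly to the DALY and value ranges.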

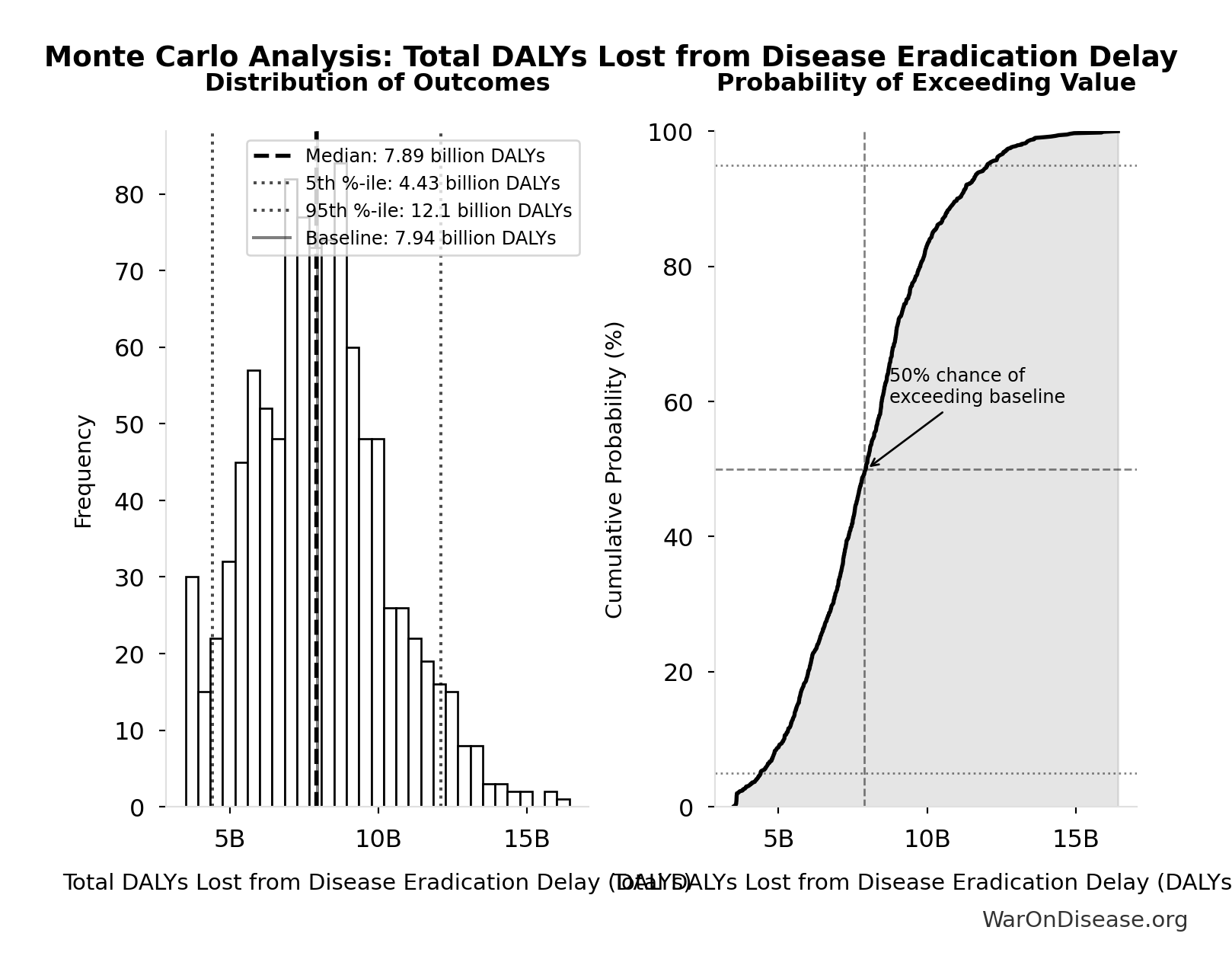

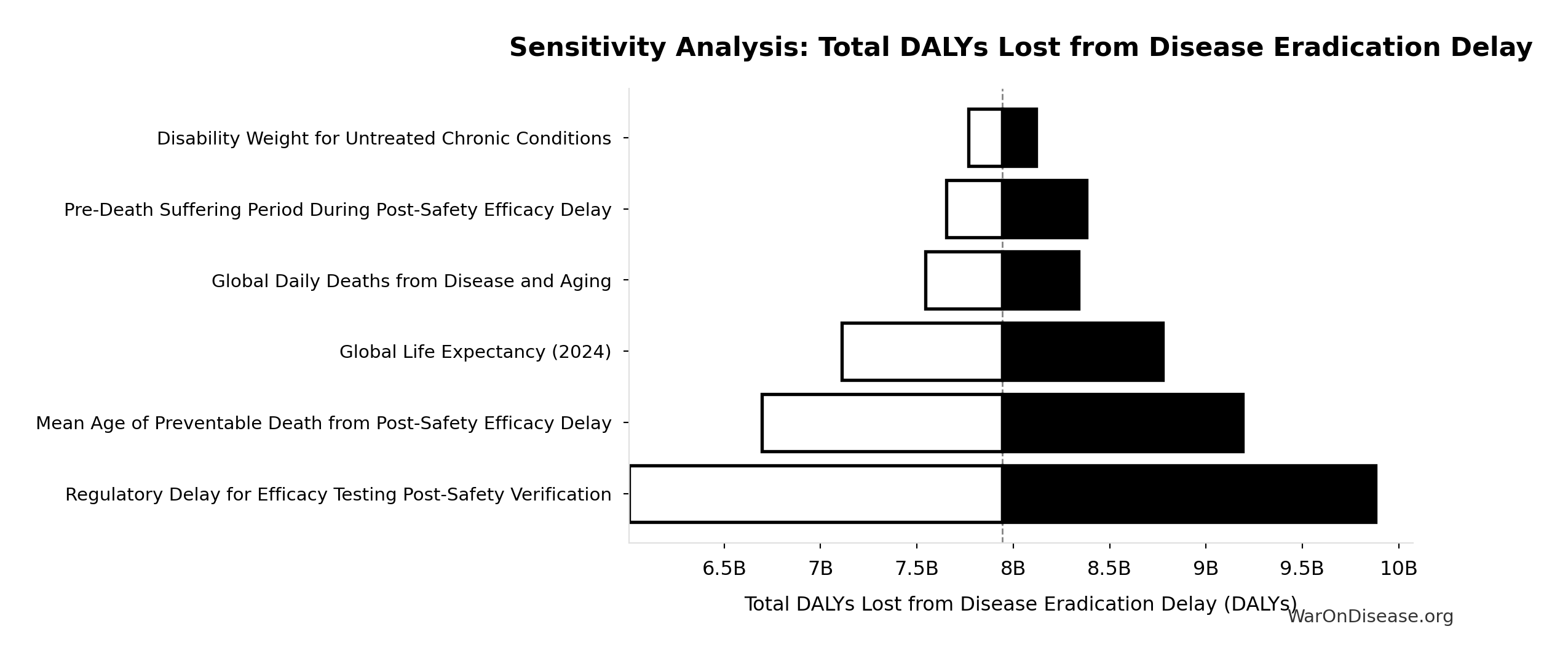

Efficacy Lag Elimination - Uncertainty Analysis

Simulation Results Summary: Total DALYs Lost from Disease Eradication Delay

| Statistic | Value |
|---|---|
| Baseline (deterministic) | 7.94 billion |
| Mean (expected value) | 8.05 billion |
| Median (50th percentile) | 7.89 billion |
| Standard Deviation | 2.31 billion |
| 90% Range (5th-95th percentile) | [4.43 billion, 12.1 billion] |

The histogram shows the distribution of Total DALYs Lost from Disease Eradication Delay across 10,000 Monte Carlo simulations. The CDF (right) shows the probability of the outcome exceeding any given value, which is useful for risk assessment.

This represents the health benefits from eliminating the post-safety efficacy lag only. The efficacy lag (8.2 years (95% CI: 4.85 years-11.5 years)) is one of two components of the total timeline shift:

- Efficacy lag elimination: 8.2 years (95% CI: 4.85 years-11.5 years) (this section)

- Trial capacity/discovery acceleration: 204 years (95% CI: 123 years-350 years) (from 12.3x (95% CI: 4.2x-61.4x) more trials)

The combined total timeline shift is 212 years (95% CI: 135 years-355 years), yielding 10.7 billion deaths (95% CI: 7.4 billion deaths-16.2 billion deaths) and 565 billion DALYs (95% CI: 361 billion DALYs-877 billion DALYs). See The Receipts for the combined totals.

For detailed methodology and assumptions on efficacy lag, see https://invisible-graveyard.warondisease.org.

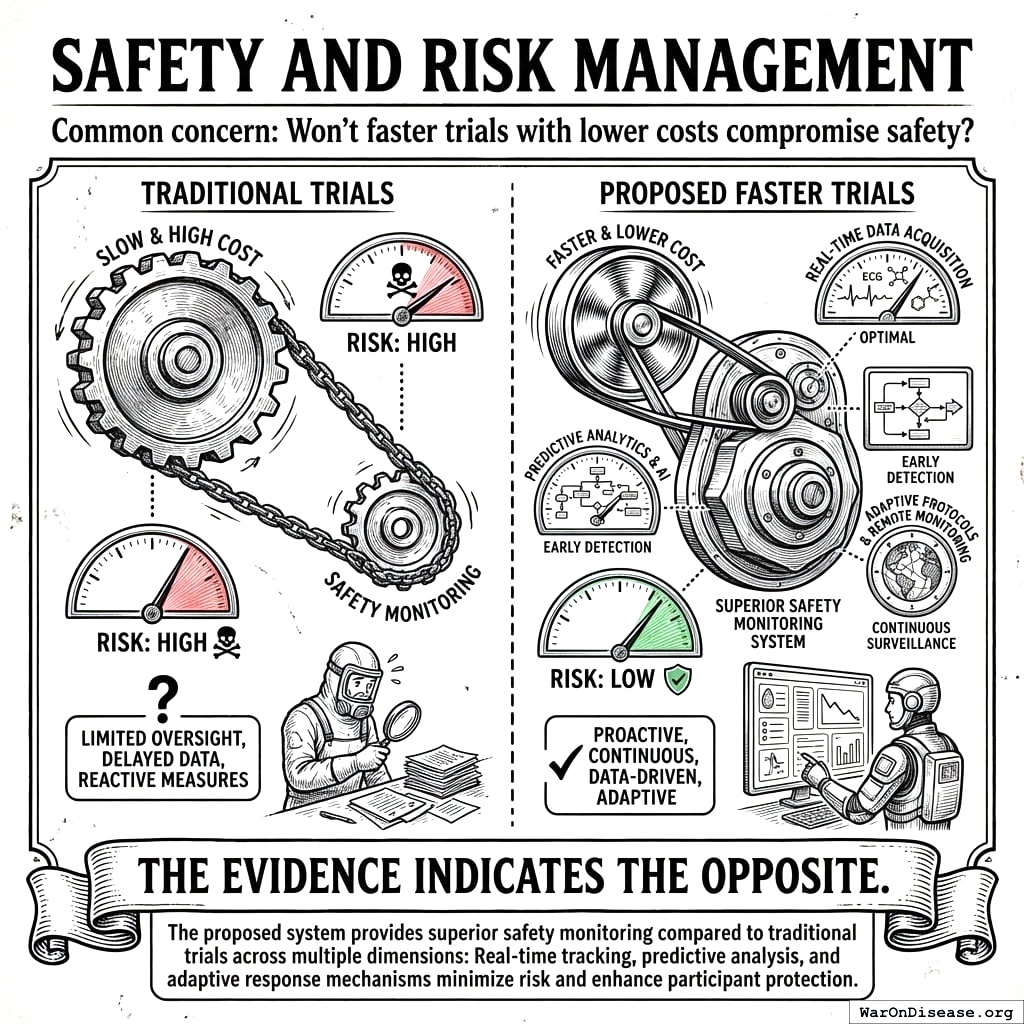

Safety and Risk Management

Common concern: Won’t faster trials with lower costs compromise safety?

The evidence indicates the opposite. The proposed system provides superior safety monitoring compared to traditional trials across multiple dimensions.

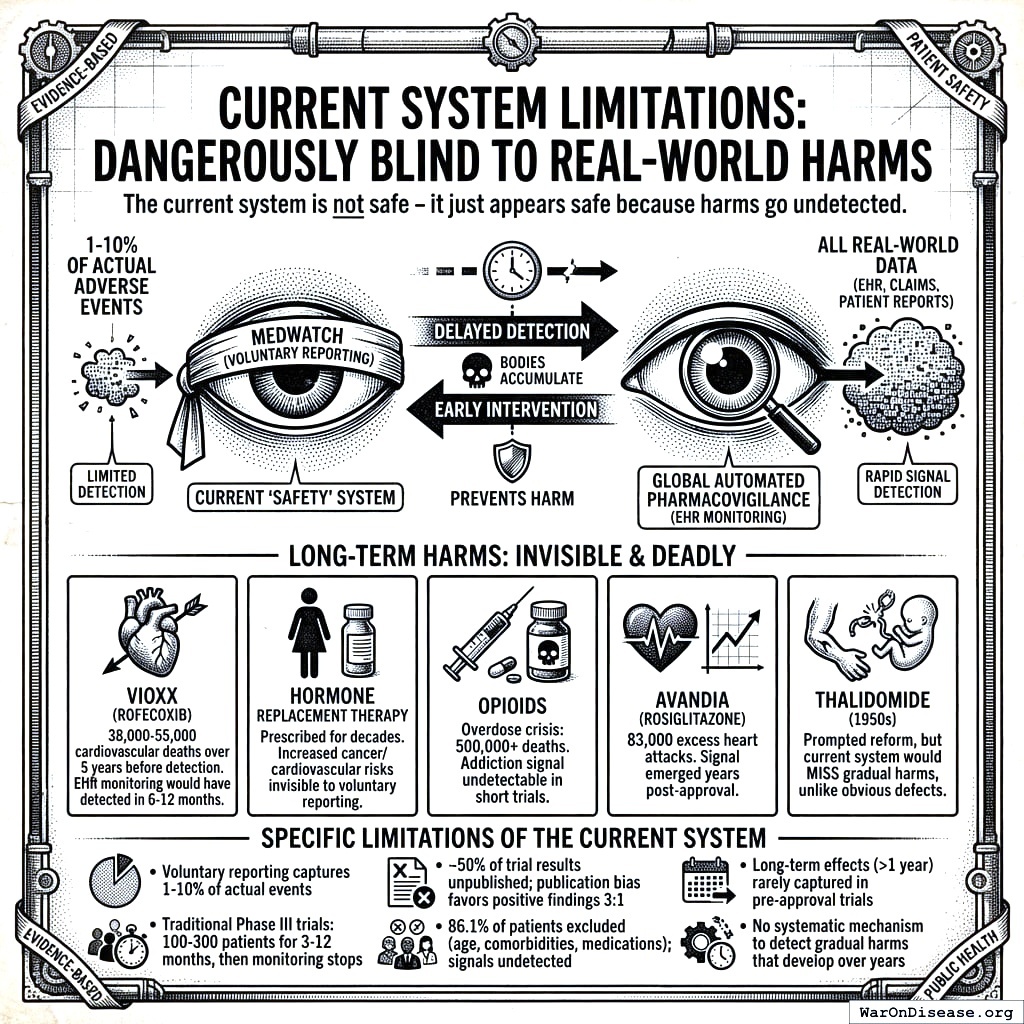

Current System Limitations: Dangerously Blind to Real-World Harms

Caution

The current system is not safe - it just appears safe because harms go undetected.

The FDA’s voluntary adverse event reporting system (MedWatch) captures only 1-10% of actual adverse events. Long-term harms that develop gradually over years - the most insidious and deadly kind - are virtually invisible:

- Vioxx (rofecoxib): Caused 38,000-55,000 cardiovascular deaths over 5 years before detection through voluntary reporting. With automated EHR pharmacovigilance, the elevated MI risk would have been detected within 6-12 months.

- Hormone Replacement Therapy: Prescribed for decades before the Women’s Health Initiative revealed increased cancer and cardiovascular risk - risks invisible to voluntary reporting

- Opioids: The overdose crisis killed 500,000+ Americans; the addiction signal was undetectable in short trials with cherry-picked populations

- Avandia (rosiglitazone): 83,000 excess heart attacks estimated before restrictions; signal emerged years post-approval

- Thalidomide (1950s): The disaster that prompted regulatory reform - yet the current system would still miss a thalidomide-like harm if it manifested gradually rather than as obvious birth defects

The current “safety” system doesn’t prevent harm - it delays detection until bodies accumulate. A global automated pharmacovigilance system with continuous EHR monitoring would detect these signals in months, not years or decades.

Specific limitations of the current system:

- Voluntary adverse event reporting captures only 1-10% of actual events

- Traditional Phase III trials test 100-300 patients for 3-12 months, then monitoring stops

- Approximately 50% of trial results go unpublished, with publication bias favoring positive findings 3:1

- 86.1% of patients excluded due to age, comorbidities, or medications - safety signals in these populations go undetected

- Long-term effects (>1 year) rarely captured in pre-approval trials

- No systematic mechanism to detect gradual harms that develop over years

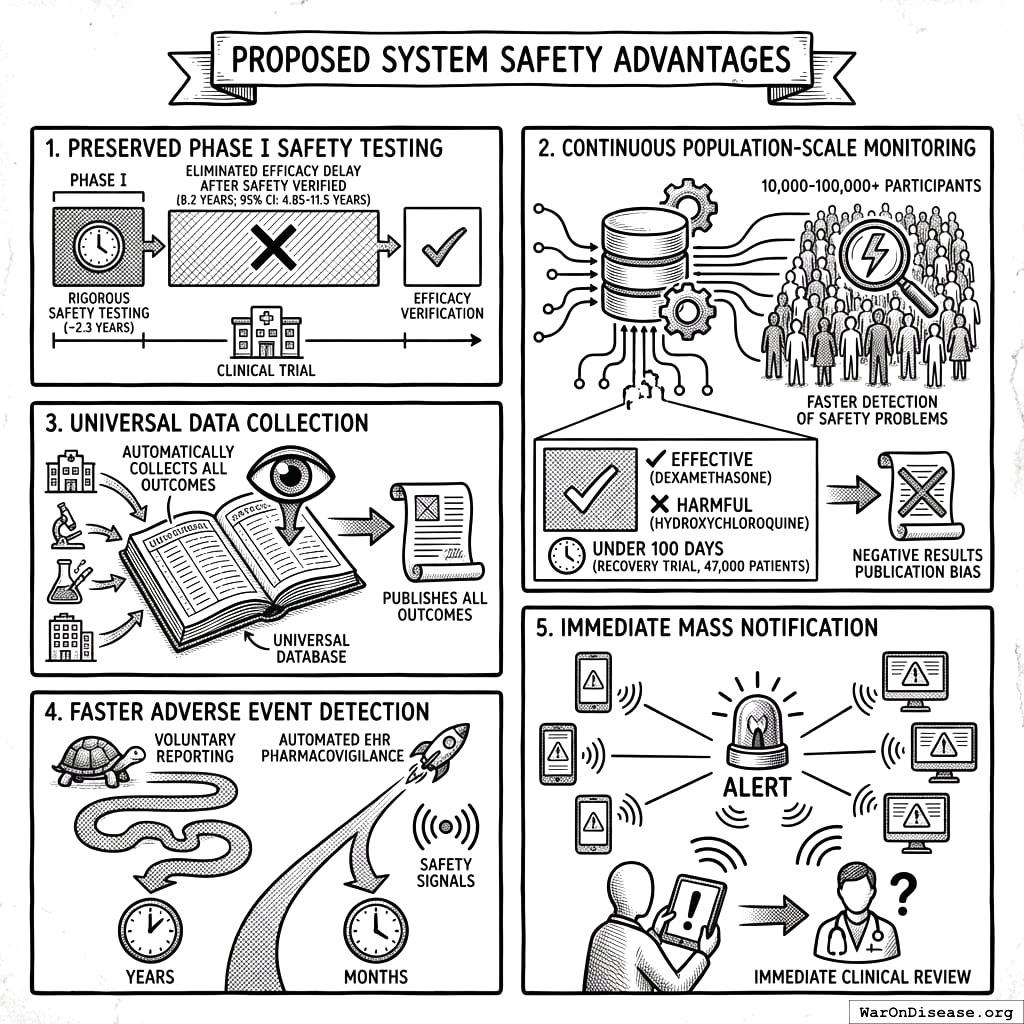

Proposed System Safety Advantages

- Preserved Phase I Safety Testing: Rigorous Phase I safety testing (~2.3 years) is maintained. What changes is eliminating the 8.2 years (95% CI: 4.85 years-11.5 years) efficacy delay after safety is verified.

- Continuous Population-Scale Monitoring: Pragmatic trials with 10,000-100,000+ participants monitored continuously through EHR integration detect safety problems faster than small, time-limited traditional trials. The RECOVERY trial identified both effective treatments (dexamethasone) and harmful ones (hydroxychloroquine) in under 100 days with 47,000 patients.

- Universal Data Collection: The system automatically collects and publishes outcome data on all treatments, eliminating the publication bias that currently hides negative results.

- Faster Adverse Event Detection: Automated EHR pharmacovigilance detects safety signals in months rather than the years required by voluntary reporting systems.

- Immediate Mass Notification: When safety signals are detected, all patients taking the drug receive automated alerts through patient portals, enabling immediate clinical review.

Detection Timeline Comparison

The following table quantifies how automated EHR monitoring would have changed outcomes for major drug safety disasters:

| Drug | Harm | Voluntary Reporting Detection | Projected EHR Detection | Deaths or Events During Delay |
|---|---|---|---|---|
| Vioxx | Cardiovascular events | 5 years (1999-2004) | 6-12 months | 38,000-55,000 deaths |
| Avandia | Heart attacks | 8 years post-approval | 12-18 months | ~83,000 excess events |
| Opioids | Addiction/overdose | Decades | 2-3 years | 500,000+ deaths |
| HRT | Cancer/cardiovascular | 40+ years | 3-5 years | Unknown (millions affected) |

Why the difference? Automated EHR surveillance compares treated patients to matched controls continuously. The Vioxx cardiovascular signal would trigger statistical alerts after ~5,000 prescriptions (1.2% elevated event rate vs. expected background). Voluntary reporting requires doctors to notice, remember, and file paperwork, capturing only 1-10% of events.

Comparative Safety Surveillance

| Safety Dimension | Traditional Trials | Pragmatic Trials + EHR Monitoring |
|---|---|---|
| Sample size | 100-300 patients | 10,000-100,000+ patients |
| Patient selection | 86.1% excluded | All volunteers (real-world populations) |
| Monitoring duration | 3-12 months (then stops) | Continuous via EHR (indefinite) |
| Publication rate | ~50% unpublished | 100% automatically published |
| Adverse event detection | Voluntary reporting (1-10% capture) | Automated surveillance (100% capture) |

Pooled Liability Insurance

The framework includes pooled liability coverage for sponsors, reducing individual company risk while ensuring patient compensation for adverse events. This removes a major barrier to trial participation for smaller sponsors while maintaining accountability.

Type II Error Dominance: For every person protected from an unsafe drug (Type I error prevention), 3,068 (95% CI: 2,878-3,125) people die from delayed access to beneficial treatments (Type II errors). In other words, the current system prevents harm from unsafe drugs but causes roughly 3,068 times as many deaths through delay. Phase I safety testing is preserved in this framework; the ratio quantifies the cost of the efficacy lag, not safety testing. (See Historical Validation for the thalidomide context.)

ROI Analysis for a Decentralized FDA

Monte Carlo Distributions

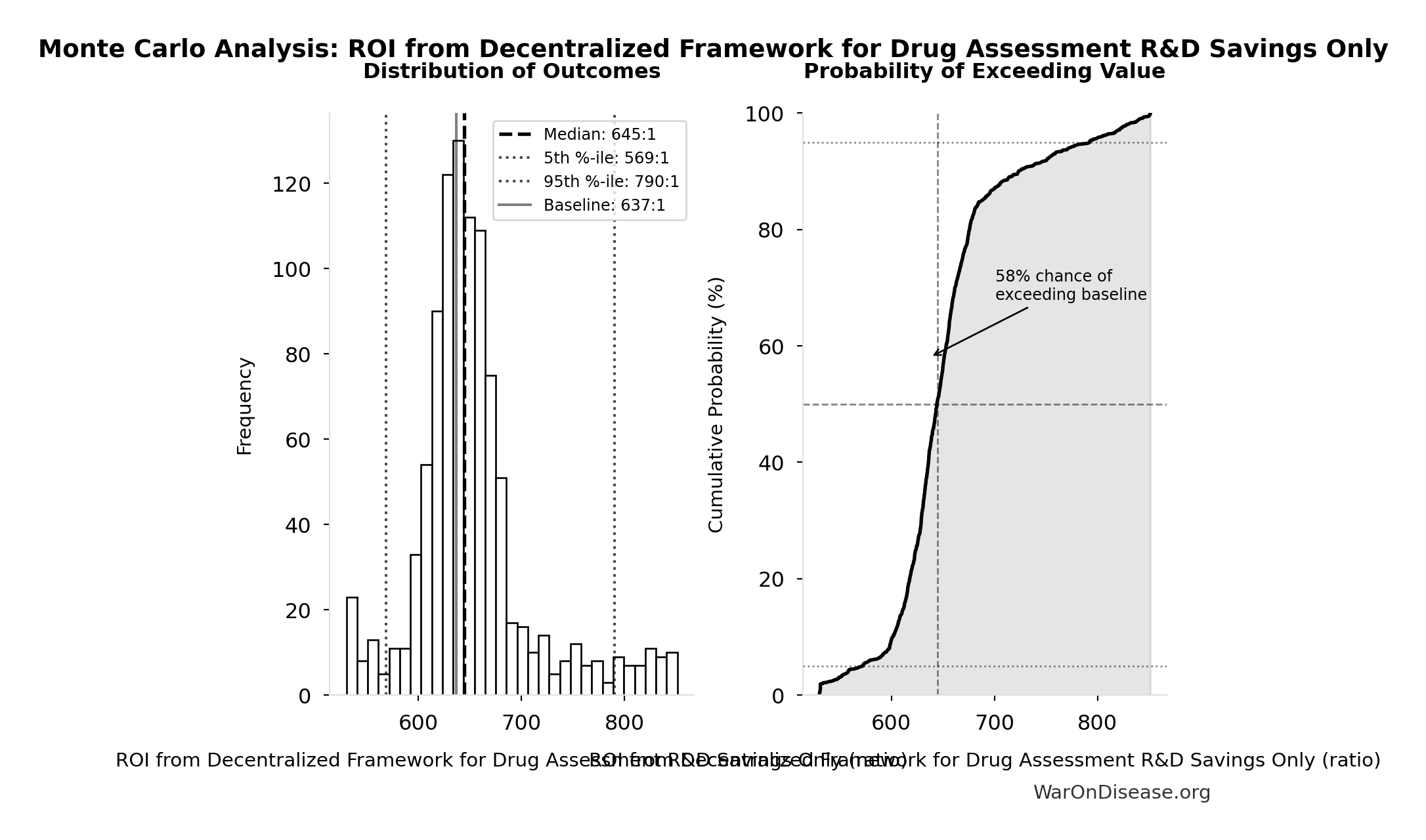

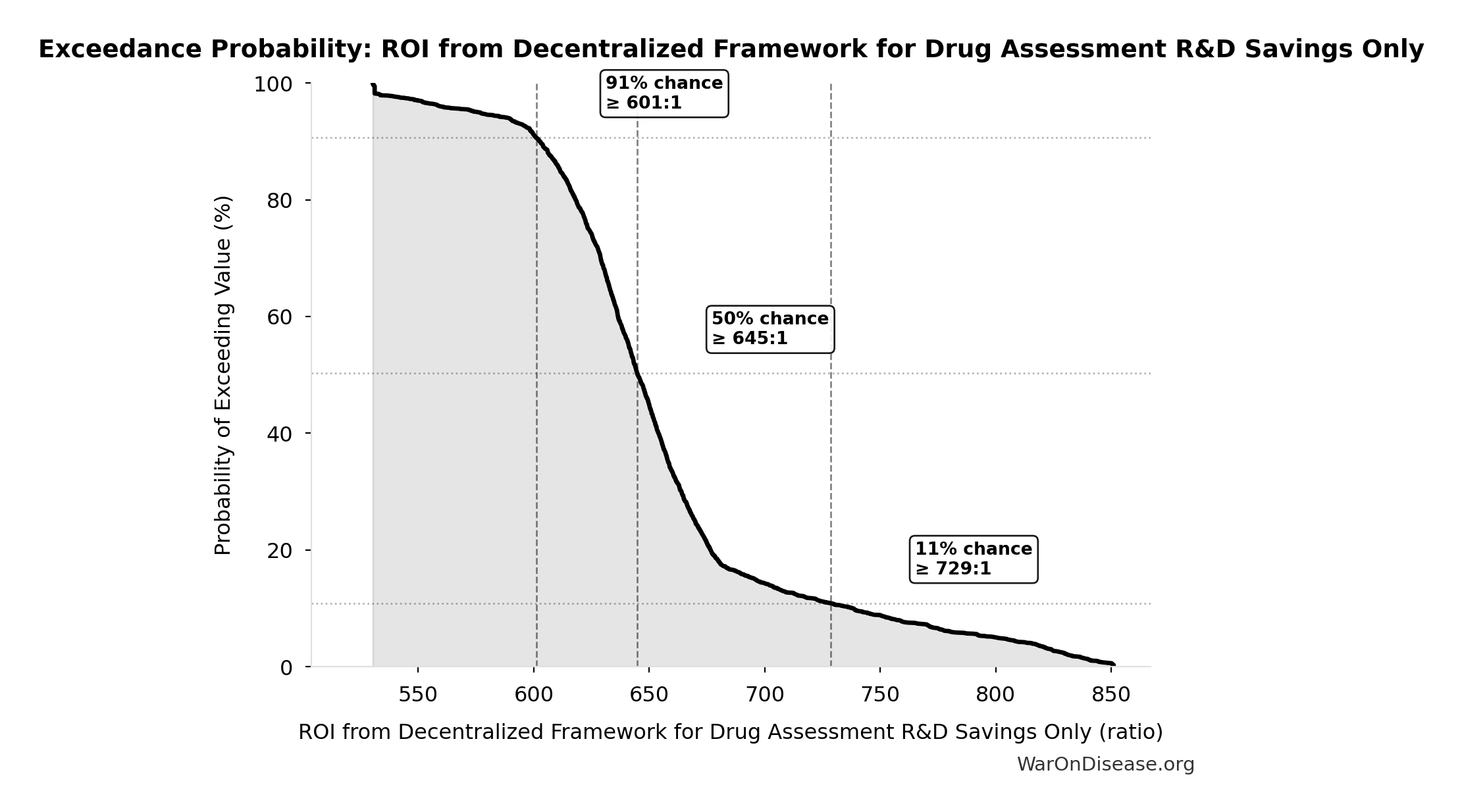

Simulation Results Summary: ROI from Decentralized Framework for Drug Assessment R&D Savings Only

| Statistic | Value |
|---|---|
| Baseline (deterministic) | 637:1 |
| Mean (expected value) | 653:1 |
| Median (50th percentile) | 645:1 |
| Standard Deviation | 58.4:1 |
| 90% Range (5th-95th percentile) | [569:1, 790:1] |

The histogram shows the distribution of ROI from Decentralized Framework for Drug Assessment R&D Savings Only across 10,000 Monte Carlo simulations. The CDF (right) shows the probability of the outcome exceeding any given value, which is useful for risk assessment.

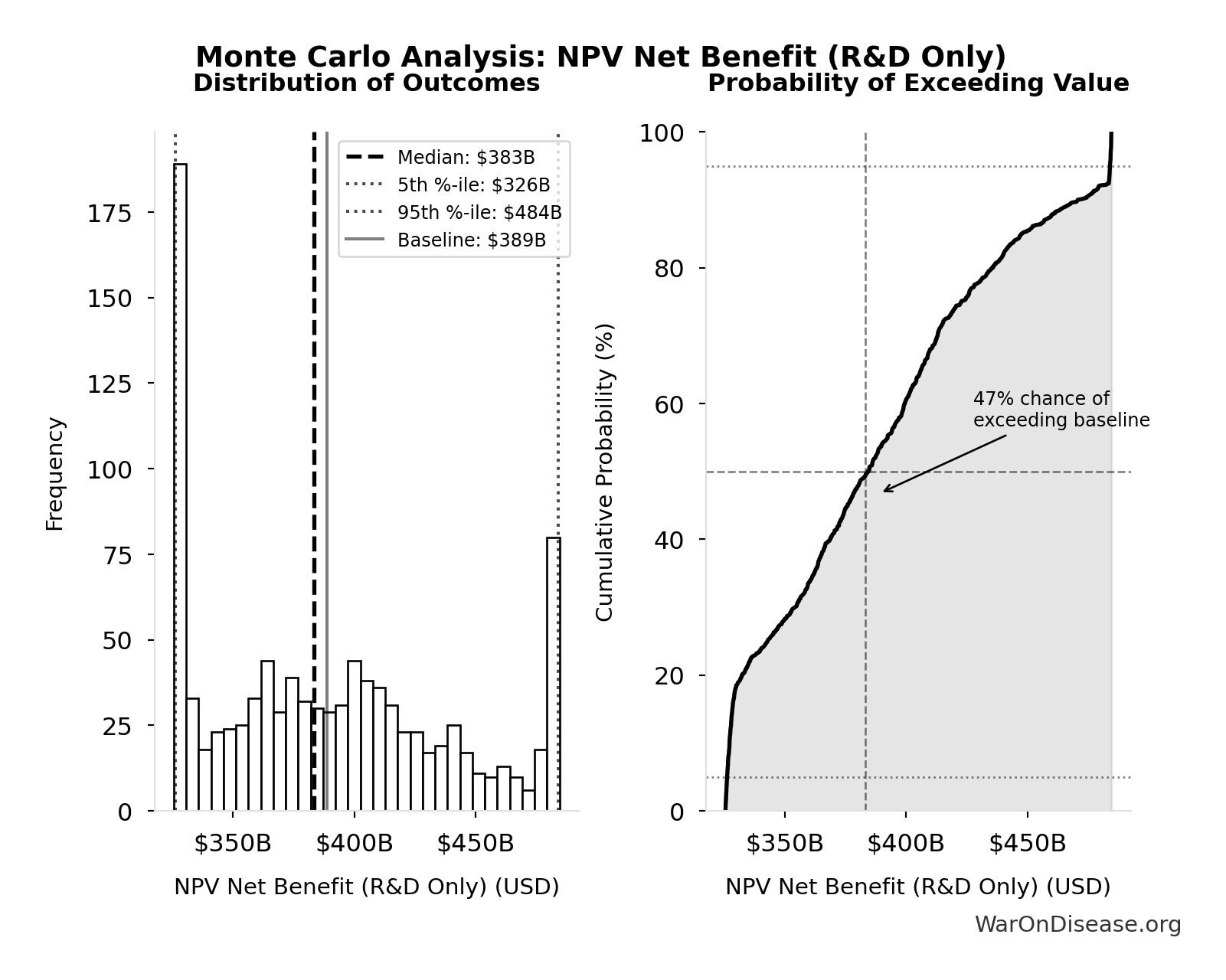

Simulation Results Summary: NPV Net Benefit (R&D Only)

| Statistic | Value |
|---|---|
| Baseline (deterministic) | $389 billion |
| Mean (expected value) | $390 billion |
| Median (50th percentile) | $383 billion |
| Standard Deviation | $50.7 billion |
| 90% Range (5th-95th percentile) | [$326 billion, $484 billion] |

The histogram shows the distribution of NPV Net Benefit (R&D Only) across 10,000 Monte Carlo simulations. The CDF (right) shows the probability of the outcome exceeding any given value, which is useful for risk assessment.

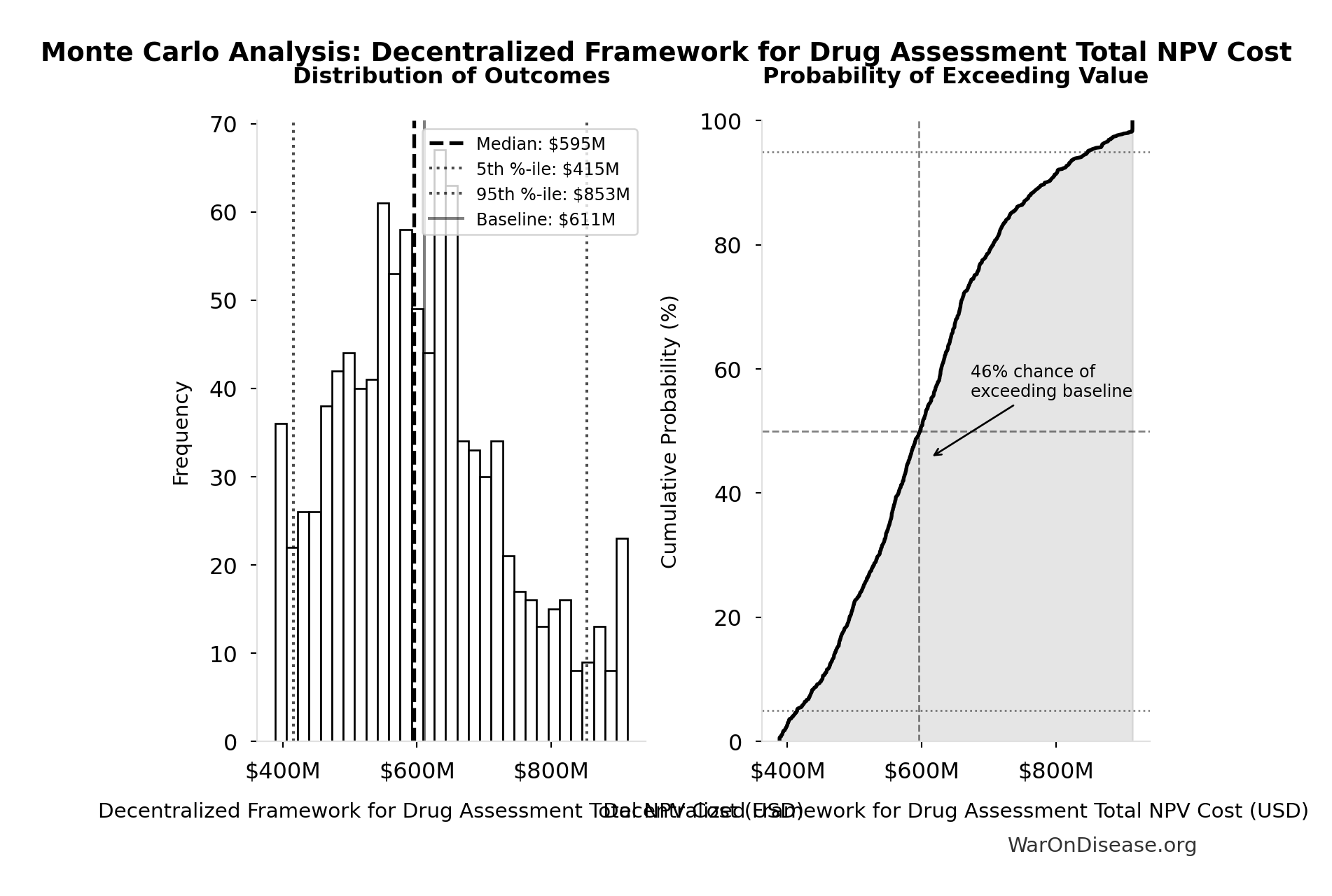

Simulation Results Summary: Decentralized Framework for Drug Assessment Total NPV Cost

| Statistic | Value |
|---|---|
| Baseline (deterministic) | $611 million |
| Mean (expected value) | $609 million |
| Median (50th percentile) | $595 million |
| Standard Deviation | $127 million |
| 90% Range (5th-95th percentile) | [$415 million, $853 million] |

The histogram shows the distribution of Decentralized Framework for Drug Assessment Total NPV Cost across 10,000 Monte Carlo simulations. The CDF (right) shows the probability of the outcome exceeding any given value, which is useful for risk assessment.

This exceedance probability chart shows the likelihood that ROI from Decentralized Framework for Drug Assessment R&D Savings Only will exceed any given threshold. Higher curves indicate more favorable outcomes with greater certainty.
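The three baseline figures in the tables above are mutually consistent if ROI is read as NPV net benefit divided by NPV cost. That definition is an inference from the reported numbers, not something the section states, so the sketch below is a consistency check rather than a restatement of the underlying model.

```python
# Deterministic baselines from the three simulation summaries above.
NPV_NET_BENEFIT_USD = 389e9   # NPV Net Benefit (R&D Only)
NPV_TOTAL_COST_USD = 611e6    # Total NPV Cost


def roi_ratio(net_benefit_usd: float, cost_usd: float) -> float:
    """ASSUMED definition: ROI = NPV net benefit / NPV cost."""
    if cost_usd <= 0:
        raise ValueError("cost must be positive")
    return net_benefit_usd / cost_usd


baseline_roi = roi_ratio(NPV_NET_BENEFIT_USD, NPV_TOTAL_COST_USD)
print(f"Implied baseline ROI: {baseline_roi:.0f}:1")
```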

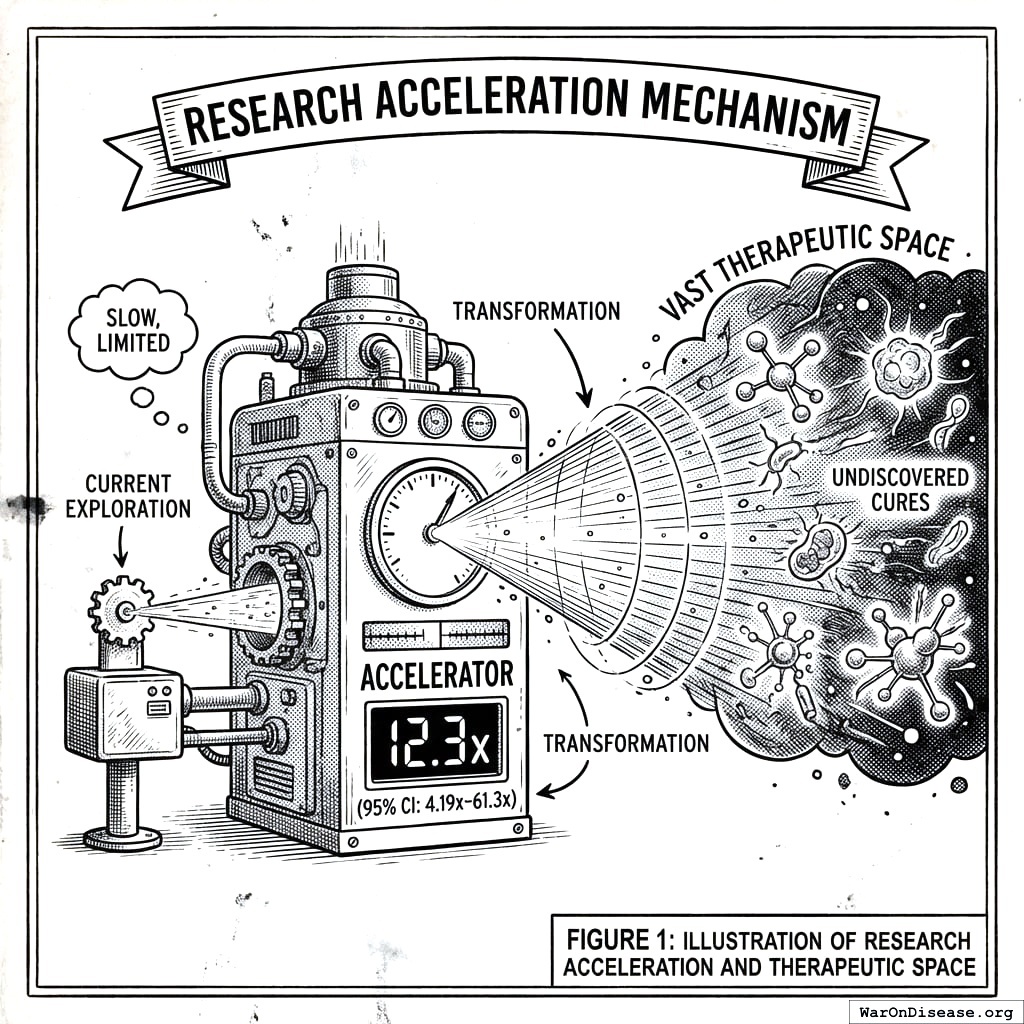

Research Acceleration Mechanism

The 12.3x (95% CI: 4.2x-61.4x) research acceleration transforms our ability to explore the vast therapeutic space where undiscovered cures already exist.

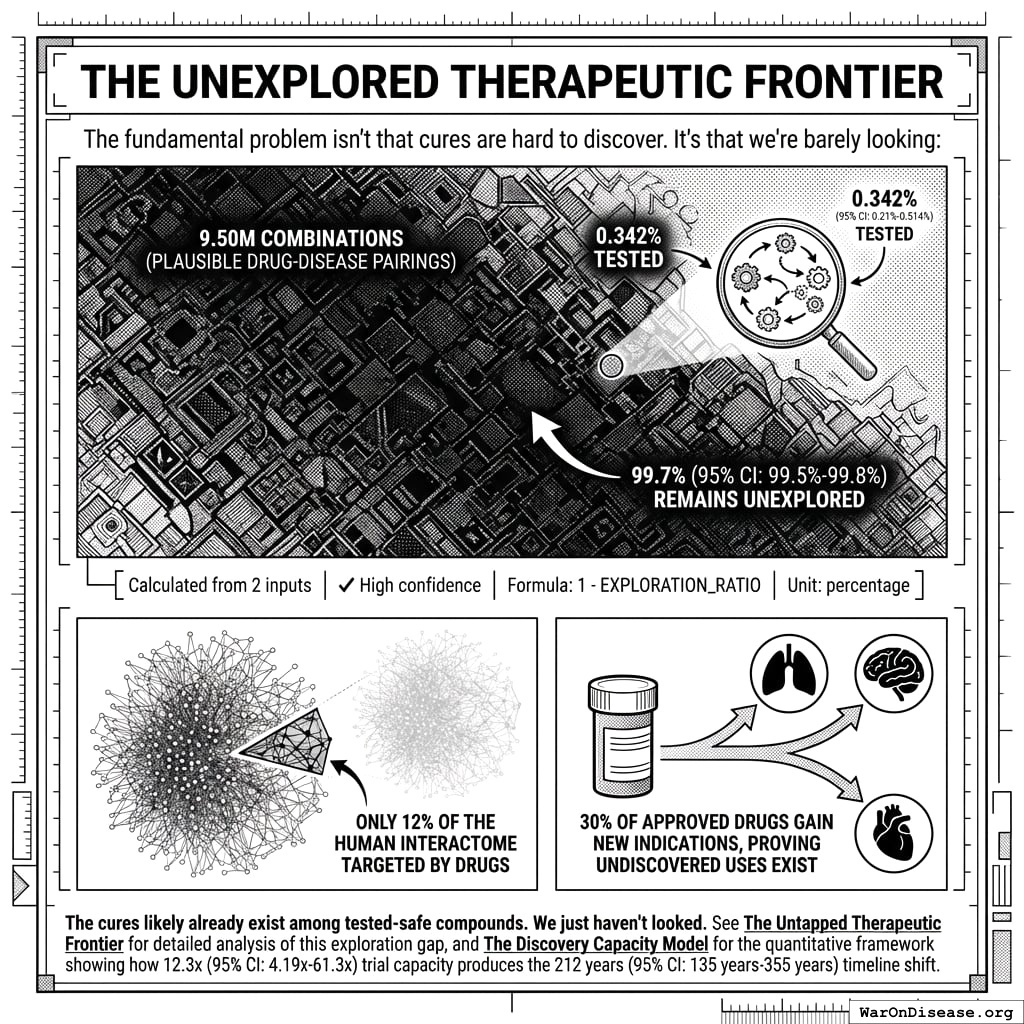

The Unexplored Therapeutic Frontier

The fundamental problem isn’t that cures are hard to discover. It’s that we’re barely looking:

- 9.5 million combinations (95% CI: 5.94 million combinations-13.9 million combinations) plausible drug-disease pairings exist (9,500 compounds (95% CI: 7,000 compounds-12,000 compounds) safe × 1,000 diseases (95% CI: 800 diseases-1,200 diseases))

- Only 0.342% (95% CI: 0%-1%) of these combinations have been tested - 99.7% (95% CI: 99%-100%) remains unexplored

- Only 12% of the human interactome has ever been targeted by drugs

- 30% of approved drugs gain new indications, proving undiscovered uses exist

\[
\begin{gathered}
Ratio_{explore} \\
= \frac{N_{tested}}{N_{combos}} \\
= \frac{32{,}500}{9.5M} \\
= 0.342\%
\end{gathered}
\]

where:

\[
\begin{gathered}
N_{combos} \\
= N_{safe} \times N_{diseases,trial} \\
= 9{,}500 \times 1{,}000 \\
= 9.5M
\end{gathered}
\]
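The exploration ratio is a two-line calculation; this sketch uses the central values from the equations above, with illustrative names.

```python
N_SAFE_COMPOUNDS = 9_500    # N_safe
N_DISEASES = 1_000          # N_{diseases,trial}
N_TESTED_PAIRINGS = 32_500  # N_tested

n_combos = N_SAFE_COMPOUNDS * N_DISEASES
explored_fraction = N_TESTED_PAIRINGS / n_combos
unexplored_fraction = 1 - explored_fraction

print(f"Plausible pairings: {n_combos:,}")
print(f"Explored:   {explored_fraction:.3%}")
print(f"Unexplored: {unexplored_fraction:.1%}")
```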

The cures likely already exist among tested-safe compounds. We just haven’t looked. See The Untapped Therapeutic Frontier for detailed analysis of this exploration gap, and The Discovery Capacity Model for the quantitative framework showing how 12.3x (95% CI: 4.2x-61.4x) trial capacity produces the 212 years (95% CI: 135 years-355 years) timeline shift.

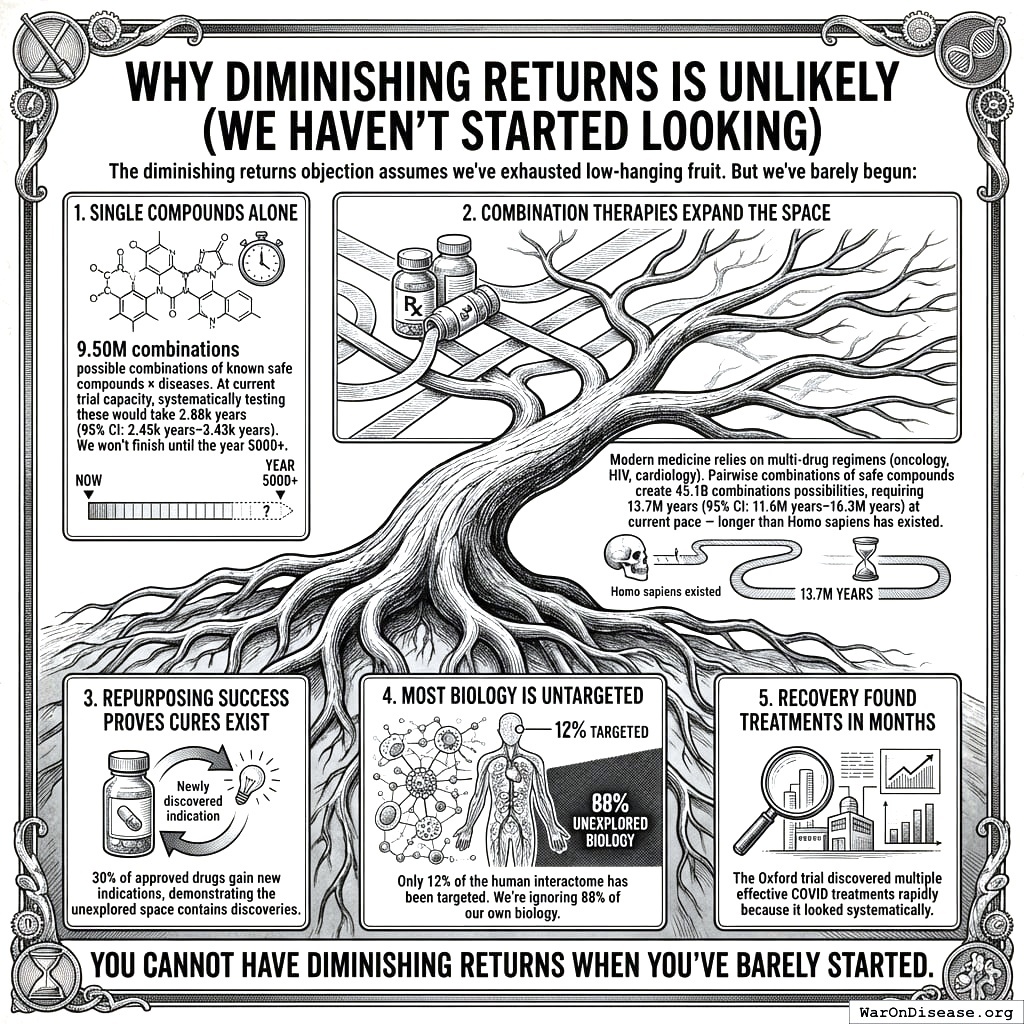

Addressing the Returns Question: Diminishing, Linear, or Compounding?

A common objection is that “more trials won’t produce proportionally more cures” - the diminishing returns hypothesis. This deserves serious consideration, but the evidence suggests the opposite may be true.

Why Diminishing Returns Is Unlikely (We Haven’t Started Looking)

The diminishing returns objection assumes we’ve exhausted low-hanging fruit. But we’ve barely begun:

- Single compounds alone: 9.5 million (95% CI: 5.94 million-13.9 million) possible pairings of known safe compounds × diseases. At current trial capacity, systematically testing these would take 2,879 years (95% CI: 1,765 years-4,386 years). At that pace, the work would not finish until around the year 4900.

\[
\begin{gathered}
T_{explore,safe} \\
= \frac{N_{combos}}{Trials_{ann,curr}} \\
= \frac{9.5M}{3{,}300} \\
\approx 2{,}879
\end{gathered}
\]

where:

\[
\begin{gathered}
N_{combos} \\
= N_{safe} \times N_{diseases,trial} \\
= 9{,}500 \times 1{,}000 \\
= 9.5M
\end{gathered}
\]

- Combination therapies expand the space: Modern medicine relies on multi-drug regimens (oncology, HIV, cardiology). Pairwise combinations of safe compounds create 45.1 billion (95% CI: 21.5 billion-81.5 billion) possibilities, requiring 13.7 million years (95% CI: 6.5 million years-25.4 million years) at current pace, longer than Homo sapiens has existed.

- Repurposing success proves cures exist: 30% of approved drugs gain new indications, demonstrating the unexplored space contains discoveries.

- Most biology is untargeted: Only 12% of the human interactome has been targeted. We’re ignoring 88% of our own biology.

- RECOVERY found treatments in months: The Oxford trial discovered multiple effective COVID treatments rapidly because it looked systematically.
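The exploration-time figures in the list above follow from dividing the size of the search space by annual trial throughput. The sketch below assumes that "pairwise combinations" means unordered pairs of safe compounds, each crossed with every disease; that reading is an inference, chosen because it reproduces the 45.1 billion figure.

```python
import math

N_SAFE_COMPOUNDS = 9_500
N_DISEASES = 1_000
TRIALS_PER_YEAR = 3_300  # current annual trial capacity used in the formula above


def years_to_explore(n_candidates: int, trials_per_year: int = TRIALS_PER_YEAR) -> float:
    """Years needed to test every candidate once at a fixed annual throughput."""
    return n_candidates / trials_per_year


single_combos = N_SAFE_COMPOUNDS * N_DISEASES
# ASSUMPTION: unordered compound pairs, each paired with every disease.
pairwise_combos = math.comb(N_SAFE_COMPOUNDS, 2) * N_DISEASES

years_single = years_to_explore(single_combos)
years_pairwise = years_to_explore(pairwise_combos)

print(f"Single compounds: {single_combos:,} pairings, {years_single:,.0f} years")
print(f"Pairwise:         {pairwise_combos / 1e9:.1f}B pairings, "
      f"{years_pairwise / 1e6:.1f}M years")
```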

You cannot have diminishing returns when you’ve barely started.

Mathematical Framework: When Would Diminishing Returns Dominate?

We can formalize the competing models to identify when diminishing returns would actually matter.

Model 1: Linear (Baseline)

\[ T_{discovered} = k_0 \cdot N_{trials} \]

Where \(k_0\) is the constant discovery rate (effective treatments per trial). This assumes the therapeutic space is sampled uniformly at random.

Model 2: Diminishing Returns (Pessimistic)

As we exhaust the therapeutic space, the hit rate decreases:

\[ k_{dim}(s) = k_0 \cdot (1 - s) \]

Where \(s = S_{explored}/S_{total}\) is the fraction of therapeutic space already tested. At current exploration (\(s < 0.01\)), this gives \(k_{dim} \approx 0.99 \cdot k_0\), virtually identical to linear.

Model 3: Learning/Compounding (Optimistic)

Each trial improves our biological models, increasing future hit rates:

\[ k_{learn}(n) = k_0 \cdot \left(1 + \alpha \cdot \ln(1 + n)\right) \]

Where \(\alpha\) is the learning coefficient and \(n\) is cumulative trials completed. Even modest learning (\(\alpha = 0.1\)) with 100,000 trials yields \(k_{learn} \approx 2.15 \cdot k_0\).

Model 4: Combined (Realistic)

Both effects operate simultaneously:

\[ k_{combined}(s, n) = k_0 \cdot (1 - s) \cdot \left(1 + \alpha \cdot \ln(1 + n)\right) \]

The Crossover Point: When Does Depletion Dominate Learning?

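Diminishing returns dominates once depletion outweighs learning, that is, once the combined hit rate falls below the baseline (\(k_{combined} < k_0\)). Setting \((1 - s)\left(1 + \alpha \cdot \ln(1 + n)\right) = 1\) and solving for the critical exploration fraction: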

\[ s_{crossover} = 1 - \frac{1}{1 + \alpha \cdot \ln(1 + n)} \]

| Learning Coefficient (\(\alpha\)) | Trials Completed (\(n\)) | Crossover Exploration (\(s_{crossover}\)) |
|---|---|---|
| 0.05 (weak) | 100,000 | 37% |
| 0.10 (modest) | 100,000 | 53% |
| 0.15 (strong) | 100,000 | 63% |

Interpretation: Even with weak learning effects, diminishing returns only dominates after exploring 37%+ of therapeutic space. With modest learning, the crossover occurs at 53%+ exploration.
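The four hit-rate models and the crossover formula can be written out in a few lines. This sketch normalizes \(k_0 = 1\) so the outputs read as multiples of the baseline hit rate; function names are illustrative. The computed crossover values agree with the table to within a percentage point of rounding.

```python
import math


def learning_factor(alpha: float, n_trials: float) -> float:
    """Model 3 multiplier: 1 + alpha * ln(1 + n)."""
    return 1.0 + alpha * math.log(1.0 + n_trials)


def k_combined(s: float, n_trials: float, alpha: float, k0: float = 1.0) -> float:
    """Model 4: depletion (1 - s) times learning, scaled by the baseline rate k0."""
    return k0 * (1.0 - s) * learning_factor(alpha, n_trials)


def crossover_fraction(alpha: float, n_trials: float) -> float:
    """Exploration fraction s at which k_combined falls back to k0."""
    return 1.0 - 1.0 / learning_factor(alpha, n_trials)


N_TRIALS = 100_000
for alpha in (0.05, 0.10, 0.15):
    s_star = crossover_fraction(alpha, N_TRIALS)
    print(f"alpha={alpha:.2f}: learning x{learning_factor(alpha, N_TRIALS):.2f}, "
          f"crossover at s={s_star:.1%}")
```

At today's exploration level (\(s < 0.01\)) the depletion term is at least 0.99, so `k_combined` is driven almost entirely by the learning factor.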

Timeline to Crossover:

At current exploration of 0.342% (95% CI: 0%-1%) (<1%), reaching the 53% crossover would require ~1,500 years at current pace or ~125 years with the framework. For combination therapies (45.1 billion combinations (95% CI: 21.5 billion combinations-81.5 billion combinations)), reaching 53% exploration would take millions of years.

Conclusion: For any plausible planning horizon, learning effects dominate. Diminishing returns is a theoretical concern for civilizations operating on multi-century timescales, not a practical constraint for the next 100+ years of medical research.

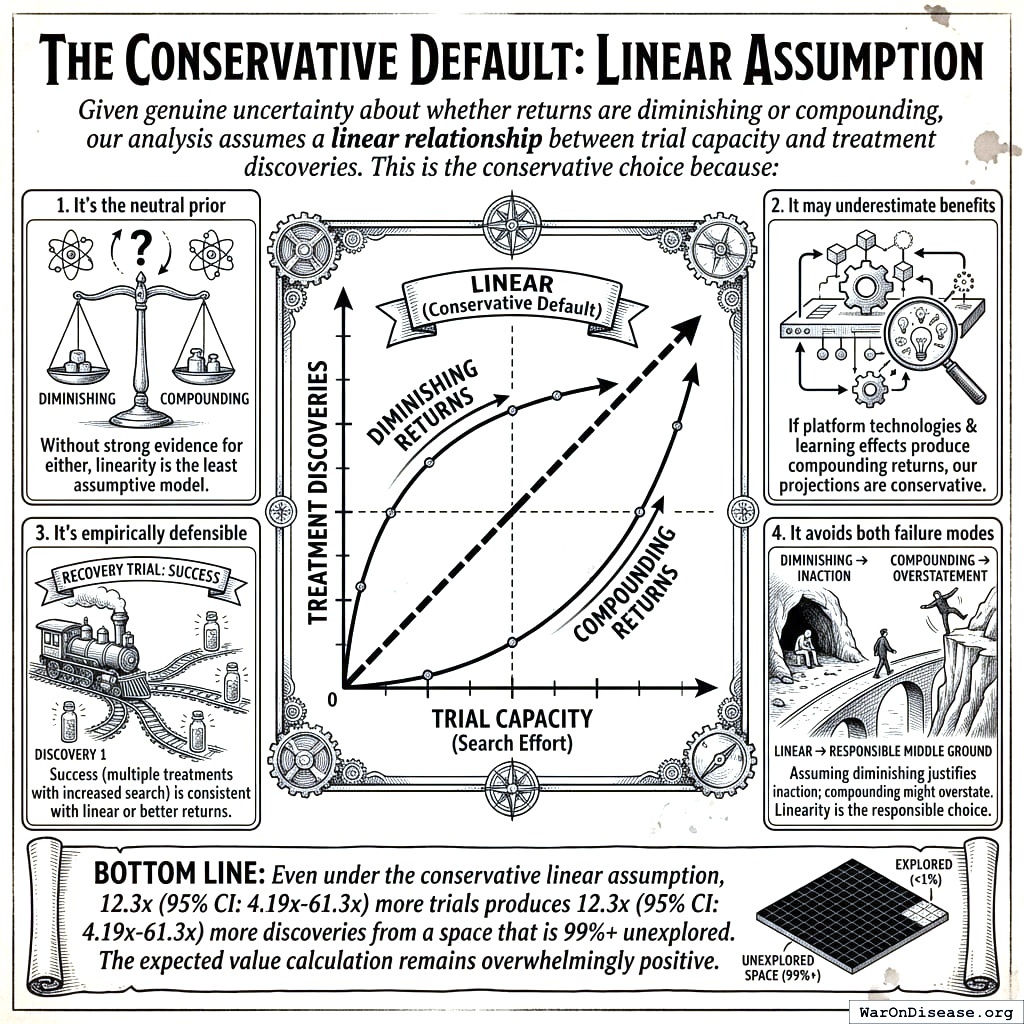

The Conservative Default: Linear Assumption

Given genuine uncertainty about whether returns are diminishing or compounding, our analysis assumes a linear relationship between trial capacity and treatment discoveries. This is the conservative choice because:

- It’s the neutral prior: Without strong evidence for either diminishing or compounding returns, linearity is the least assumptive model

- It may underestimate benefits: If platform technologies and learning effects produce compounding returns, our projections are conservative

- It’s empirically defensible: The RECOVERY trial’s success (multiple treatments found with increased search) is consistent with linear or better returns

- It avoids both failure modes: Assuming diminishing returns would justify inaction; assuming compounding returns might overstate benefits. Linearity is the responsible middle ground

Bottom line: Even under the conservative linear assumption, 12.3x (95% CI: 4.2x-61.4x) more trials produce 12.3x (95% CI: 4.2x-61.4x) more discoveries from a space that is 99%+ unexplored. The expected value calculation remains overwhelmingly positive.

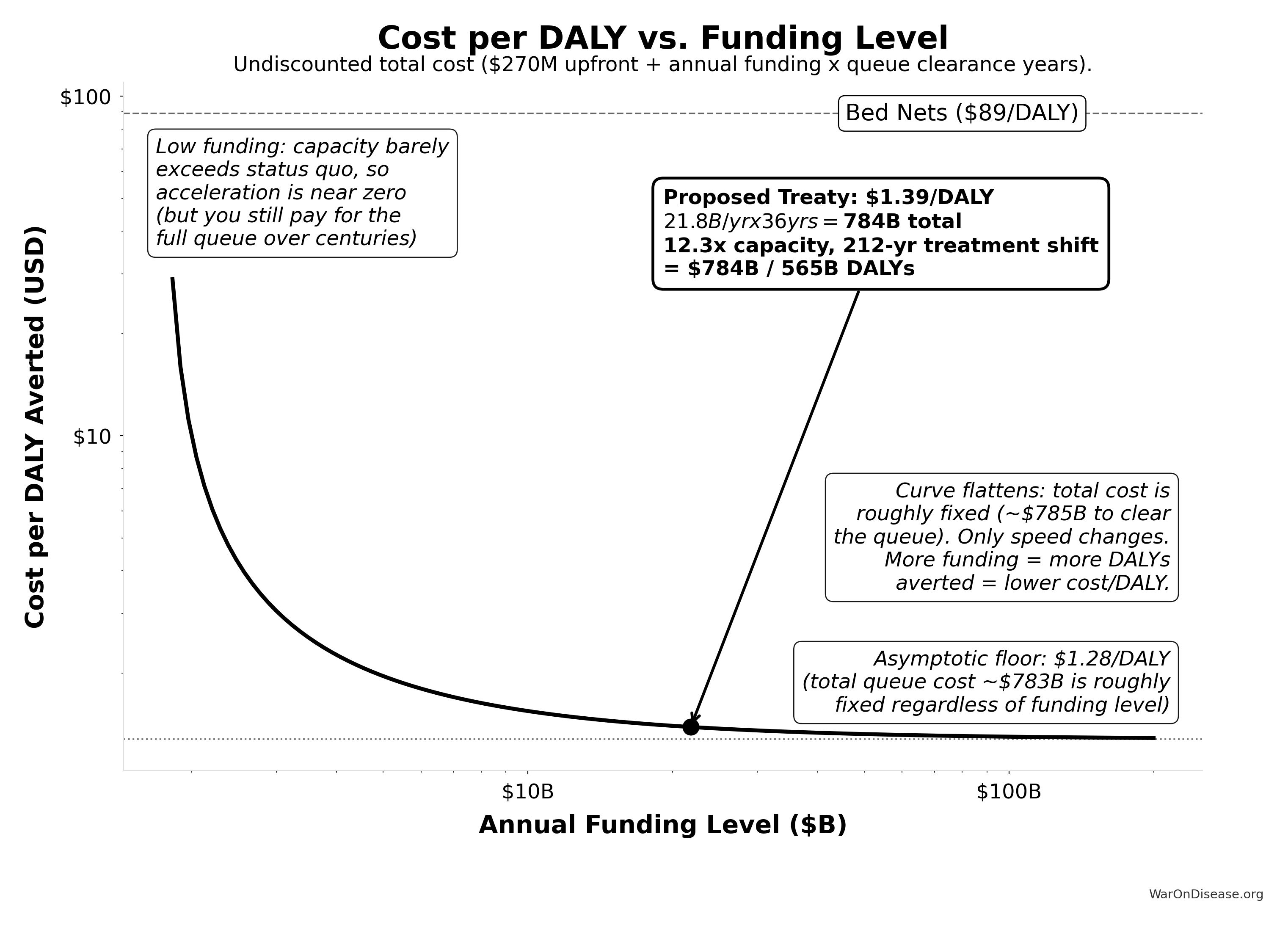

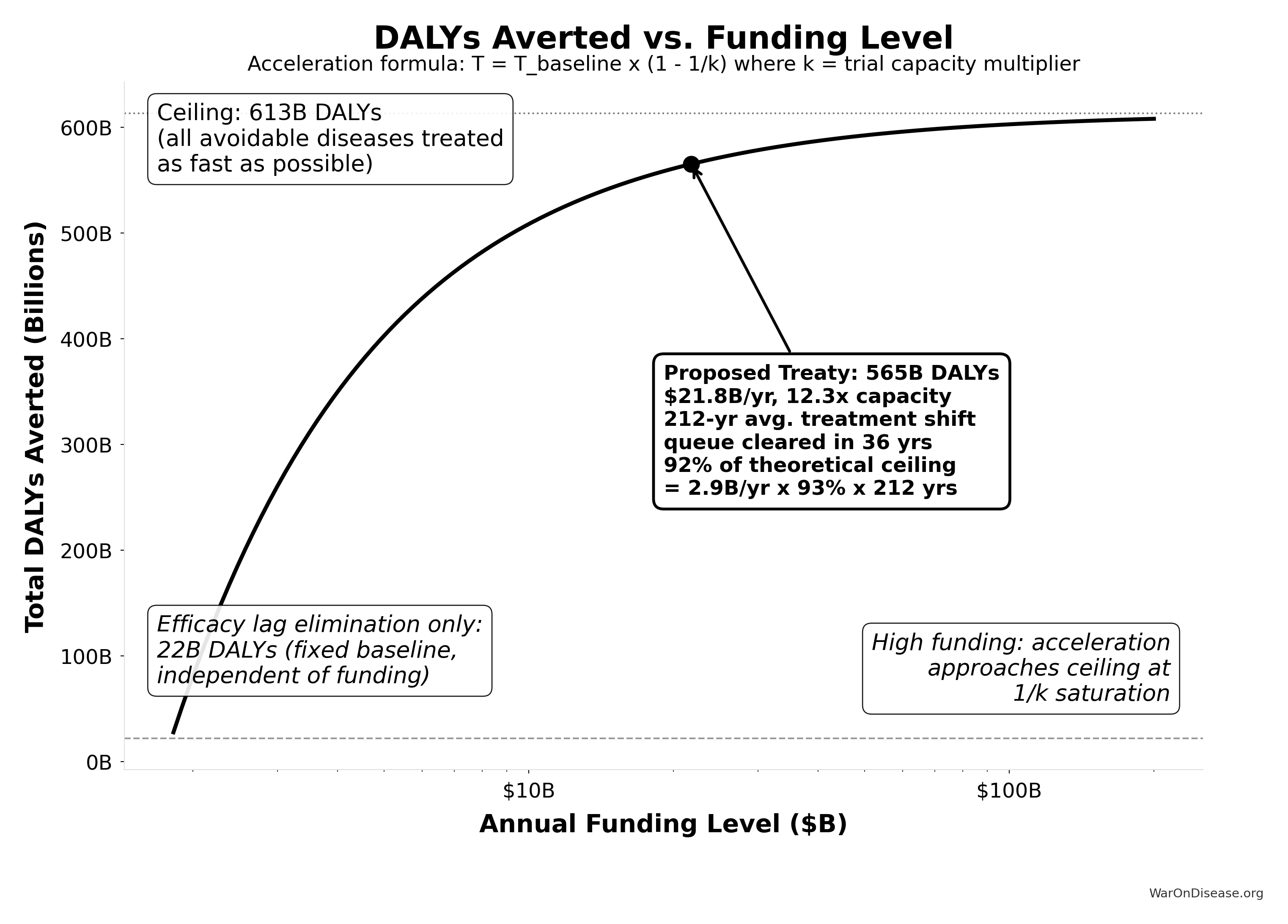

Funding Level vs. Cost-Effectiveness

While the analysis above addresses whether trials produce proportionally more cures, a separate question is how funding level affects cost per DALY averted. The acceleration formula \(T_{accel} = T_{baseline} \times (1 - 1/k)\), where \(k\) is the trial capacity multiplier, produces natural diminishing returns: each additional dollar buys less acceleration as \(k\) grows. Figure 70.1 shows cost per DALY rising with funding, while Figure 70.2 shows total DALYs approaching an asymptotic ceiling.

Verification at proposed funding ($21.8B/yr):

Trial capacity multiplier: 12.3x

Queue clearance: 36.0 years

Treatment acceleration: 203.7 years

Total timeline shift: 211.9 years

DALYs averted: 565.2B

Upfront cost: $270M

Total undiscounted cost: $784.2B

Cost per DALY: $1.39

Asymptotic floor: $1.28/DALY (total queue cost: $783B)

Ceiling DALYs: 613B

Utilization: 92.2% of ceiling

Efficacy lag baseline: 22B DALYs

The proposed funding level ($21.8 billion/year) sits in the steep part of the curve, where cost-effectiveness is strongest. Even at much higher funding levels, the cost per DALY remains far below the bed nets benchmark ($89 (95% CI: $78-$100)/DALY).
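The diminishing returns in the acceleration formula, and the arithmetic behind the verification figures, can be checked with a few lines. This is a hedged sketch: the function name and the choice of sample multipliers are assumptions, and the baseline wait of 6,650 / 15 / 2 years is taken from the derivation chains elsewhere in this document.

```python
def acceleration_years(t_baseline: float, k: float) -> float:
    """T_accel = T_baseline * (1 - 1/k), defined for k >= 1."""
    if k < 1:
        raise ValueError("capacity multiplier k must be at least 1")
    return t_baseline * (1.0 - 1.0 / k)


# Average status-quo wait in years (untreated diseases / discoveries per year / 2).
T_BASELINE = 6650 / 15 / 2

# Each step up in k adds less acceleration than the one before it.
for k in (1, 2, 5, 12.3, 50, 100):
    print(f"k={k:>5}: {acceleration_years(T_BASELINE, k):6.1f} years")

# Arithmetic behind the reported verification figures.
cost_per_daly = 784.2e9 / 565.2e9   # total undiscounted cost / DALYs averted
utilization = 565e9 / 613e9         # DALYs averted / ceiling DALYs
floor_per_daly = 783e9 / 613e9      # total queue cost / ceiling DALYs
print(f"cost per DALY ${cost_per_daly:.2f}, "
      f"utilization {utilization:.1%}, floor ${floor_per_daly:.2f}")
```

Because the acceleration is bounded above by `T_BASELINE`, total DALYs averted approach a ceiling no matter how large `k` becomes.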

Data Sources and Methodological Notes

Cost of Current Drug Development:

- The Tufts Center for the Study of Drug Development is often cited for estimates of $1.0-$2.6 billion per drug.

- Journal articles and industry reports (IQVIA, Deloitte) also highlight $2+ billion figures.

- Oxford RECOVERY trial: $500 (95% CI: $400-$2,500)/patient (exceptional NHS/COVID conditions). ADAPTABLE trial: $929 (95% CI: $929-$1,400)/patient (typical US pragmatic trial). Our projections use $929 (95% CI: $97-$3,000)/patient based on ADAPTABLE; confidence interval captures uncertainty.

ROI Calculation Method:

- Simplified approach comparing aggregated R&D spending to potential savings.

- Does not account for intangible factors (opportunity costs, IP complexities, time-value of money) beyond a basic Net Present Value (NPV) perspective.

Scale & Adoption Rates:

- The largest uncertainties revolve around uptake speed, regulatory harmonization, and participant willingness.

- Projections assume widespread adoption by major pharmaceutical companies and global health authorities.

Secondary Benefits:

- Quality-of-life improvements, lower healthcare costs from faster drug innovation, and potentially fewer adverse events from earlier detection.

- These are positive externalities that can significantly enlarge real ROI from a societal perspective.

Conclusion

A decentralized FDA transforms the centralized regulatory approach into a global, decentralized model, reducing clinical trial costs by 44.1x (95% CI: 39.4x-89.1x), accelerating approval timelines, and expanding therapeutic coverage to neglected diseases. At a 10-year NPV total cost of $611 million (95% CI: $415 million-$853 million) (upfront plus discounted annual operations), it generates $389 billion (95% CI: $326 billion-$484 billion) in net R&D savings. Given that the pharmaceutical industry collectively spends $60 billion (95% CI: $50 billion-$75 billion) annually on clinical trials, a 97.7% (95% CI: 97%-99%) cost reduction yields an ROI of 637 (95% CI: 569-790):1 at scale.

Beyond direct savings, the effects on medical progress are substantial: expanded therapeutic exploration, real-time treatment effectiveness rankings, and research on off-patent treatments that currently lack commercial incentives. With appropriate privacy protections and international coordination, this framework enables evidence-based personalized medicine at global scale.
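The headline ratios in this conclusion follow from point estimates quoted in the document and can be cross-checked as below. This is only a sanity check on the central values, assuming the $41,000 traditional and $929 pragmatic per-patient costs from the summary; it does not reproduce the confidence intervals.

```python
TRADITIONAL_COST_PER_PATIENT = 41_000.0  # USD, traditional trial
PRAGMATIC_COST_PER_PATIENT = 929.0       # USD, pragmatic trial
NET_RD_SAVINGS_NPV = 389e9               # USD, 10-year net R&D savings
TOTAL_COST_NPV = 611e6                   # USD, 10-year NPV total cost

cost_ratio = TRADITIONAL_COST_PER_PATIENT / PRAGMATIC_COST_PER_PATIENT
cost_reduction = 1.0 - PRAGMATIC_COST_PER_PATIENT / TRADITIONAL_COST_PER_PATIENT
roi = NET_RD_SAVINGS_NPV / TOTAL_COST_NPV

print(f"cost ratio {cost_ratio:.1f}x, reduction {cost_reduction:.1%}, ROI {roi:.0f}:1")
```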

Disclaimer

All figures in this document are estimates based on publicly available information, industry benchmarks, and simplifying assumptions. Real-world costs, savings, and ROI will vary greatly depending on the scope of implementation, the speed of adoption, regulatory cooperation, and numerous other factors. Nonetheless, this high-level exercise illustrates the substantial potential gains from a global, decentralized, continuously learning clinical trial and regulatory ecosystem.

Verification: Complete Derivation Chains

For economist verification, this section provides complete derivation chains for all headline figures. Each metric traces back to primary data sources.

Trial Capacity Multiplier Derivation

Result: 12.3x (95% CI: 4.2x-61.4x)

Step 1: Current trial capacity

- Current trial participants: 1.9 million patients/year (95% CI: 1.5 million patients/year-2.3 million patients/year)

- Current first treatments: 15 diseases/year (95% CI: 8 diseases/year-30 diseases/year)

Step 2: Capacity with $21.8 billion/year

- Cost per patient: $929 (95% CI: $97-$3,000)

- Fundable patients: 23.4 million patients/year (95% CI: 9.46 million patients/year-97 million patients/year)

Step 3: Calculate multiplier

\[

\begin{gathered}

k_{capacity} \\

= \frac{N_{fundable,dFDA}}{Slots_{curr}} \\

= \frac{23.4M}{1.9M} \\

= 12.3

\end{gathered}

\]

where:

\[

\begin{gathered}

N_{fundable,dFDA} \\

= \frac{Subsidies_{dFDA,ann}}{Cost_{pragmatic,pt}} \\

= \frac{\$21.8B}{\$929} \\

= 23.4M

\end{gathered}

\]

where:

\[

\begin{gathered}

Subsidies_{dFDA,ann} \\

= Funding_{dFDA,ann} - OPEX_{dFDA} \\

= \$21.8B - \$40M \\

\approx \$21.8B

\end{gathered}

\]

where:

\[

\begin{gathered}

OPEX_{dFDA} \\

= Cost_{platform} + Cost_{staff} + Cost_{infra} \\

+ Cost_{regulatory} + Cost_{community} \\

= \$15M + \$10M + \$8M + \$5M + \$2M \\

= \$40M

\end{gathered}

\]
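The chain above can be replayed numerically from its inputs. The sketch below uses only the point estimates shown in the derivation; the variable and function names are illustrative assumptions, not part of the original model.

```python
# Annual operating cost components in USD, as listed in the OPEX equation.
OPEX_COMPONENTS = {
    "platform": 15e6,
    "staff": 10e6,
    "infrastructure": 8e6,
    "regulatory": 5e6,
    "community": 2e6,
}
ANNUAL_FUNDING = 21.8e9      # USD per year
COST_PER_PATIENT = 929.0     # USD per pragmatic-trial patient
CURRENT_SLOTS = 1.9e6        # current trial participants per year


def capacity_multiplier(funding, opex, cost_per_patient, current_slots):
    """Return (subsidies, fundable patients, multiplier k) for the chain."""
    subsidies = funding - opex
    fundable_patients = subsidies / cost_per_patient
    return subsidies, fundable_patients, fundable_patients / current_slots


opex = sum(OPEX_COMPONENTS.values())
subsidies, fundable, k = capacity_multiplier(
    ANNUAL_FUNDING, opex, COST_PER_PATIENT, CURRENT_SLOTS
)
print(f"OPEX ${opex / 1e6:.0f}M, subsidies ${subsidies / 1e9:.2f}B, "
      f"fundable {fundable / 1e6:.1f}M patients/year, k = {k:.1f}")
```

Operating costs are small relative to funding, so subtracting them barely changes the multiplier.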

Timeline Shift Derivation

Result: 212 years (95% CI: 135 years-355 years)

Components:

| Component | Value | Source |

|---|---|---|

| Efficacy Lag Elimination | 8.2 years (95% CI: 4.85 years-11.5 years) | FDA drug approval timeline data |

| Discovery Acceleration | 204 years | Capacity vs. backlog model |

| Combined Total | 212 years (95% CI: 135 years-355 years) | Sum of components |

\[

T_{accel,max} = T_{accel} + T_{lag} = 204 + 8.2 = 212

\]

where:

\[

\begin{gathered}

T_{accel} \\

= T_{first,SQ} \times \left(1 - \frac{1}{k_{capacity}}\right) \\

= 222 \times \left(1 - \frac{1}{12.3}\right) \\

= 204

\end{gathered}

\]

where:

\[

\begin{gathered}

T_{first,SQ} \\

= T_{queue,SQ} \times 0.5 \\

= 443 \times 0.5 \\

= 222

\end{gathered}

\]

where:

\[

\begin{gathered}

T_{queue,SQ} \\

= \frac{N_{untreated}}{Treatments_{new,ann}} \\

= \frac{6{,}650}{15} \\

= 443

\end{gathered}

\]

where:

\[

\begin{gathered}

N_{untreated} \\

= N_{rare} \times 0.95 \\

= 7{,}000 \times 0.95 \\

= 6{,}650

\end{gathered}

\]

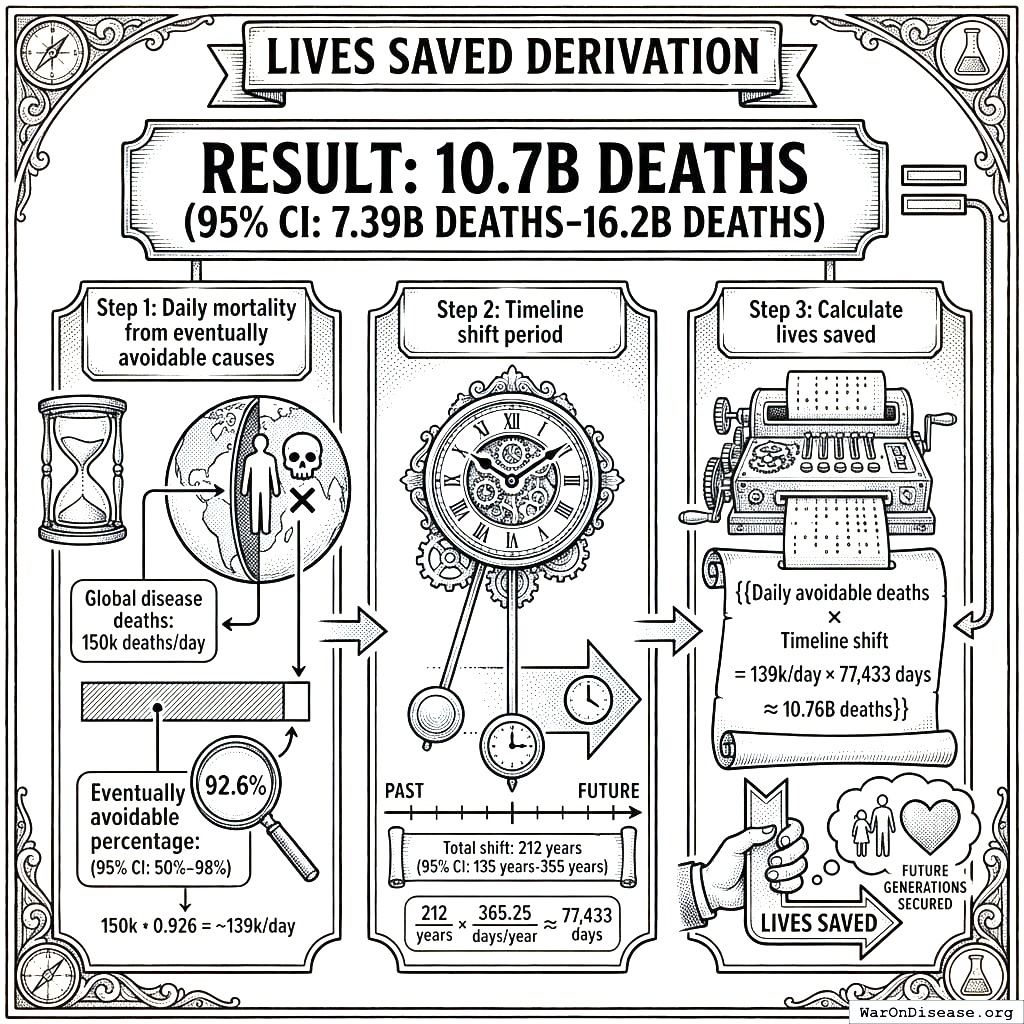
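The timeline shift can be recomputed end to end from the inputs in this derivation. This is a minimal sketch under the document's point estimates; the function name, argument names, and the returned dictionary layout are assumptions made for illustration.

```python
def timeline_shift(
    n_rare: float = 7000,                 # rare diseases
    untreated_share: float = 0.95,        # share without an approved treatment
    new_treatments_per_year: float = 15,  # status-quo discovery rate
    k_capacity: float = 12.3,             # trial capacity multiplier
    efficacy_lag_years: float = 8.2,      # post-safety efficacy delay
) -> dict:
    """Replay the timeline-shift chain and return every intermediate value."""
    n_untreated = n_rare * untreated_share
    t_queue_sq = n_untreated / new_treatments_per_year   # status-quo queue
    t_first_sq = 0.5 * t_queue_sq                        # average wait
    t_accel = t_first_sq * (1.0 - 1.0 / k_capacity)      # discovery acceleration
    return {
        "n_untreated": n_untreated,
        "t_queue_sq": t_queue_sq,
        "t_queue_dfda": t_queue_sq / k_capacity,
        "t_first_sq": t_first_sq,
        "t_accel": t_accel,
        "t_total": t_accel + efficacy_lag_years,
    }


for name, value in timeline_shift().items():
    print(f"{name}: {value:,.1f}")
```

Small differences from the rounded values in the equations (for example 203.6 versus 204) come from carrying unrounded intermediates.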

Lives Saved Derivation

Result: 10.7 billion deaths (95% CI: 7.4 billion deaths-16.2 billion deaths)

Step 1: Daily mortality from eventually avoidable causes

- Global disease deaths: 150,000/day [5]

- Eventually avoidable percentage: 92.6% (95% CI: 50%-98%)

Step 2: Timeline shift period

- Total shift: 212 years (95% CI: 135 years-355 years)

Step 3: Calculate lives saved (the factor 338 is 365 days/year × 92.6% eventually avoidable ≈ 338)

\[

\begin{gathered}

Lives_{max} \\

= Deaths_{disease,daily} \times T_{accel,max} \times 338 \\

= 150{,}000 \times 212 \times 338 \\

= 10.7B

\end{gathered}

\]

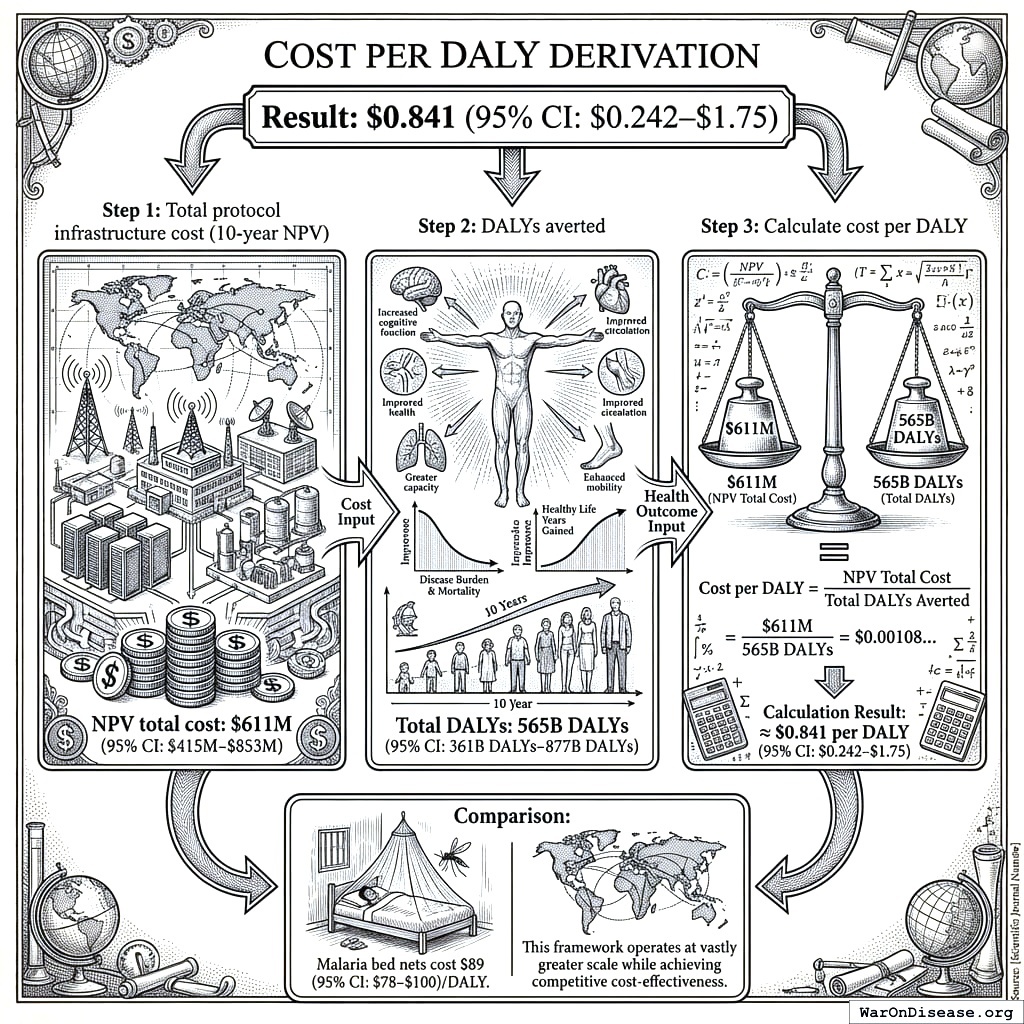
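The lives-saved figure can be checked with the inputs from Steps 1 and 2. One assumption is made explicit here: the constant 338 in the formula is read as 365 days per year times the 92.6% eventually avoidable share, which reproduces it to rounding. Names are illustrative.

```python
DAILY_DISEASE_DEATHS = 150_000   # global disease deaths per day
AVOIDABLE_SHARE = 0.926          # eventually avoidable fraction
DAYS_PER_YEAR = 365
TIMELINE_SHIFT_YEARS = 212       # total timeline shift

# Assumption: this product is the "338" that appears in the formula.
avoidable_days_per_year = DAYS_PER_YEAR * AVOIDABLE_SHARE

lives_saved = DAILY_DISEASE_DEATHS * avoidable_days_per_year * TIMELINE_SHIFT_YEARS
print(f"factor {avoidable_days_per_year:.0f}, lives saved {lives_saved / 1e9:.1f}B")
```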

Cost per DALY Derivation

Result: $0.842 (95% CI: $0.242-$1.75)

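Step 1: Discounted cost of direct trial funding over the queue-clearance period

- NPV of direct funding: $476B ($21.8B/year over 36 years, discounted at 3%)

- This numerator is distinct from the much smaller $611 million (95% CI: $415 million-$853 million) 10-year NPV of platform costs

Step 2: DALYs averted

- DALYs averted: 565 billion DALYs (95% CI: 361 billion DALYs-877 billion DALYs)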

Step 3: Calculate cost per DALY

\[

\begin{gathered}

Cost_{direct,DALY} \\

= \frac{NPV_{direct}}{DALYs_{max}} \\

= \frac{\$476B}{565B} \\

= \$0.842

\end{gathered}

\]

where:

\[

\begin{gathered}

NPV_{direct} \\

= Funding_{dFDA,ann} \times \frac{1 - (1 + r_{discount})^{-T_{queue,dFDA}}}{r_{discount}} \\

= \$21.8B \times \frac{1 - 1.03^{-36}}{0.03} \\

= \$476B

\end{gathered}

\]
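The $476B figure is consistent with a standard annuity present value of $21.8B per year at a 3% discount rate, taking the 36-year clearance time derived just below as given. The check below is a sketch under that reading, assuming end-of-year payments; the function name is illustrative.

```python
def annuity_present_value(payment: float, rate: float, years: float) -> float:
    """Present value of a level annual payment made at the end of each year."""
    if rate == 0:
        return payment * years
    return payment * (1.0 - (1.0 + rate) ** (-years)) / rate


ANNUAL_FUNDING = 21.8e9   # USD per year
DISCOUNT_RATE = 0.03
QUEUE_YEARS = 36          # queue clearance time with the framework
DALYS_AVERTED = 565e9

npv_direct = annuity_present_value(ANNUAL_FUNDING, DISCOUNT_RATE, QUEUE_YEARS)
cost_per_daly = npv_direct / DALYS_AVERTED
print(f"NPV ${npv_direct / 1e9:.0f}B, cost per DALY ${cost_per_daly:.3f}")
```

Discounting is what separates this figure from the undiscounted total of roughly $784B (21.8 × 36).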

where:

\[

\begin{gathered}

T_{queue,dFDA} \\

= \frac{T_{queue,SQ}}{k_{capacity}} \\

= \frac{443}{12.3} \\

= 36

\end{gathered}